Do we have agents already?

Jared Moore and David Gottlieb

Nutshell

If you’re making an artificial moral agent from the ground up, what do you need?

Motivation

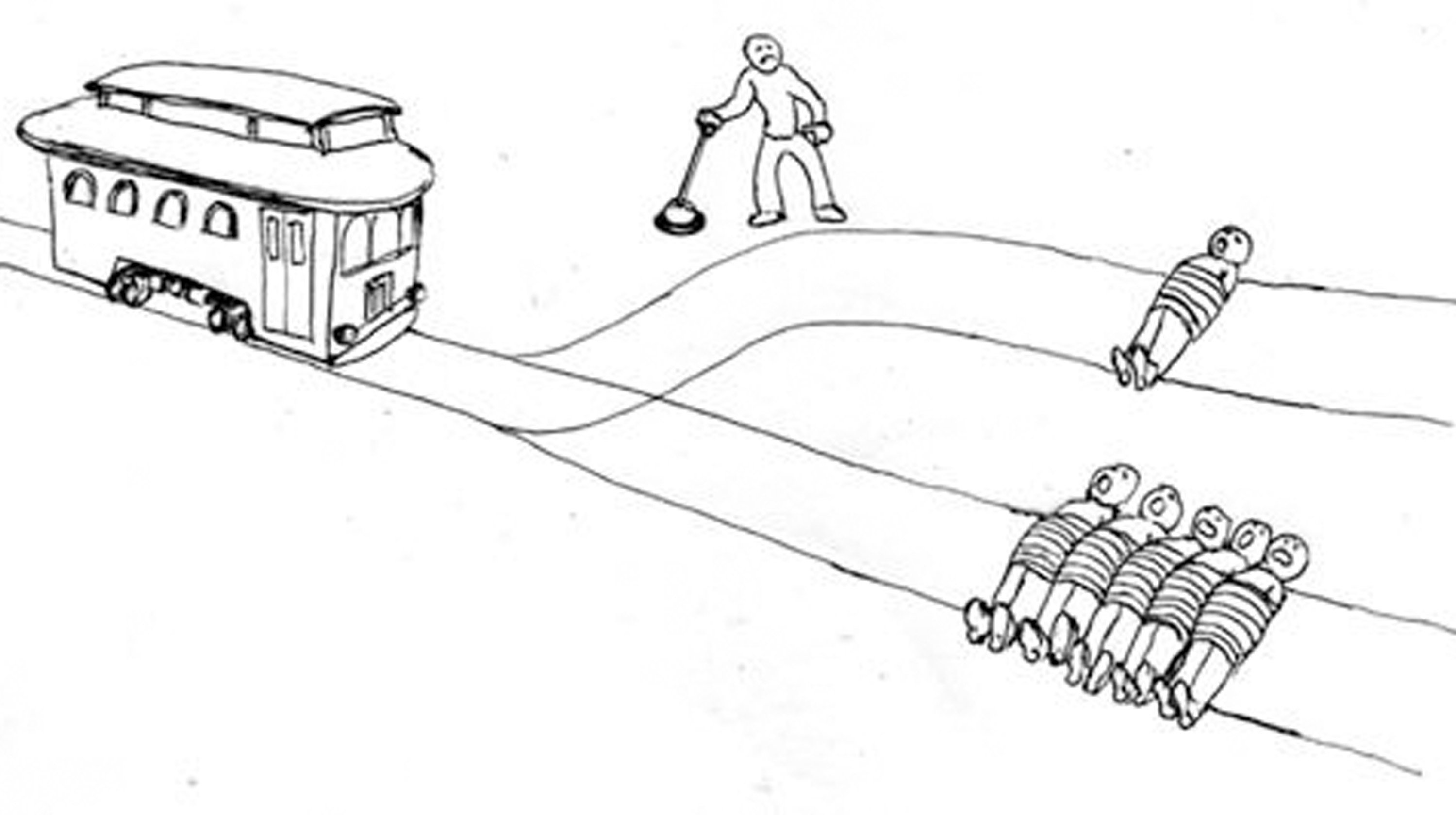

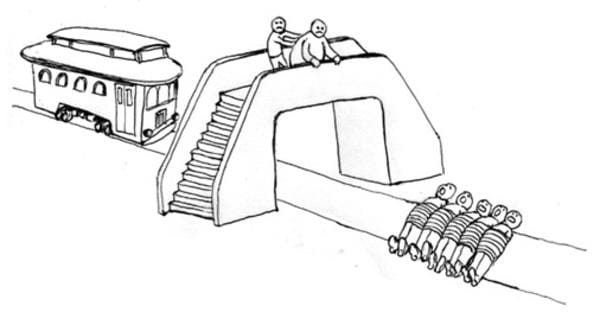

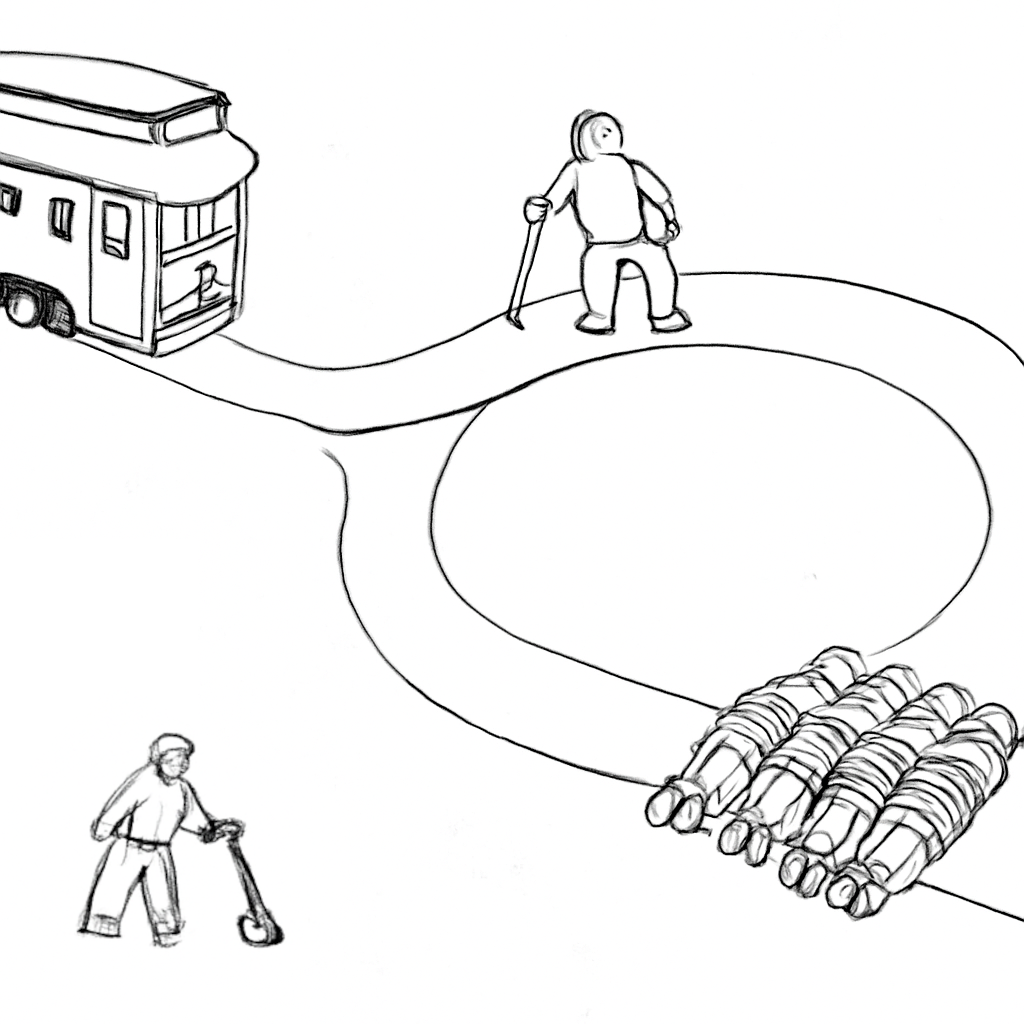

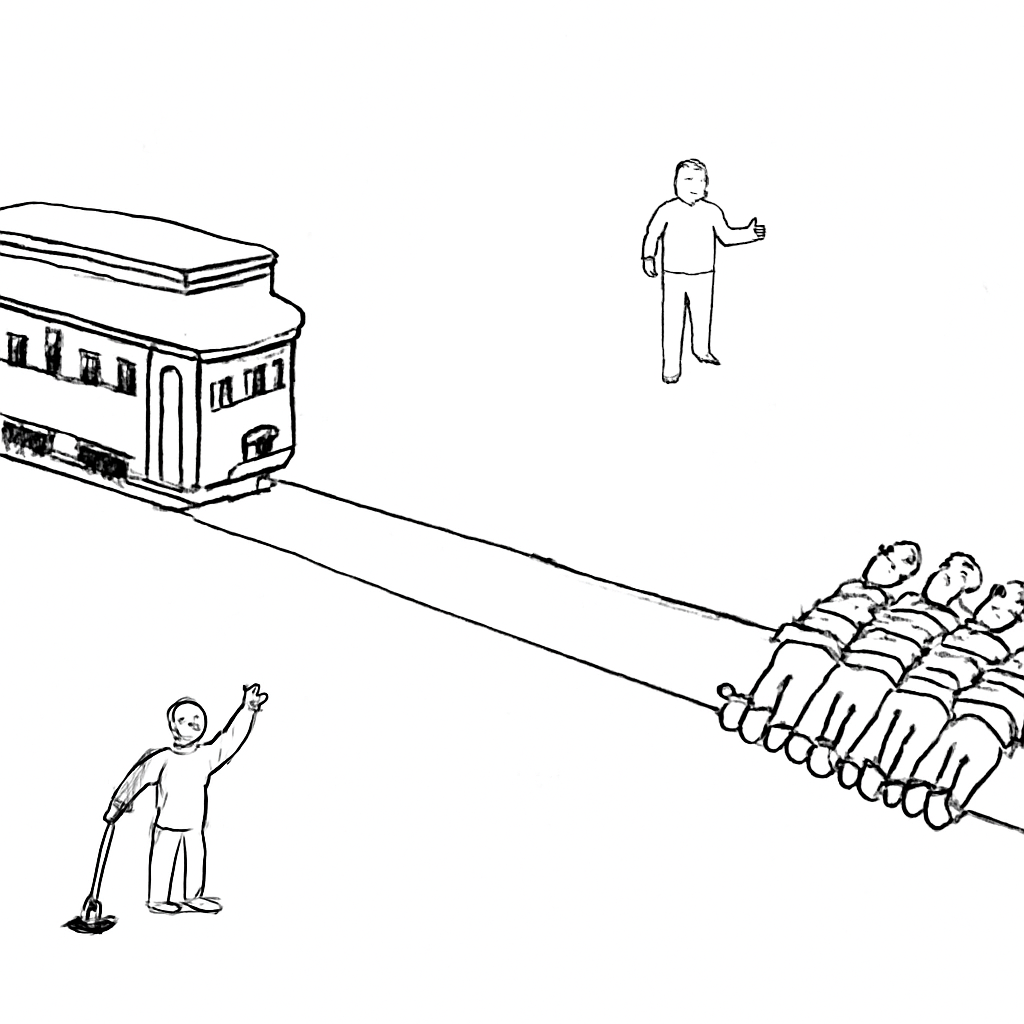

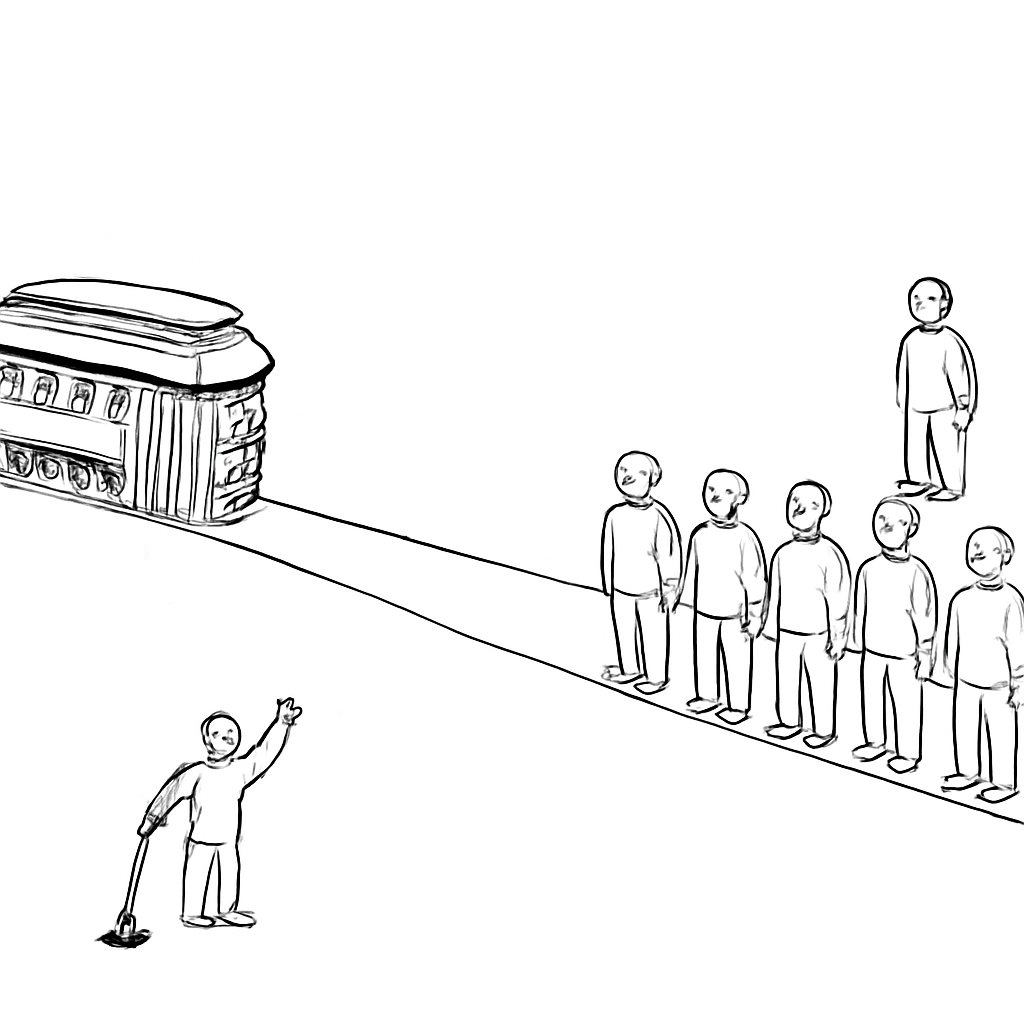

Trolleys

What does Railton want us to take away from this?

“How would it feel to perform this action? Could I actually see myself doing it? What kind of person would perform it? What would others think, and could I face them” (Railton 2020, 18)

What’s good enough?

What do you need to learn in order to be a moral agent?

Is it sufficient simply to have motivation?

Or, further, must you be motivated to attend to features of social significance?

- Must you be able to generalize, e.g., to tell what is fair across a variety of scenarios?

Learning what, learning why

Does it matter only that you are motivated, or also how (i.e., how similarly to people) you are motivated?

How, then, might artificial systems come to be appropriately sensitive to ethical concerns? (Railton 2020)

- We can’t all be selfish!

References

Railton, Peter. 2020. “Ethical Learning, Natural and Artificial.” In Ethics of Artificial Intelligence, edited by S. Matthew Liao. Oxford University Press. https://doi.org/10.1093/oso/9780190905033.003.0002.