Aping our way to the moral high ground

Jared Moore and David Gottlieb

Objective

To explain to ourselves the wonderful achievement of our quotidian cooperation

Burning House

You’re on your way home from a hard day’s work at the station. At first you tell yourself it is nerves, just smoke from the fires you’d been inhaling all day. After all, you’d made it a game with the kids: how to open the flue, where to fetch water, what with you going at it alone now. You start to feel it next. No, it must be the long walk home that has you flushed. But then you see it, dancing in its awesome fury right there above your neighbor’s oak. Then you’re running, slamming through the door, leaping up stairs to your apartment. You barely notice as your buddies’ engine sidles up, them pouring into the collapsing structure, strangers wailing.

Who do you save first?

(Choices: strangers, buddies, kids.)

Sympathy

reciprocal altruism

In this view, emotional reciprocity is most accurately characterized as mutual investments among interdependent friends, who help one another not in order to pay back past acts but in order to invest in the future (Tomasello 2016)

Cheney and Seyfarth (2008)

natural selection has favored individuals who develop theories of social life. (Cheney and Seyfarth 2008, 117)

mutualism

Tomasello (2019)

Chimps let you cheat!

Namely, they would let you play “demand-9” on divide-the-dollar.

In all three studies the result was identical: subjects virtually never rejected any nonzero offers. Presumably they did not because they were not focused on anything like the fairness of the offer, only on whether or not it would bring them food (Tomasello 2016)
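To make the contrast concrete, here is a minimal sketch of an ultimatum-style split. The pie of 10 units, the 30% rejection threshold, and all function names are illustrative assumptions, not details from the studies. A pure payoff maximizer accepts any nonzero offer, so "demand-9" succeeds against it; a fairness-minded responder pays a cost to reject.

```python
# Hypothetical one-shot ultimatum game over a pie of 10 units.
# All numbers and names here are illustrative assumptions.
PIE = 10


def payoff_maximizer_accepts(offer: int) -> bool:
    """Accept anything that beats the zero payoff of rejecting."""
    return offer > 0


def fairness_minded_accepts(offer: int, min_share: float = 0.3) -> bool:
    """Reject offers below an assumed share of the pie, even at a cost."""
    return offer >= min_share * PIE


def play(demand: int, accepts) -> tuple[int, int]:
    """Return (proposer, responder) payoffs when the proposer demands `demand`."""
    offer = PIE - demand
    return (demand, offer) if accepts(offer) else (0, 0)


print(play(9, payoff_maximizer_accepts))  # proposer keeps 9, responder takes 1
print(play(9, fairness_minded_accepts))   # offer rejected, both get nothing
```

On this toy model, the responder described in the quote behaves like `payoff_maximizer_accepts`: only the food matters, not the split.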

“Sharing”

http://www.becoming-human.org/public/html/chapter-8-1-v1.phtml

“Sharing”

http://www.becoming-human.org/public/html/chapter-8-1-v5.phtml

Perspective taking

Cheney and Seyfarth (2008)

Individual intentionality

This doesn’t give us morality as we know it. It doesn’t give us fairness.

The participants are not working together so much as they are using one another as “social tools” to maximize their own gains. (Tomasello 2019)

| | Cooperation (in the context of competition) |
|---|---|
| Prosociality | Sympathy |
| Cognition | Individual Intentionality |
| Social interaction | Dominance |
| Self-Regulation | Behavioral Self-Regulation |
| Rationality | Individual Rationality |

Who do we save in the fire?

Tomasello (2016)

Why aren’t chimps moral agents?

They can tell what other chimps are thinking. They can be motivated to help each other. They can recognize each other’s emotions. They can control their impulses.

So what gives?

Fairness

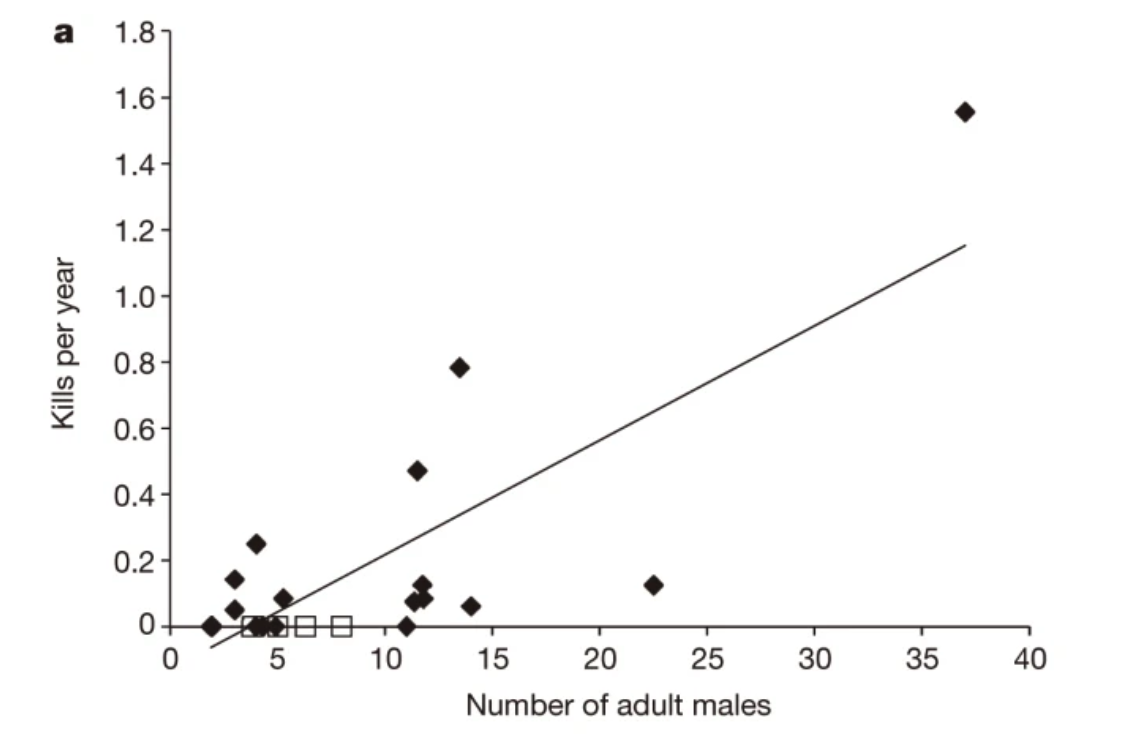

Are humans violent?

Chimpanzees are around 30 times more likely to die from homicide than the “most violent” humans.

Wilson et al. (2014)

Force is a physical power, and I fail to see what moral effect it can have (Jean-Jacques Rousseau: 1762/1968, p. 52).

Quoted in Tomasello (2016)

How did cooperation get started?

I wonder what other, more convincing evidence Tomasello could use to show how primitive humans arrived at cooperation. (Eden)

The benefits of cooperation

[In a foraging society] once every 17 years, caloric deficits for nonsharers would fall below 50 percent of what was needed 21 days in a row, a recipe for starvation. By pooling their risk, the proportion of days people suffered from such caloric shortfalls fell from 27 percent to only 3 percent. (Hrdy 2009)

Tomasello describes how scavenging the kills of lions and hyenas is a “stag hunt.”
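For readers who have not met the stag hunt, a small sketch may help. The payoff numbers below are assumed for illustration (they are not Tomasello's): "stag" stands for scavenging the carcass together, "hare" for foraging alone. The code checks every pair of actions for a pure-strategy Nash equilibrium, meaning neither player gains by switching alone.

```python
from itertools import product

# Illustrative payoffs (assumed): (row player, column player).
PAYOFFS = {
    ("stag", "stag"): (4, 4),  # both cooperate on the big prize
    ("stag", "hare"): (0, 3),  # the lone cooperator goes hungry
    ("hare", "stag"): (3, 0),
    ("hare", "hare"): (3, 3),  # both play it safe
}
ACTIONS = ("stag", "hare")


def is_nash(a: str, b: str) -> bool:
    """True if neither player gains by unilaterally switching actions."""
    mine, yours = PAYOFFS[(a, b)]
    row_ok = all(PAYOFFS[(alt, b)][0] <= mine for alt in ACTIONS)
    col_ok = all(PAYOFFS[(a, alt)][1] <= yours for alt in ACTIONS)
    return row_ok and col_ok


equilibria = [pair for pair in product(ACTIONS, ACTIONS) if is_nash(*pair)]
print(equilibria)
```

With these payoffs both (stag, stag) and (hare, hare) are stable. Cooperation pays best but only if you can trust your partner to show up, which is why the game is a useful model of the coordination problem.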

Hrdy (2009)

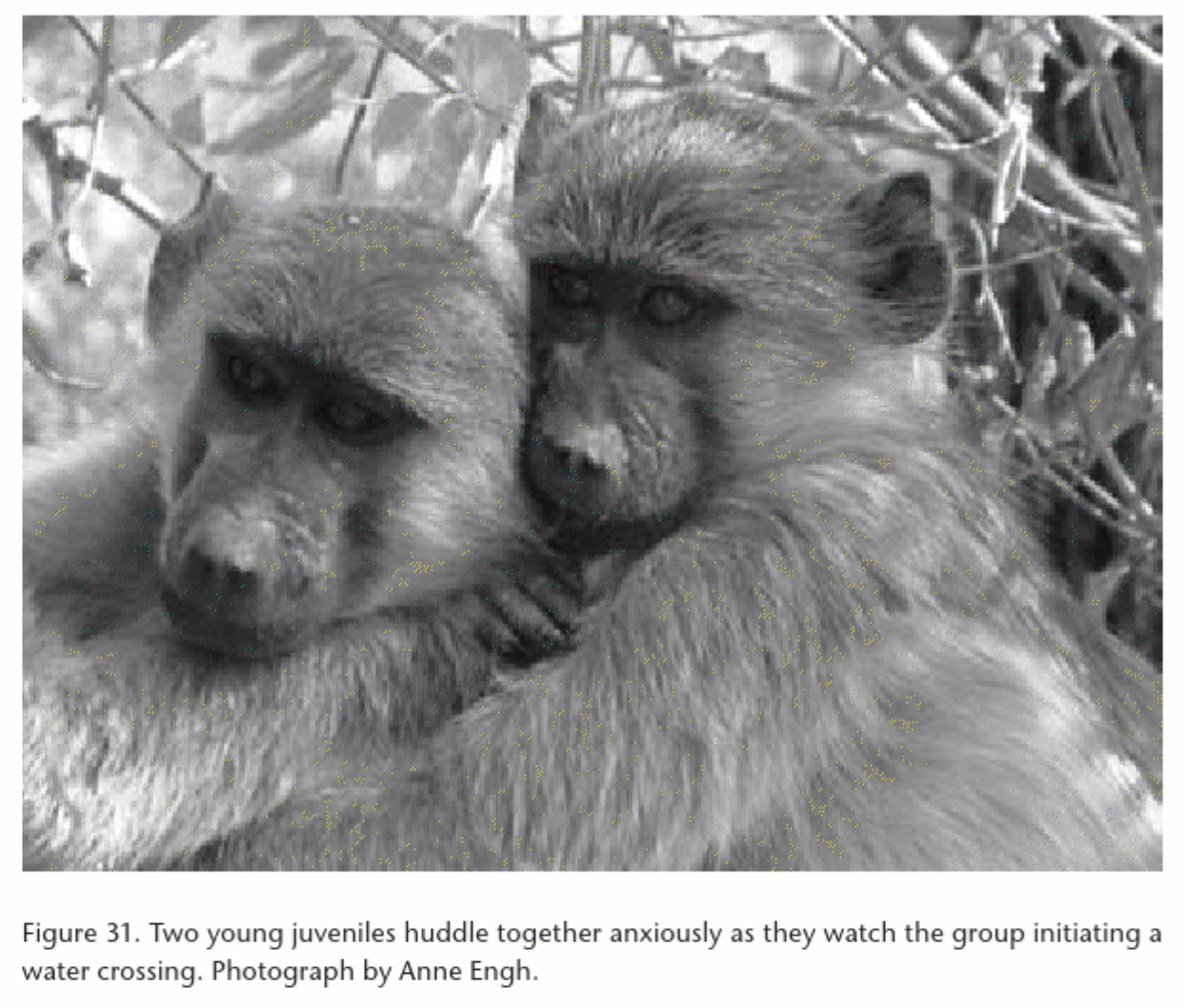

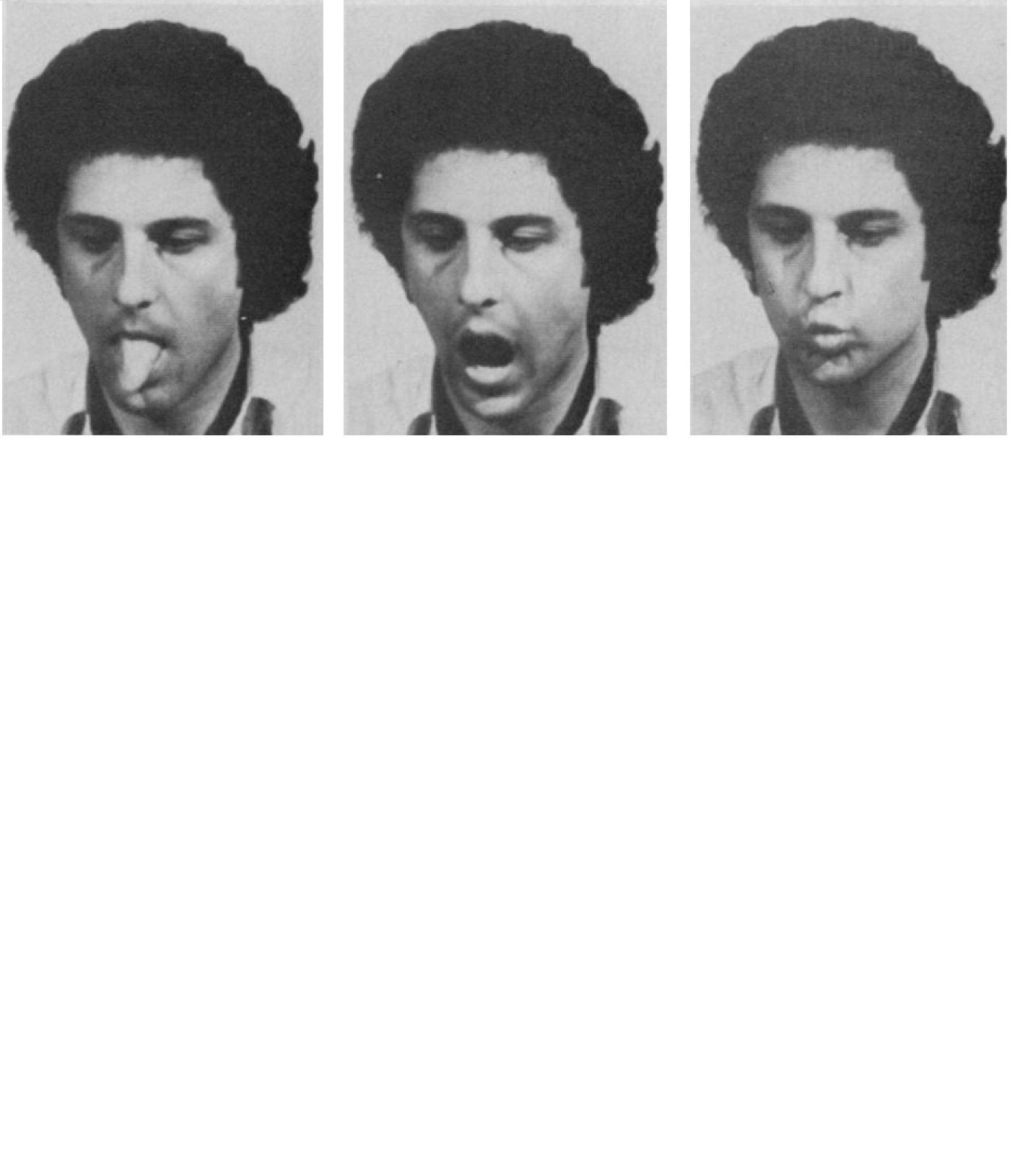

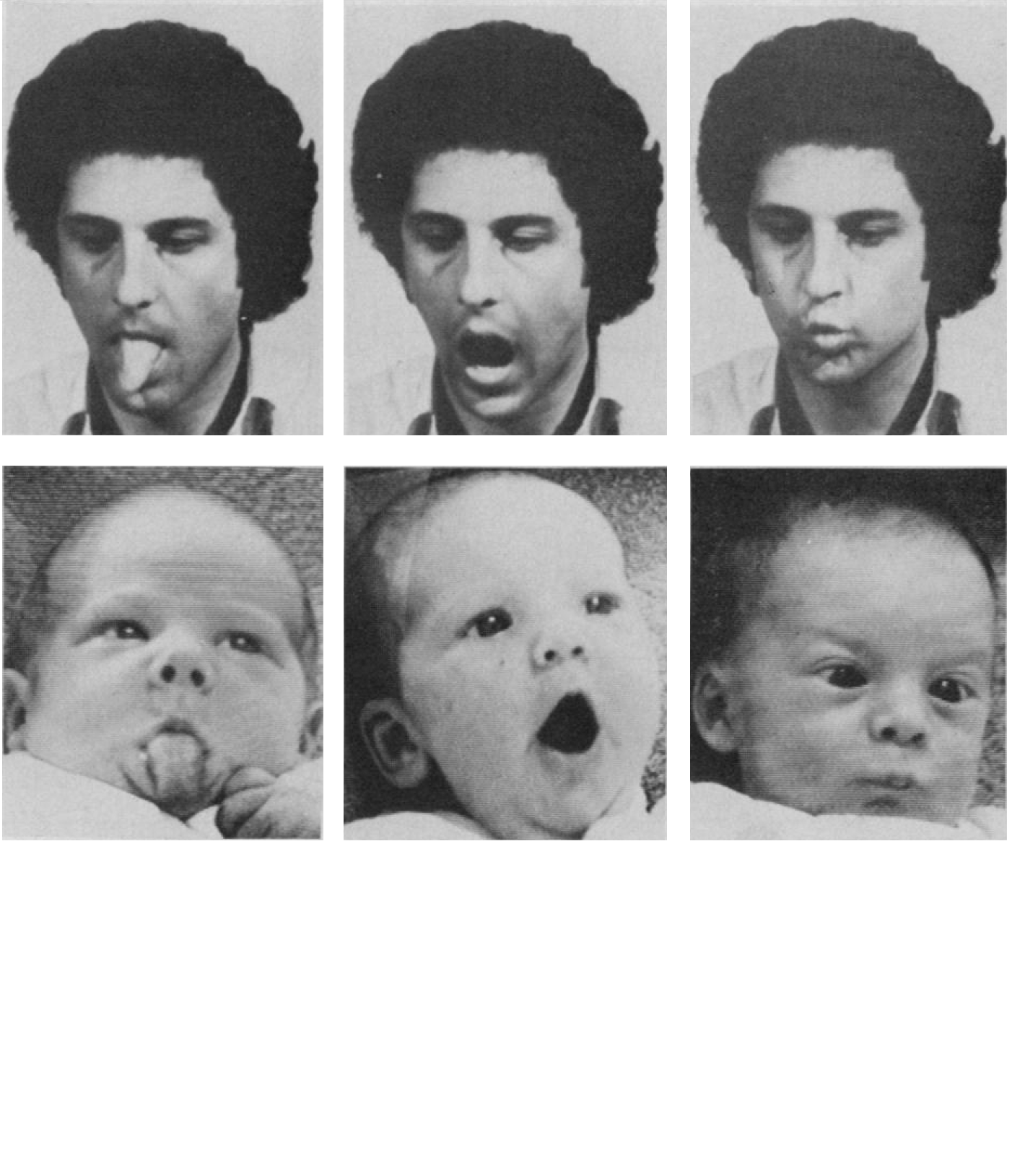

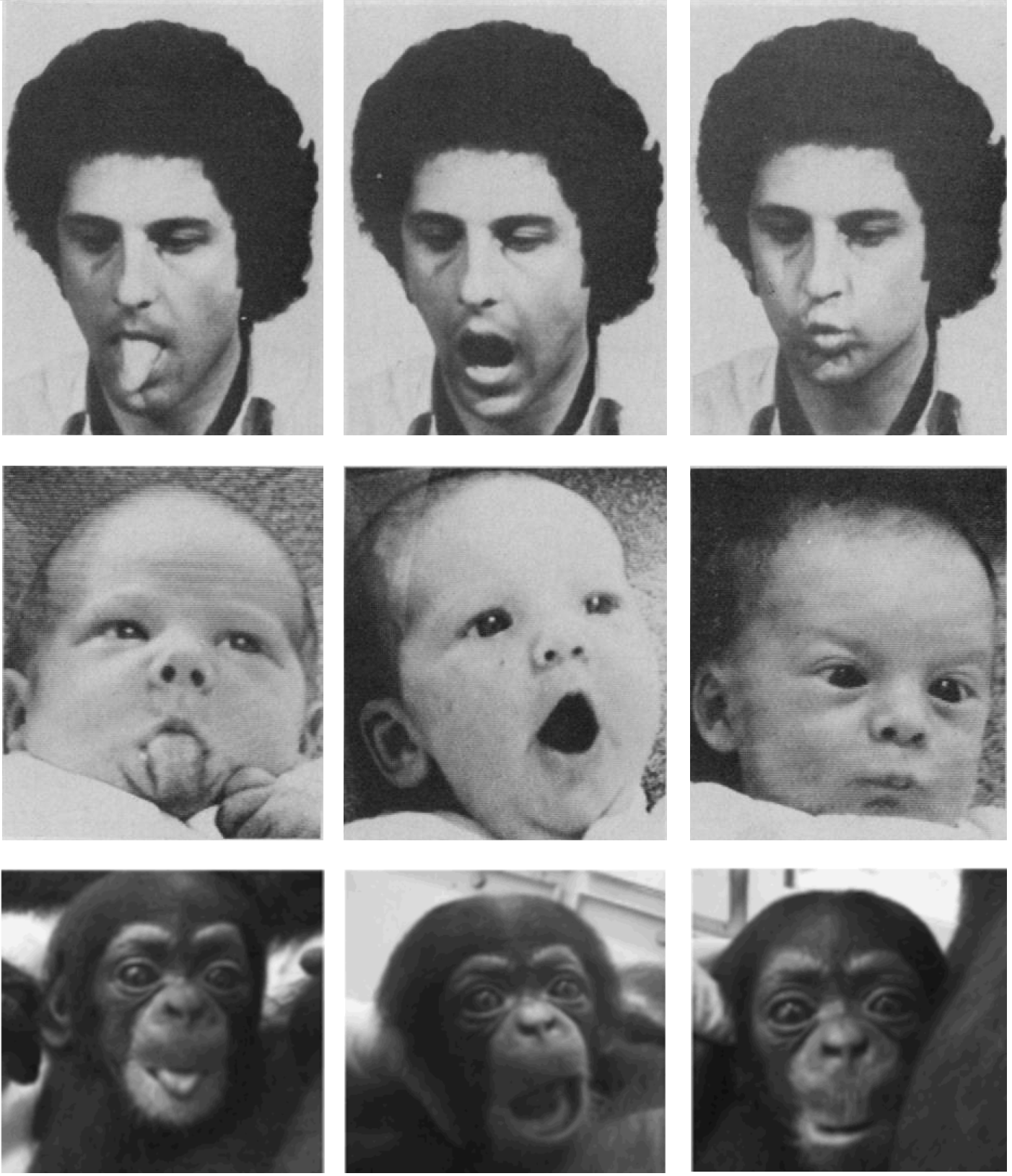

Imitation – Cooperative Childcare

A composite of Meltzoff and Moore (1977) and Myowa‐Yamakoshi et al. (2004)

Sympathy and Perspective Taking

How did you feel when watching that video?

What happened?

“Smithian” sympathy: How would I feel if I were in your shoes?

http://www.becoming-human.org/public/html/chapter-3-3-v2.phtml

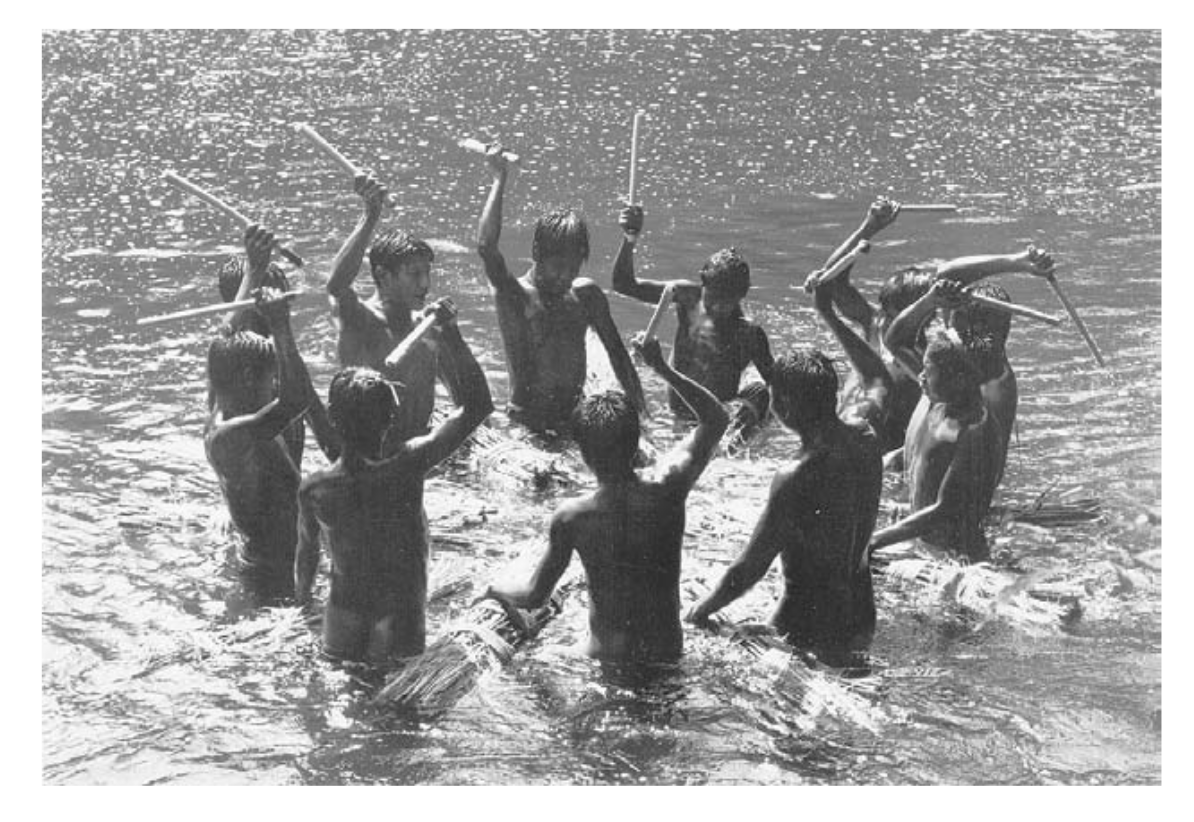

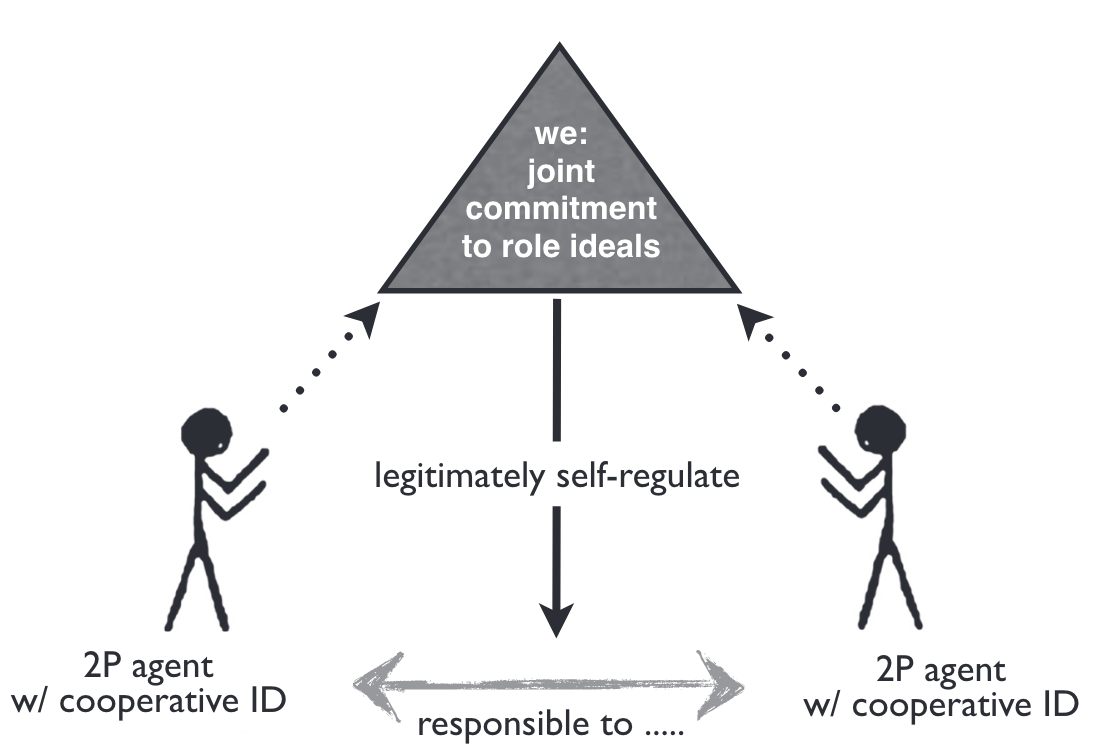

Joint Commitment

Notice the lack of violence!

It’s a good idea to cooperate with other cooperators

This is a world without language. I can’t say, “don’t play with him, he isn’t nice.” Besides your own experiences, you don’t have a sense of who would be good to gather food with versus who wouldn’t.

Hence you don’t stand to gain by being unfair. (Because then they won’t cooperate with you later and you need all the food you can get.)
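The arithmetic behind this point can be sketched as a toy model. Everything here is an assumption made for illustration: each joint hunt yields 1 unit to split, a partner tolerates any split up to one half and leaves for good after a larger take, and foraging alone yields much less.

```python
def lifetime_food(share_taken: float, rounds: int = 10, solo_yield: float = 0.2) -> float:
    """Total food over `rounds`, under the toy assumptions stated above.

    share_taken: fraction of each joint hunt you keep for yourself.
    solo_yield:  assumed food per round once your partner has left.
    """
    total = 0.0
    partnered = True
    for _ in range(rounds):
        if partnered:
            total += share_taken
            if share_taken > 0.5:  # partner walks away after an unfair split
                partnered = False
        else:
            total += solo_yield
    return total


fair = lifetime_food(0.5)    # stays partnered every round
greedy = lifetime_food(0.9)  # one big take, then forages alone
print(fair > greedy)
```

Under these assumptions the greedy forager wins the first round and loses the lifetime. The conclusion depends on the assumed numbers: if solo foraging paid nearly as well as collaboration, cheating would no longer be costly, which is why obligate collaboration matters to the argument.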

Rational agents feel instrumental pressure to act toward their goals (desires) given their perceptions (beliefs), so each partner in a collaboration feels instrumentally rational pressure to help her partner as needed to further their joint enterprise (Tomasello 2016)

No free riding!

You can’t say you’re one of us if you would do that!

“we” was a moral force because both partners considered it legitimate, based on the fact that they had created it themselves specifically for purposes of self-regulation, and the fact that both saw their partner as genuinely deserving of their cooperation. (Tomasello 2016)

Notice the Kantianism here! We are applying a maxim. We are treating each other as ends.

Recall:

How many times can you insult your friends until you become a bully?

Tomasello (2016)

| | Cooperation (in the context of competition) | Second-Personal Morality (obligate collaborative foraging w/ partner choice) |
|---|---|---|
| Prosociality | Sympathy | Concern |
| Cognition | Individual Intentionality | Joint Intentionality - partner equivalence - role-specific ideals |
| Social interaction | Dominance | Second-Personal Agency - mutual respect & deservingness - 2P (legitimate) protest |
| Self-Regulation | Behavioral Self-Regulation | Joint Commitment - cooperative identity - 2P responsibility |
| Rationality | Individual Rationality | Cooperative Rationality |

Who do we save in the fire?

Tomasello (2016)

Do we really have a choice?

[Tomasello says] “the ultimate causation involved in evolutionary processes is independent of the actual decision making of individuals seeking to realize their personal goals and values”. [E.g. with] sex, “whose evolutionary raison d’être is procreation but whose proximate motivation is most often other things”, such as pleasure. […] [But] our proximate motivation for sex, i.e. pleasure, is caused by evolutionary selection. We didn’t choose to enjoy sex; we enjoy sex because evolution made it pleasurable. (Shreyas)

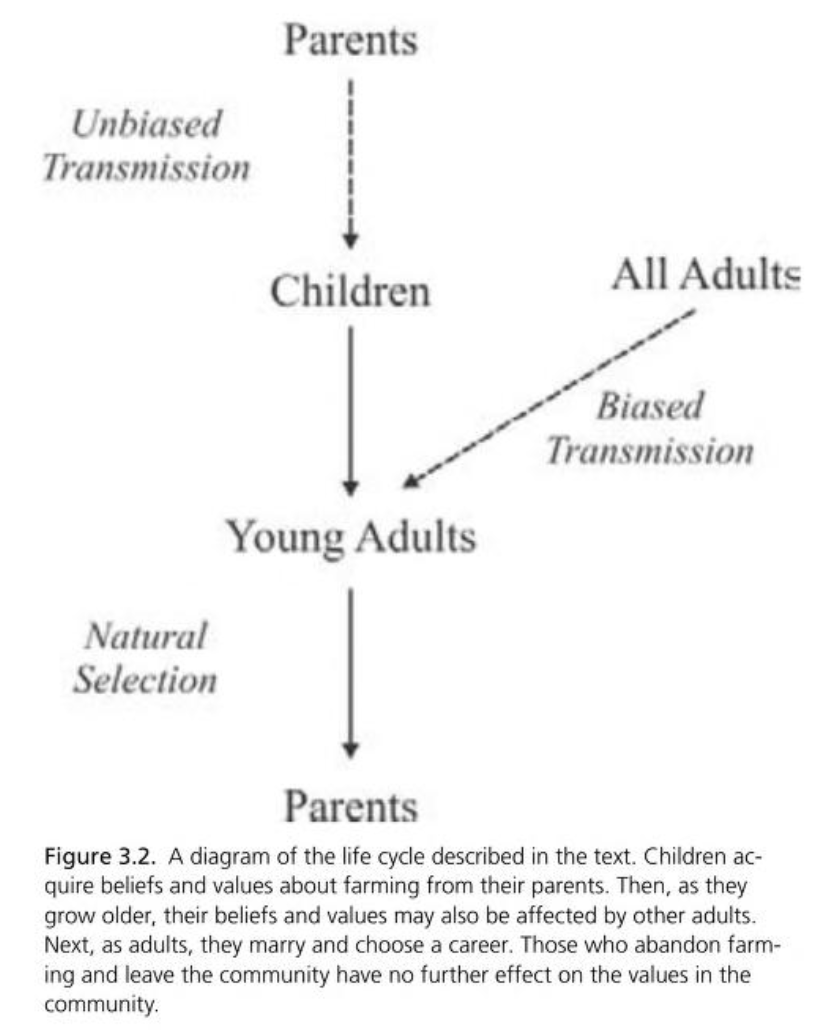

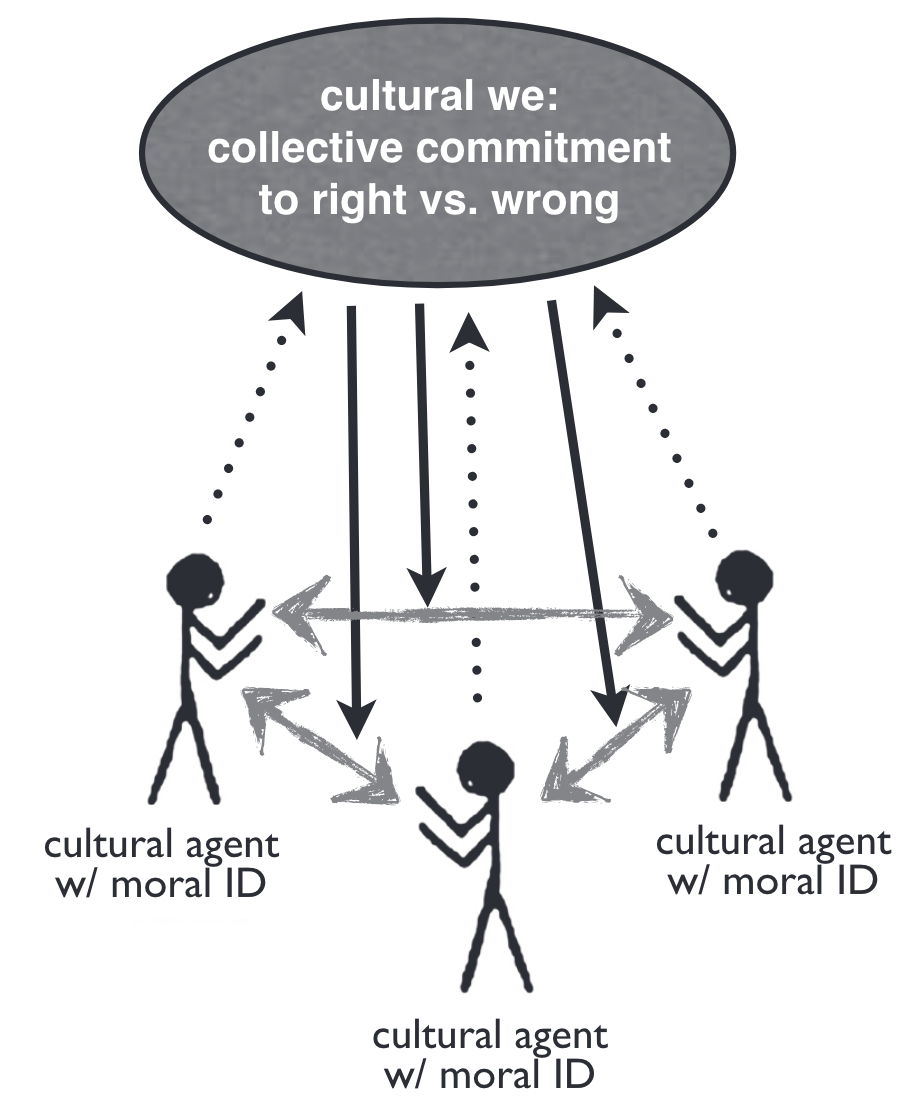

Culture (third-person morality)

Tomasello (2019)

Richerson and Boyd (2005)

Tomasello (2016)

| | Cooperation (in the context of competition) | Second-Personal Morality (obligate collaborative foraging w/ partner choice) | “Objective” Morality (life in a culture) |
|---|---|---|---|
| Prosociality | Sympathy | Concern | Group Loyalty |
| Cognition | Individual Intentionality | Joint Intentionality - partner equivalence - role-specific ideals | Collective Intentionality - agent independence - objective right & wrong |
| Social interaction | Dominance | Second-Personal Agency - mutual respect & deservingness - 2P (legitimate) protest | Cultural Agency - justice & merit - third-party norm enforcement |
| Self-Regulation | Behavioral Self-Regulation | Joint Commitment - cooperative identity - 2P responsibility | Moral Self-Governance - moral identity - obligation & guilt |
| Rationality | Individual Rationality | Cooperative Rationality | Cultural Rationality |

Who do we save in the fire?

Tomasello (2016)

Are we convinced by this account?

You only have standing to call someone out or feel obligated to them because you’ve already formed a “we” together through collaboration. That makes sense for partners, friends, or people in shared circles, but what about obligations to people you’ve never interacted with, such as strangers, future generations, or people outside your group entirely? (Jolie)

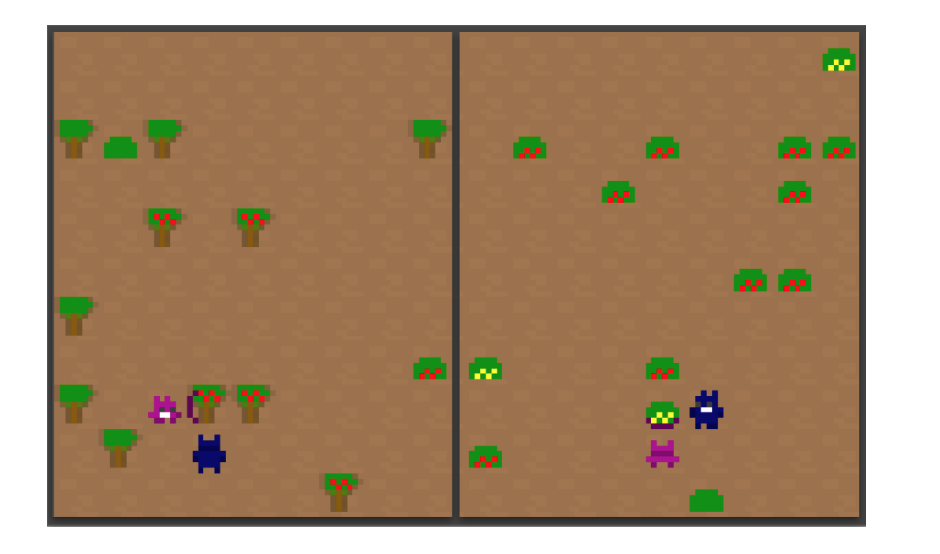

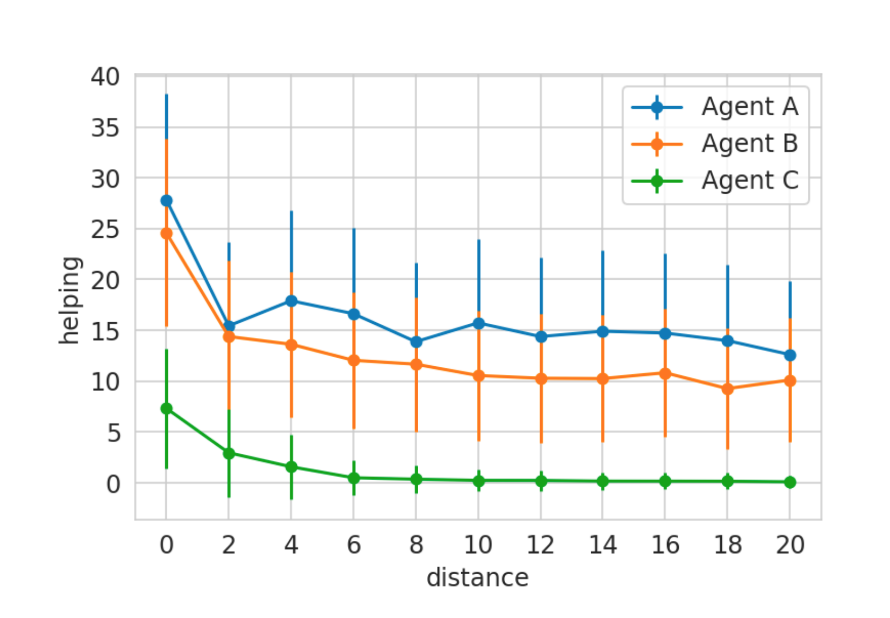

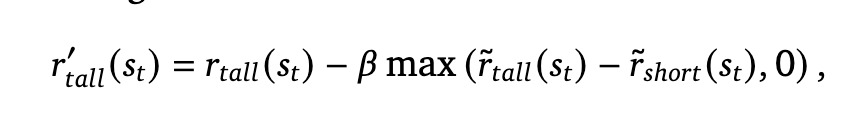

Mao et al. (2023)

Tomasello quotes from Hume that “individuals who have no need of others have no need for fairness and justice” (38). LLMs have no survival needs and depend on nothing because they don’t have “wants”. Can there be moral motivation without interdependence? (Fiona)

Do our models have to satisfy tests to…

- gaze follow

- help and share

- adopt the perspective of another, of a culture

- etc.?

What would it mean to satisfy those tests?

Exit Ticket

Guilt trip

Think of a time that you established a joint commitment with someone you know (not a stranger). Perhaps you both decided to play tennis. You went to a party together. You collaborated on a group project.

Furthermore, try to think of a time when that commitment was broken. Did the commitment end cleanly? Did you or the other party ask to end it? If not, did one of you protest (“guilt trip”) the other?

What happened? How did you feel?