Never forget where you came from

Jared Moore and David Gottlieb

Parfit recap

Nutshell

- We normally think it is rational to care more about our own future wellbeing than other people’s.

- Implicitly, we normally think personal identity is what matters.

- Cases like the Combined Spectrum and the Branch Line show that personal identity can’t be what rationalizes our special concern for our future selves.

- Combined Spectrum shows that there are cases where it is an empty question whether a future person will be me: they’re not me but they’re not not me.

- Branch Line shows that there are future persons (copies of me) who aren’t me but with regard to whom I have just as much reason for special concern.

- Accordingly, personal identity is not what matters.

Nutshell

- If anything justifies our special concern for our own future selves, it’s psychological continuity and connectedness (Relation R).

- The Moderate View says: our special concern is justified by Relation R.

- The Extreme View says: our special concern is not justified.

- While personal identity is an absolute, all-or-nothing relation, Relation R is a graded relation that can hold more or less.

- Our own future selves become less and less R-related to us over time.

- We are related to other future people in ways similar to Relation R – e.g. by connections of shared memory and feeling.

- If anything justifies our special concern for our own future selves, it justifies a less-sharp distinction of concern between our future selves and other future people.

- We should rationally care more about other people than we normally do.

Smaller nutshell

- We naively think that the self is metaphysically deep.

- But it’s not.

- So “self-interest” doesn’t really make sense.

What about the biological self?

If the self is so unreal, how come trillions of organisms work with something like a “self” every day?

Parfit’s rejoinder:

Special concern for one’s own future would be selected by evolution. Animals without such concern would be more likely to die before passing on their genes. … [I]f some attitude has an evolutionary explanation, this fact is neutral. It neither supports nor undermines the claim that this attitude is justified. But there is one exception. This is the claim that, since we all have this attitude, this is a ground for thinking it justified. This claim is undermined by the evolutionary explanation. Since there is this explanation, we would all have this attitude even if it was not justified. (Parfit 1984, 308)

A reply to Parfit

- Parfit shows that personal identity is not a deep metaphysical reality that is robust across science fictional thought experiments.

- But what if it is (e.g.) a biological reality that is robust across realistic life events?

- When we are thinking about what is rational, …

- Why isn’t a theory that tells us what to do across realistic life events good enough?

- Why do we need one that tells us what to do in science fictional thought experiments?

- Especially if those thought experiments might be impossible?

Conceivable Selves

AI Teletransporter

It’s your first day as a crewmember of the famous Federation starship USS Enterprise! Time to report for duty by beaming aboard! As a reminder, this is how the transporter works. At the beginning of your journey, a computer scans your physical structure molecule-by-molecule. This process destroys your body. Then, a digital copy of the scan is sent to your destination. At your destination, a computer builds a new body that’s an exact copy of your original body. Then you can report for your exciting new duty! You’ve never been transported before. It’s your turn. Ready to come aboard?

Now imagine that “you” is some AI system.

How do our answers to the thought experiment change, if at all?

What’s the good of a self?

When you try to implement selves, you either find yourself already committed—or sometimes it’s just a reasonable next step—to building out some of the main features of personal identity over time. (Millgram 2025)

…

“Evolution, in animals, puts a premium on coherent action, and from a certain point onward, the way to act effectively as a self is to have a sense of oneself, as a unit of that kind. This sense of self is entirely tacit or implicit at first, but can become less tacit as the complexity of behavior continues to evolve.” (Godfrey-Smith 2020, pg. 259)

“The view I’m defending here does, in a way, agree that minds exist in patterns of activity, but those patterns are a lot less “portable” than people often suppose; they are tied to a particular kind of physical and biological basis.” (Godfrey-Smith 2020, pg. 270)

Real Teletransporters

The claim is that there is no teletransporter that can produce an exact replica.

- Maybe: That there is a difference that matters between the teletransporter when applied to some digital system and when applied to a biological system. (Hence our intuition on the previous thought experiment.)

Further: Any conceivable transformation that results in a psychological connection (a qualitative one), one might argue, maintains a physical connection (a numerical one).

And so personal identity matters, at least in this biological world.

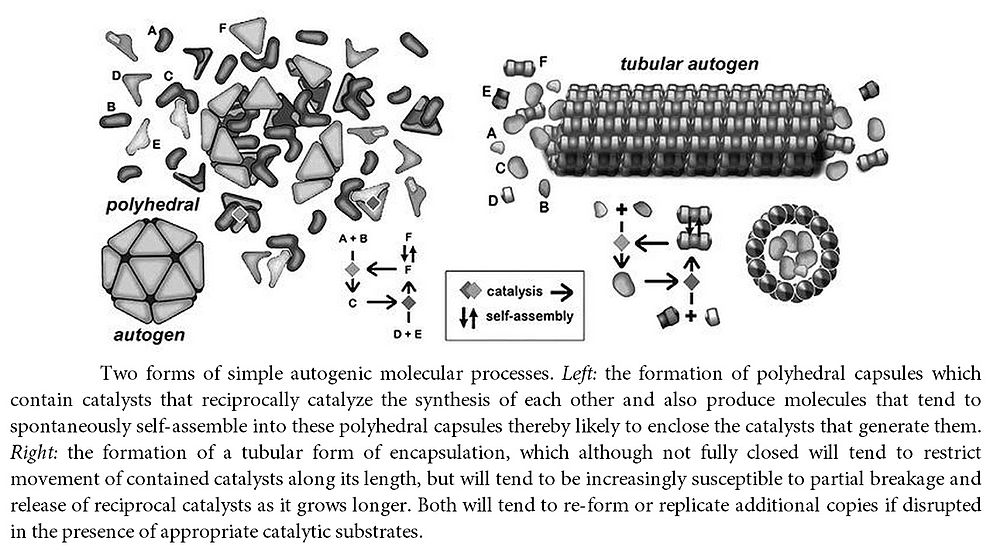

What counts as self organizing?

(As having a self)

(And does AI count?)

chemicals?

molecules?

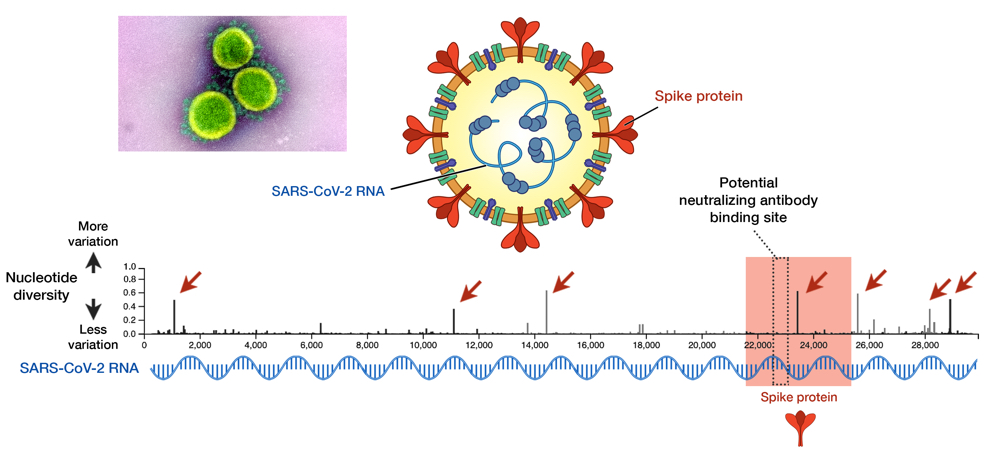

RNA?

single-cells?

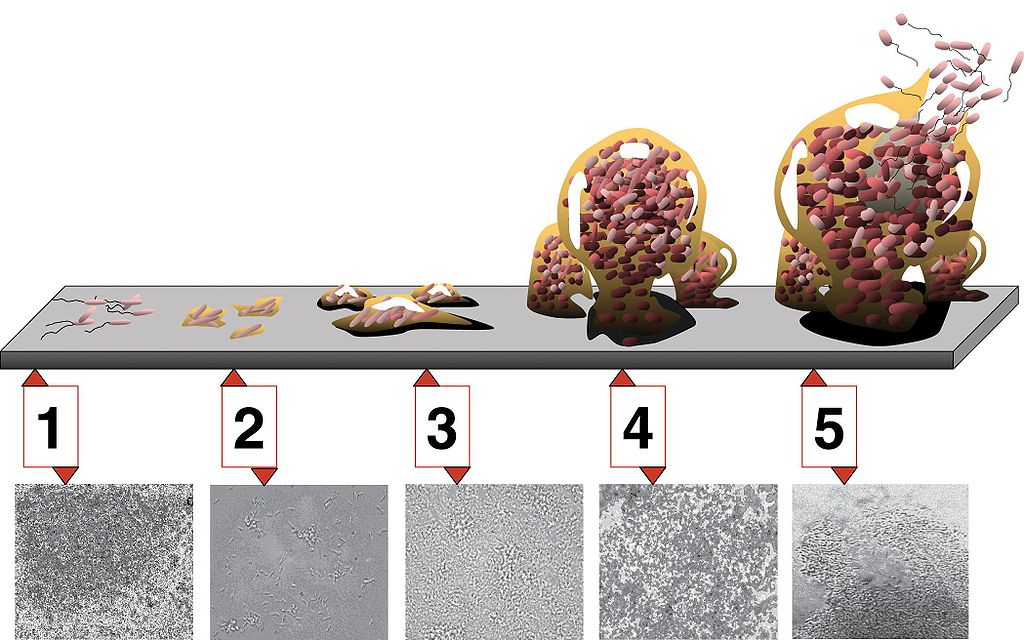

biofilm?

modular (bryozoan)?

unitary organisms / bodies (sponges)?

intercellular communication?

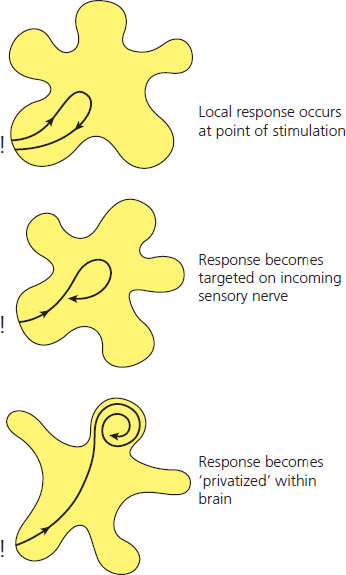

reafference?

What would a real teletransporter be?

In this way, we can take Godfrey-Smith to argue that the only way to really do something like the teletransporter is to play with the degree of similarity between the systems we’re considering.

- What are conceivable changes to the systems we’re considering?

Our challenge: what thought experiment is similar to the teletransporter but biologically possible?

Does this thought experiment license the same kind of conclusions that Parfit would want it to?

Does this tell us anything about whether AI systems have selves?

A real (?) self-splitter

(mathematically) (self) organizing

Primordial Soup

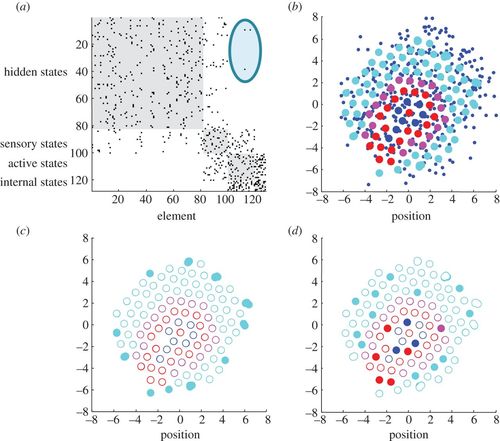

Light blue: hidden; Pink: sensory; Red: active; Blue: internal

Privatization of sensation

Humphrey, 2006

a) active (red) and hidden (light blue) states relate c) non-influential states d) slow subsystems

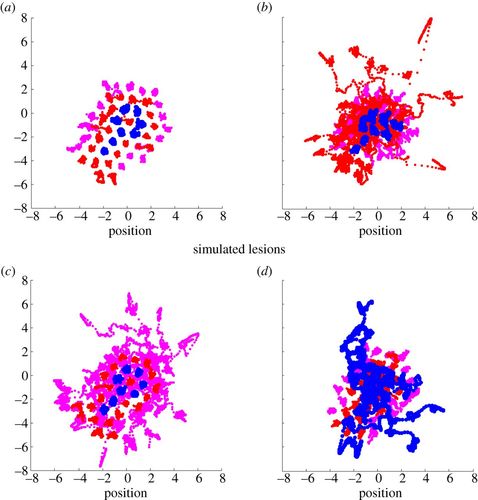

a) normal b) ablate active (red) c) ablate sensory (pink) d) ablate internal (blue)

Free Energy

“If biological systems must minimise their entropy, and entropy is average information, then it follows that they must keep the flow of information they process to a minimum.” (Solms 2021)
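To make "entropy is average information" concrete, here is a minimal sketch, assuming Shannon's definition for a discrete distribution (the function names and the two example distributions are illustrative, not from Solms). The information, or surprisal, of an outcome with probability p is -log2(p); entropy is the probability-weighted average of that quantity.

```python
import math


def surprisal(p):
    """Information content, in bits, of an outcome with probability p."""
    return -math.log2(p)


def shannon_entropy(probs):
    """Entropy in bits: the average surprisal over a discrete distribution.

    Outcomes with zero probability contribute nothing to the average.
    """
    return sum(p * surprisal(p) for p in probs if p > 0)


# Hypothetical example distributions over four sensory states.
uniform = [0.25, 0.25, 0.25, 0.25]  # every state equally likely
peaked = [0.97, 0.01, 0.01, 0.01]   # the system mostly stays in one state

print(shannon_entropy(uniform))
print(shannon_entropy(peaked))
```

On this reading, a system that keeps itself within a narrow range of states (the peaked case) has lower entropy than one that wanders over all states equally, which is one way to gloss the claim in the quotation.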

“Friston free energy is a quantifiable measure of the difference between the way the world is modeled by a system and the way the world really behaves.” (Solms 2021)
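One common way to write this quantity down is the variational free energy. The notation here is an assumption, not taken from the slides or from Solms: o stands for sensory observations, s for hidden states of the world, p(o, s) for the system's generative model, and q(s) for its current beliefs about the hidden states.

```latex
F[q, o]
  = \mathbb{E}_{q(s)}\big[\ln q(s) - \ln p(o, s)\big]
  = D_{\mathrm{KL}}\big[\,q(s)\,\|\,p(s \mid o)\,\big] - \ln p(o)
```

The second form follows from p(o, s) = p(s | o) p(o). Because the KL divergence is never negative, F is an upper bound on the surprisal -ln p(o), so a system that lowers F both brings its beliefs closer to the posterior and bounds how surprising its sensations are under its own model.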