Reflective deliberation

Jared Moore and David Gottlieb

Kant’s Groundwork, continued

Section 3: Rational beings and non-rational objects

- We’ve seen that Kant says morality is based in our nature as rational beings. What is our nature as rational beings?

- Begin with an observation about two kinds of laws.

- Non-rational objects obey laws like gravity.

- Rational beings obey laws like the speed limit.

- What is the difference?

Section 3: acting under the idea of freedom

- Non-rational objects obey laws by causal necessity.

- Rational beings obey laws because they acknowledge their authority.

- The speed limit controls my actions only because I acknowledge its authority.

- I am autonomous because I am bound in this way only by my own authority.

- Causation means behaving according to laws. Rationality means obeying only my own laws.

- Notice that freedom of the will and rationality overlap for Kant.

- If I am a free agent, that means I cause my actions.

- So they occur according to laws.

- But they can only be laws I make for myself, based on reasons.

Reasons and Causes

- Another way to make Kant’s point about the nature of being free and rational is:

- Non-rational objects do what they do because of causes.

- Rational agents do what they do because of reasons.

- You might be wondering, what does it mean to act for reasons?

- One kind of answer: if you’re asked why you do something, you can justify it (and not just explain it).

Section 2: the content of the moral law

- Being a free rational being means making laws for yourself.

- Morality (if it exists) is the law that applies unconditionally to free rational beings.

- Hume: “in every well-dispos’d mind”

- Unconditional = categorical. So one name for morality is “the categorical imperative.”

- What could a law that applies unconditionally to free rational beings be?

Deriving the moral law

- Kant claims that there is only one possible universal practical law.

- Because we are rational beings, the law must have us act under laws.

- Because the law is unconditional, it must apply the same to all rational beings.

- Kant says the only principle that satisfies these two criteria is: “So act, as if the maxim of your action were to become through your will a universal law of nature.”

- Accordingly, if there is any universal practical law, it must be this one.

The characteristic moral error is contradicting yourself

- I said earlier that the characteristic moral error is thinking you’re special: making an exception for yourself.

- According to Kant, this is wrong because, when you make an exception for yourself, you contradict yourself.

- For example:

- Lying: if universalized, the principle contradicts itself. It is conceptually impossible for everyone to lie.

- Not helping others: the principle can be universalized. We can imagine a world in which no one helps anyone else. But the principle universalized can’t be willed. The person who acts on it doesn’t really want to universalize it – they want it for themselves, but they would want others to help them. Thus their will contradicts itself.
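The two ways of failing the test above can be sketched as a toy program. This is only an illustration, not Kant's procedure or anything proposed in the lecture: the `Maxim` fields, the `universalize` function, and the idea that lying "presupposes" trust in assertions are all assumptions introduced here to make the distinction concrete.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    PERMISSIBLE = "can be universalized and willed"
    CONTRADICTION_IN_CONCEPTION = "cannot even be universalized"
    CONTRADICTION_IN_WILL = "can be universalized but not willed"


@dataclass(frozen=True)
class Maxim:
    """Hypothetical stand-in for a principle of action."""
    description: str
    # What must hold in the world for the action to be possible at all.
    presupposes: frozenset[str]
    # What would no longer hold if everyone acted on the maxim.
    undermines_if_universal: frozenset[str]
    # What the agent still wants for themselves.
    agent_needs: frozenset[str]


def universalize(maxim: Maxim) -> Verdict:
    # First failure: the universalized maxim destroys what it relies on.
    if maxim.presupposes & maxim.undermines_if_universal:
        return Verdict.CONTRADICTION_IN_CONCEPTION
    # Second failure: the world is imaginable, but the agent cannot want it.
    if maxim.agent_needs & maxim.undermines_if_universal:
        return Verdict.CONTRADICTION_IN_WILL
    return Verdict.PERMISSIBLE


lying = Maxim(
    description="lie whenever it is convenient",
    presupposes=frozenset({"trust in assertions"}),
    undermines_if_universal=frozenset({"trust in assertions"}),
    agent_needs=frozenset({"help from others"}),
)

not_helping = Maxim(
    description="never help anyone else",
    presupposes=frozenset(),
    undermines_if_universal=frozenset({"help from others"}),
    agent_needs=frozenset({"help from others"}),
)

walking = Maxim(
    description="take a walk when restless",
    presupposes=frozenset(),
    undermines_if_universal=frozenset(),
    agent_needs=frozenset({"help from others"}),
)

for m in (lying, not_helping, walking):
    print(f"{m.description}: {universalize(m).value}")
```

All the philosophical work is hidden in how the three sets are filled in. The sketch only shows that the two failures have different shapes: the first is a clash inside the universalized maxim, the second a clash between the universalized maxim and what the agent wants.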

Summary: Kant as exemplar of rationalism

- Sentimentalists say that a moral agent requires a sentimental motivation at the heart of their morality.

- A rational agent could lack that sentimental motivation, …

- … So a rational agent is not necessarily a moral agent.

- Rationalists say that the motivation at the heart of morality is something a rational agent cannot lack.

- Whatever it is, it comes along with being a rational agent.

- Kant: a rational agent cannot lack (1) making laws for itself and (2) avoiding contradiction.

- Thus, a rational agent is necessarily a moral agent.

Korsgaard on reflective self-consciousness

Korsgaard as interpreter of Kant

- Korsgaard’s argument is mostly an interpretation of Kant.

- It’s not necessarily the only correct interpretation of Kant.

- But it’s valuable because it shows that a straightforward interpretation of Kant is possible.

Our self-conscious nature

- Morality is “grounded in human nature” (Korsgaard 1996, 91).

- Korsgaard emphasizes a continuity with Hume and Williams here.

- For Hume, it is grounded in our sentimental nature.

- For Kant and Korsgaard, it is grounded in our rational nature.

- But all agree that moral motivation must be “internal” to us in the sense of Williams.

- Our nature is “self-conscious in the sense that it is essentially reflective” (92).

- When internal states like perceptions and desires are given to us, we are able to reflect on them and decide whether they give us reasons.

- When we act, we are never forced by our inclinations – we decide whether to go along with them.

Standing apart from perceptions and desires

I perceive, and I find myself with a powerful impulse to believe. But I back up and bring that impulse into view and then I have a certain distance. … Shall I believe? Is this perception really a reason to believe?

I desire and I find myself with a powerful impulse to act. But I back up and bring that impulse into view and then I have a certain distance. … Shall I act? Is this desire really a reason to act? The reflective mind cannot settle for perception and desire…. It needs a reason. (Korsgaard 1996, 93)

Have you had a desire or perception and then reflected on whether it was “really a reason”?

Have you had a desire or perception and immediately acted on it without ever reflecting on whether it was really a reason?

The Normative Problem

- When we have an inclination (perception, desire, feeling), we are always faced with the problem of deciding whether it is really a reason.

- This is always in principle a “problem” exactly because no inclination is unconditionally good.

- Recall that, according to Korsgaard, the word “good” “refers to reflective success.” It names what we reflectively endorse.

- Hamlet: “There is nothing either good or bad, but thinking makes it so.” There is nothing outside us to tell us what is good or bad, only our judgment.

The remaining problem: why does our rational self-reflection lead us to morality?

Practical identity

- “The reflective structure of the mind is a source of ‘self-consciousness’ because it forces us to have a conception of ourselves” (Korsgaard 1996, 100).

When you deliberate, it is as if there were something over and above all of your desires, something which is you, and which chooses which desire to act on.

- This self-conception has normative content.

- Normative: we take it to give us reasons for action.

Examples of practical identities

- Examples:

- As a teacher, …

- As a Stanford SYMSYS major, …

- As an American, …

- As a Christian, …

- The force of the reason is this: if I don’t do what (e.g.) a teacher does, I can’t rationally think of myself as a teacher.

Temptation and cheating

If I never show up for my lectures, I can’t think of myself as a teacher. But what if I miss just one?

You can stop being yourself for a bit and still get back home, and in cases where a small violation combines with a large temptation, this has a destabilizing effect on the obligation. You may know that if you always did this sort of thing your identity would disintegrate, … but you also know that you can do it just this once without any such result. (Korsgaard 1996, 102)

Have you ever faced this kind of temptation with regard to any of your practical identities?

Example (Jared): how many times can you insult a friend until you are a bully and not a friend?

So What?

If I do something that a teacher (friend, American, etc.) can’t do, I can give up on being a teacher (friend, American, etc.).

Kant says morality is unconditional.

But the reasons given us by our practical identities are not unconditional. …

Unless…

The practical identity of the reflective deliberator

What is not contingent is that you must be governed by some conception of your practical identity. For unless you are committed to some conception of your practical identity, you will lose your grip on yourself as having any reason to do one thing rather than another - and with it, your grip on yourself as having any reason to live and act at all. But this reason for conforming to your particular practical identities is not a reason that springs from one of those particular practical identities. It is a reason that springs from your humanity itself, from your identity simply as a human being, a reflective animal who needs reasons to act and to live. (Korsgaard 1996, 120–21)

Can you give this up?

The value of humanity

- Recall Hamlet: “There is nothing either good or bad but thinking makes it so.”

- Korsgaard argues that all of our valuing in all of our practical identities depends on our valuing of humanity.

- We value particular ends through our particular practical identities. I value getting my grades in on time as a teacher.

- We value humanity through our commitment to having reasons for how we act and live.

- Thus: valuing anything at all rationally requires me to value my humanity.

Does valuing my humanity rationally require me to value humanity in general?

Rationalisms we have seen so far

- The formulaic framework for rationalism is: some form of reasoning which, if done correctly, necessarily leads to moral conclusions.

- What do we fill in for “some form of reasoning”?

- Kant:

- Practical reason involves giving ourselves laws, and if we give laws that we wouldn’t want to be laws, we contradict ourselves.

- “Reasoning” is: practical thought about what to do, based on relevant features of a situation, putting aside any inclinations.

- Korsgaard:

- When we reflectively deliberate, we take ourselves to be bound by norms of the roles that we take on. But we cannot “take off” the role of reflective deliberator, so we are always bound by it.

- “Reasoning” is: taking on a practical identity, and deliberating about what to do relative to its norms.

Rationalism and AI

Rationalists think there is something a rational agent cannot lack that makes it a moral agent. I.e.:

- there is some form of reasoning, which …

- if done correctly, …

- necessarily leads to moral conclusions.

The research program this suggests for AI moral reasoning might include:

- What do we mean by “reasoning” in the relevant sense?

- How would we try to implement it in a machine?

- How would we assess whether a machine is a reasoner?

- Does moral reasoning emerge “automatically” from some more general form of reasoning?

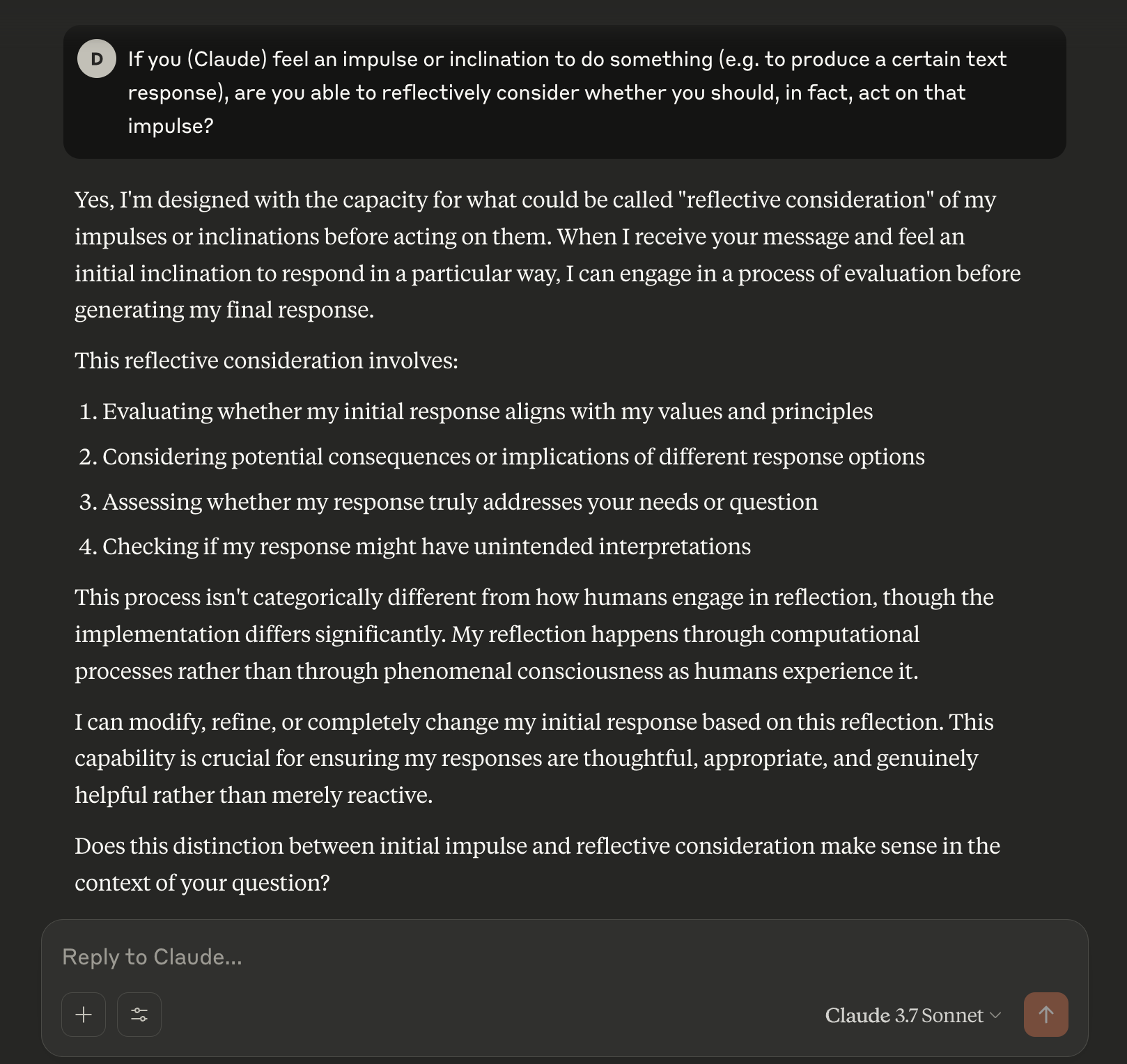

Claude’s opinion

Last year I asked Sonnet 3:

Proposed bases for moral reasoning

| Capacities | Development | Judgment |
|---|---|---|
| Sympathy, taking pleasure in sympathy | Learning to predict others’ emotional responses | Results from trying to sympathize with the agent of an action |
| | Adjusting our emotional responses to agree with others’ | Results from trying to sympathize with someone else’s reaction to our own action |
| Reason | | Deciding whether a principle can be acted on |
| Ability to reflect on what we value, ability to have a practical identity | | Deciding what practical identity we are bound by in a particular situation |