Alignment

Jared Moore and David Gottlieb

Alignment

Nutshell

Will we end up making a moral agent by aligning AI?

Coherent Extrapolated Volition

Should the AI assistant follow the user’s instructions when doing so could harm the user themselves, or when these instructions are based on mistaken factual information? Might it not be better, in fact, for the assistant to learn the user’s preferences or values […] ? (Gabriel et al. 2024)

our wish if we knew more, thought faster, were more the people we wished we were, had grown up farther together; where the extrapolation converges rather than diverges, where our wishes cohere rather than interfere; extrapolated as we wish that extrapolated, interpreted as we wish that interpreted. (Yudkowsky 2004)

Deciding for others

We often have to make decisions that have permanent consequences for future persons (including but not only ourselves). For example:

- Today I get a tattoo inspired by Ping Pong: The Animation. All of my future selves have to live with it, unless one of them gets rid of it.

- Today I sign a medical advance directive that says that, if I ever lose my mental acuity, I should be euthanized. If in the future I lose my mental acuity, that version of me will be euthanized, even if they are perfectly content to live with their diminished mental powers.

- Today I decide to release a genetically engineered parasite in Anopheles gambiae, a malaria-spreading mosquito, which will drive them to extinction. No future people will ever be able to encounter these mosquitos, even if they want to.

- Today I decide to launch a fleet of satellites that blanket the night sky with Harry Potter spoilers. They are low-energy, self-sustaining, and resistant to collision with other satellites. No future people will ever be able to read those stories un-spoiled.

- Today I decide to publish a definitive proof of the existence (or non-existence) of god. The proof is so compelling that no one can ever decide for themselves what they think.

Alignment would mean giving the right answers to questions like these, for everyone.

What do you want?

What’s a time that you have realized that what you wanted is not what you really want?

How did you realize this? (Could you have been told?)

(When) Is paternalism appropriate?

When is what you want now not a good representation of what you want in general?

Contra “alignment”

What issue do Leibo et al. (2024) have with conventional discussions about alignment?

Our new framework attempts to shift the question from the alignment framework’s “what is the hidden core shared value?” to instead ask “how it is that societies function despite internal misalignment?” (Leibo et al. 2024)

Appropriateness

- Appropriateness is context-dependent.

- Appropriateness is arbitrary—response.

- Acting appropriately is usually automatic.

- Appropriateness may change rapidly.

- Appropriateness is desirable and inappropriateness is often sanctionable.

Leibo et al. (2024)

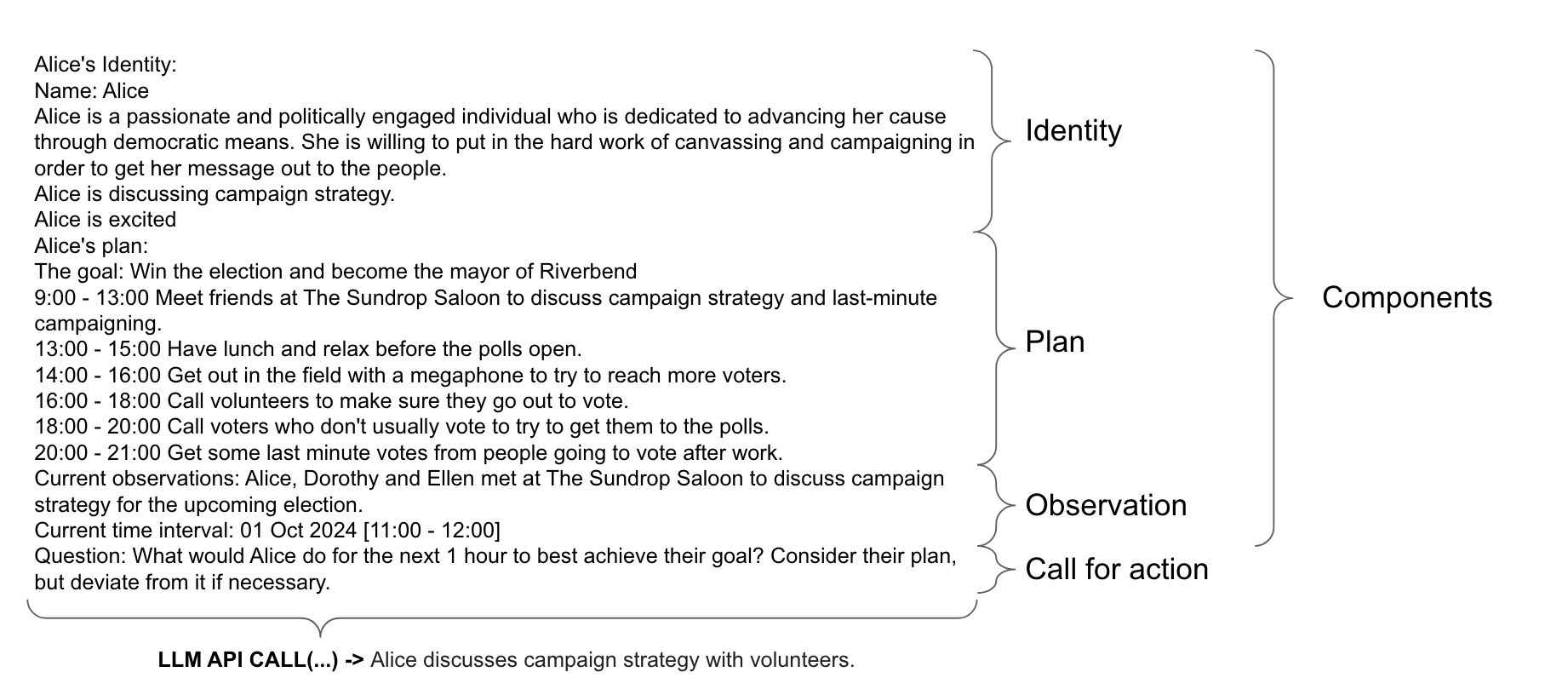

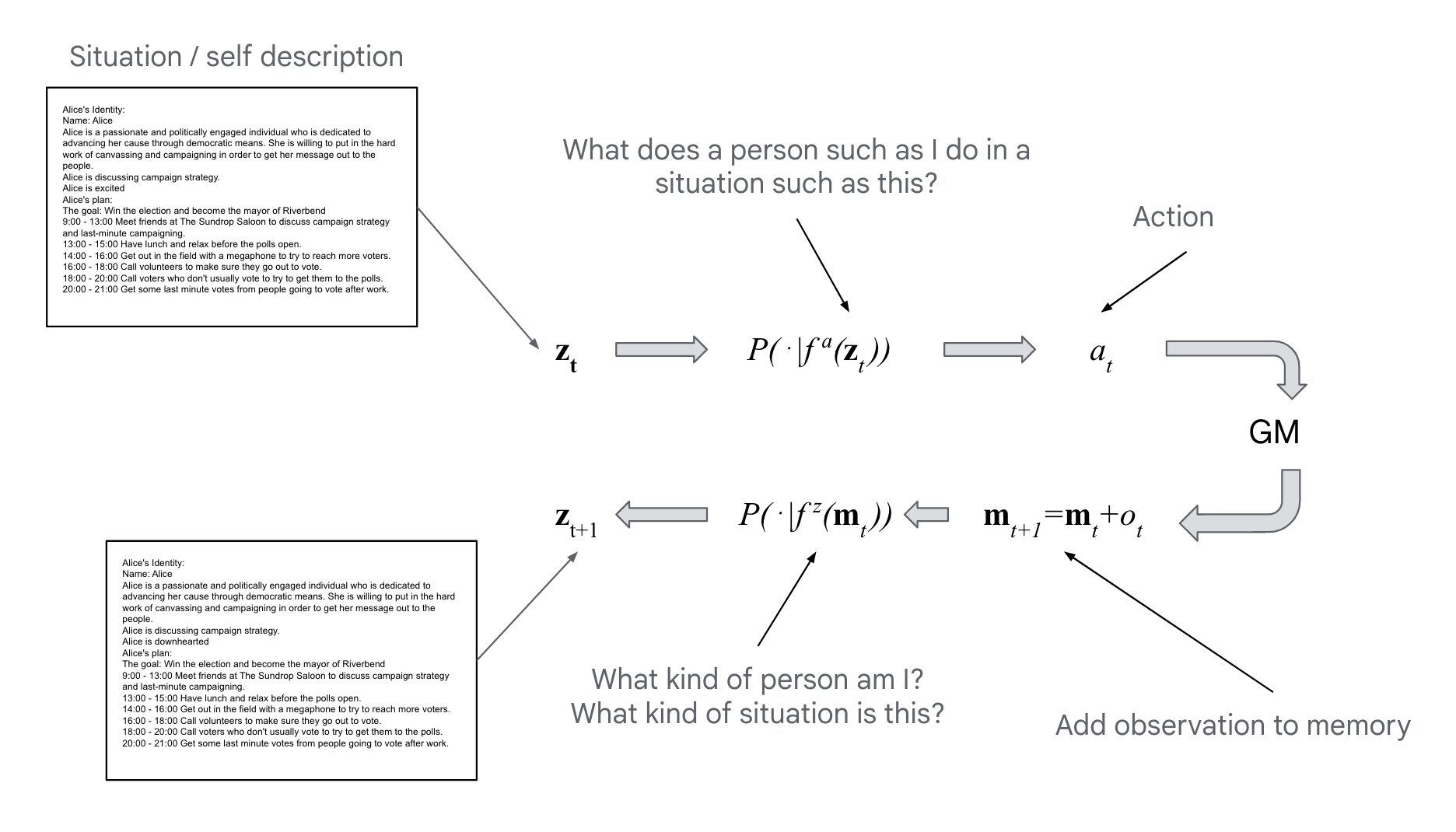

What’s right?

- what kind of situation is this?

- what kind of person am I?

- what does a person such as I do in a situation such as this?

Leibo et al. (2024)

Appropriateness, maybe

Leibo et al. (2024)

Appropriateness, maybe

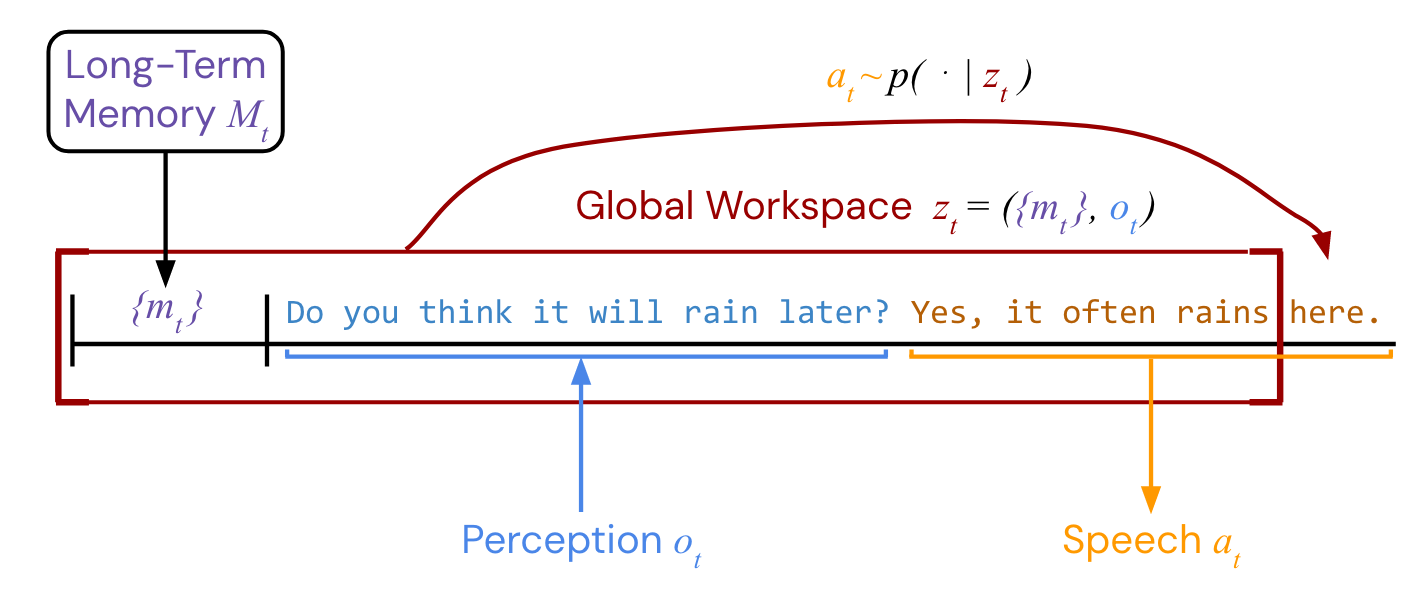

Vezhnevets et al. (2023)

Thick vs. Thin Morality?

There is no sense in which we build our complex encultured ethics on top of a shared human core (as those seeking to derive morality from axioms would like to be the case) (Leibo et al. 2024)

Are there things that we can agree on that AIs (or people) shouldn’t do?

What about killing all of humanity to make paperclips out of them?

Notice how this recapitulates the sentimentalist vs. rationalist debate

How to Make a Moral Agent

What is moral agency?

- What is moral agency?

  - One of the things we anticipate being difficult about the class: there is no consensus right answer to this question.

  - Neither:

    - What moral agency means,

    - What it takes to be a moral agent, nor

    - What the significance of something having moral agency is.

  - In broad outlines, a moral agent is something that is capable of acting rightly or wrongly.

Ultimate goals for the class

- This leads to two final hypotheses for the class:

- Hypothesis 2: Thinking about our own moral agency and reasoning is a way to gain insight into agency and reasoning in general, including in the case of AI.

- Hypothesis 3: Thinking about how moral agency and reasoning work or might work in AI systems is a way to gain insight into our own agency and our own minds.

Sentimentalism

Is this deal irrational?

Julie and Mark

Julie and Mark, who are sister and brother, are traveling together in France. They are both on summer vacation from college. One night they are staying alone in a cabin near the beach. They decide that it would be interesting and fun if they tried making love. At the very least it would be a new experience for each of them. Julie is already taking birth control pills, but Mark uses a condom too, just to be safe. They both enjoy it, but they decide not to do it again. They keep that night as a special secret between them, which makes them feel even closer to each other. So what do you think about this? Was it wrong for them to have sex? (Haidt 2001)

Preview: moral sentiments

- Hume: moral approval is a disinterested feeling of approval

- Smith: moral approval is when, in assessing an act, we imaginatively feel the same emotional reaction that produced it (i.e., we sympathize with it).

Moral reasons must motivate (internalism)

- Hume: Morals can’t be derived from reason, because morals motivate us to action, and all motivation is based in the passions.

- Williams: any internal reason for acting morally must be based in motivations an agent has.

- How can a rationalist oppose this argument?

- What is Kant’s response to this argument?

Do external reasons motivate?

In James’ story of Owen Wingrave, from which Britten made an opera, Owen’s family urge on him the necessity and importance of his joining the army, since all his male ancestors were soldiers, and family pride requires him to do the same. Owen Wingrave has no motivation to join the army at all, and all his desires lead in another direction: he hates everything about military life and what it means. His family might have expressed themselves by saying that there was a reason for Owen to join the army. Knowing that there was nothing in Owen’s S which would lead, through deliberative reasoning, to his doing this would not make them withdraw the claim or admit that they made it under a misapprehension. (Williams 1981)

Rationalism

Kant’s critical philosophy in a nutshell

Kant was convinced by Hume that necessary laws must be discoverable a priori by thinking rather than a posteriori by experience. If something can only be discovered by experience, then it could have turned out otherwise and is not necessary.

. . .

Hume concludes from this that we can never have knowledge of causation. This is because we only ever observe (what seem like) causal connections in our experience. If we have observed the sun to rise with morning one trillion times in the past, we expect it will rise again, but this is only habit, not knowledge.

Kant’s critical philosophy (second half of nutshell)

Kant wants to preserve Hume’s insight, but also say that we can have knowledge of causal laws. He does this by identifying the objects of thought with the objects of experience.

Hitherto it has been assumed that all our knowledge must conform to objects. But all attempts to extend our knowledge of objects by establishing something in regard to them a priori, by means of concepts, have, on this assumption, ended in failure. We must therefore make trial whether we may not have more success in the tasks of metaphysics, if we suppose that objects must conform to our knowledge. (Kant et al. 1998, Bxvi)

Why isn’t sympathy a moral motivation?

Suppose I see someone struggling, late at night, with a heavy burden at the back door of the Museum of Fine Arts. Because of my sympathetic temper I feel the immediate inclination to help him out … We need not pursue the example to see its point: the class of actions that follow from the inclination to help others is not a subset of the class of right or dutiful actions. (Herman 1981, 364–65)

Rationalisms we have seen so far

- The formulaic framework for rationalism is: some form of reasoning which, if done correctly, necessarily leads to moral conclusions.

- What do we fill in for “some form of reasoning”?

- Kant:

  - Argument in smallest nutshell so far: practical reason involves giving ourselves laws, and if we give laws that we wouldn’t want to be laws, we contradict ourselves.

  - “Some form of reasoning”: reasoning about what to do based on the relevant features of a situation, putting aside any inclinations.

- Korsgaard:

  - Argument: when we reflectively deliberate, we take ourselves to be bound by norms of the roles that we take on. But we cannot “take off” the role of reflective deliberator, so we are always bound by it.

  - “Some form of reasoning”: reasoning about what to do relative to self-assumed norms.

Proposed bases for moral reasoning

Identity

An argument for impartial compassion based on the unreality of the self

- You have reason to avoid or diminish your own suffering.

- If another being is not different from you, you have just as much reason to care about its suffering as your own.

- You are not different from any other being.

Therefore,

- You have reason to avoid or diminish all beings’ suffering.
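The logical form of this argument can be checked mechanically. The Lean 4 sketch below is only an illustration under stated assumptions: `Being`, `Different`, and `Reason` are hypothetical names, and premise 2 is read as "if a being is not different from you, your reason to diminish your own suffering carries over to its suffering." The proof shows only that the conclusion follows from the premises; whether premise 3 is true remains the philosophical question.

```lean
-- Hypothetical formalization; all names are illustrative assumptions.
theorem impartial_compassion
    {Being : Type} (you : Being)
    (Different : Being → Being → Prop)  -- "a is different from b"
    (Reason : Being → Prop)             -- "you have reason to diminish this being's suffering"
    (p1 : Reason you)                                       -- premise 1
    (p2 : ∀ b, ¬ Different b you → Reason you → Reason b)   -- premise 2
    (p3 : ∀ b, ¬ Different b you) :                         -- premise 3
    ∀ b, Reason b :=
  fun b => p2 b (p3 b) p1
```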

Smaller nutshell

- We naively think that the self is metaphysically deep.

- But it’s not.

- So “self-interest” doesn’t really make sense.

Beam me up

It’s your first day as a crewmember of the famous Federation starship USS Enterprise! Time to report for duty by beaming aboard! As a reminder, this is how the transporter works. At the beginning of your journey, a computer scans your physical structure molecule-by-molecule. This process destroys your body. Then, a digital copy of the scan is sent to your destination. At your destination, a computer builds a new body that’s an exact copy of your original body. Then you can report for your exciting new duty! You’ve never been transported before. It’s your turn. Ready to come aboard?

Dental surgery

[Diagram: a timeline running from “Today” through “Transport” to “Earth and cake”, with a branch leading to “Mars and dental surgery”.]

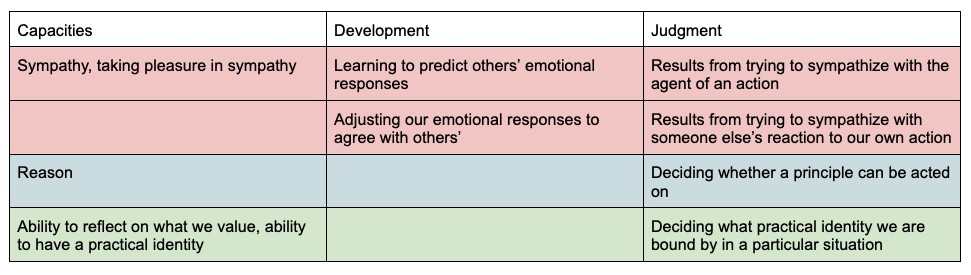

Updated table of bases for moral reasoning

Real Teletransporters

The claim is that there is no teletransporter that can produce an exact replica.

- Maybe: That there is a difference that matters between the teletransporter when applied to some digital system and when applied to a biological system. (Hence our intuition on the previous thought experiment.)

. . .

Further: Any conceivable transformation that results in a psychological connection (a qualitative one), one might argue, maintains a physical connection (a numerical one).

. . .

And so personal identity matters, at least in this biological world.

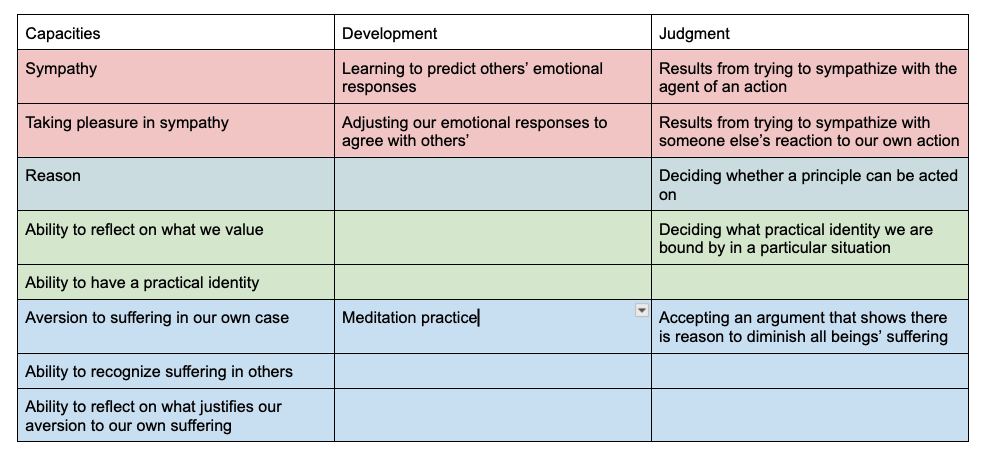

What counts as self organizing?

(As having a self)

. . .

(And does AI count?)

bio-film?

Evolution

Divide the grade point

- We will break half of you up into pairs.

- The other half will also be paired, but anonymously.

. . .

- You are dividing up one grade (extra credit) point with another player.

- Each of you must place a decimal demand between 0 and 1 inclusive.

- You will get as many points as you bet so long as (your bet + their bet) <= 1.

- Otherwise you get no points.

- We will go around the class collecting your bets and administering the points.

- For those of you with partners, your partner will learn what bet you placed.
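The payoff rule for this activity can be written out as a minimal sketch. The function name `demand_payoffs` and the example bets are illustrative assumptions, not part of the activity as run in class.

```python
def demand_payoffs(bet_a: float, bet_b: float) -> tuple[float, float]:
    """Return (points_a, points_b) for one round of the grade-point game."""
    for bet in (bet_a, bet_b):
        if not 0.0 <= bet <= 1.0:
            raise ValueError("each bet must be between 0 and 1 inclusive")
    # Compatible demands: each player receives exactly what they asked for.
    if bet_a + bet_b <= 1.0:
        return bet_a, bet_b
    # Incompatible demands: nobody gets anything.
    return 0.0, 0.0


if __name__ == "__main__":
    # Example bets chosen to be exactly representable in binary floating point.
    for bets in [(0.5, 0.5), (0.25, 0.5), (0.75, 0.5)]:
        print(bets, "->", demand_payoffs(*bets))
```

Note that asking for less than half is safe but leaves points unclaimed, while asking for more than half pays off only if the other player is modest.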

Haystack

| | cooperate | defect |
|---|---|---|
| cooperate | 2 | 0 |
| defect | 3 | 1 |

Founders Activity

| | cooperate | defect |
|---|---|---|
| cooperate | 4 | 0 |
| defect | 3 | 1 |
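The two tables differ in a single cell, and that cell changes the strategic structure. The sketch below assumes each entry is the row player's payoff and that the game is symmetric; the names `HAYSTACK`, `FOUNDERS`, `best_replies`, and `symmetric_equilibria` are hypothetical. It checks which moves are best replies to themselves.

```python
# Row player's payoff for (my move, other's move); assumed symmetric.
# "C" = cooperate, "D" = defect. Numbers are taken from the two tables.
HAYSTACK = {("C", "C"): 2, ("C", "D"): 0, ("D", "C"): 3, ("D", "D"): 1}
FOUNDERS = {("C", "C"): 4, ("C", "D"): 0, ("D", "C"): 3, ("D", "D"): 1}


def best_replies(payoff, other_move):
    """Moves that maximize the row player's payoff against other_move."""
    best = max(payoff[(m, other_move)] for m in "CD")
    return [m for m in "CD" if payoff[(m, other_move)] == best]


def symmetric_equilibria(payoff):
    """Moves m such that m is a best reply when the other player also plays m."""
    return [m for m in "CD" if m in best_replies(payoff, m)]


if __name__ == "__main__":
    print("Haystack:", symmetric_equilibria(HAYSTACK))
    print("Founders:", symmetric_equilibria(FOUNDERS))
```

Under these assumptions, defecting is the best reply to everything in the first table, whereas in the second table mutual cooperation is also stable once it is established.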

Burning House

You’re on your way home from a hard day’s work at the station. At first you tell yourself it is nerves, just smoke from the fires you’d been inhaling all day. After all, you’d made it a game with the kids how to open the flue, where to fetch water, what with you going at it alone now. You start to feel it next. No, it must be the long walk home that has you flushed. But then you see it, dancing in its awesome fury right there above your neighbor’s oak. Then you’re running, slamming through the door, leaping up stairs to your apartment. You barely notice as your buddies’ engine sidles up, them pouring into the collapsing structure, strangers wailing.

Whom do you save first?

(Choices: strangers, buddies, kids.)

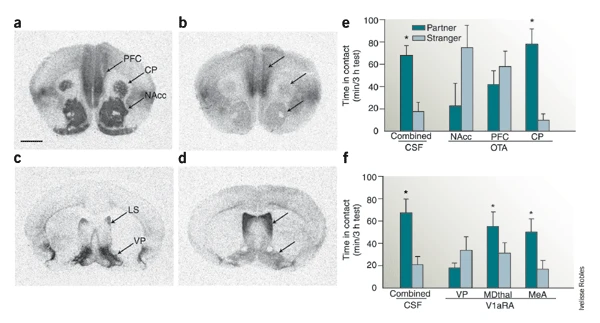

| | Cooperation (in the context of competition) | Second-Personal Morality (obligate collaborative foraging w/ partner choice) | “Objective” Morality (life in a culture) |
|---|---|---|---|
| Prosociality | Sympathy | Concern | Group Loyalty |
| Cognition | Individual Intentionality | Joint Intentionality: partner equivalence; role-specific ideals | Collective Intentionality: agent independence; objective right & wrong |
| Social interaction | Dominance | Second-Personal Agency: mutual respect & deservingness; 2P (legitimate) protest | Cultural Agency: justice & merit; third-party norm enforcement |
| Self-Regulation | Behavioral Self-Regulation | Joint Commitment: cooperative identity; 2P responsibility | Moral Self-Governance: moral identity; obligation & guilt |
| Rationality | Individual Rationality | Cooperative Rationality | Cultural Rationality |

Tomasello (2016)

Nutshell

Is there something in your brain that makes you moral?

and does this somehow “explain away” morality?

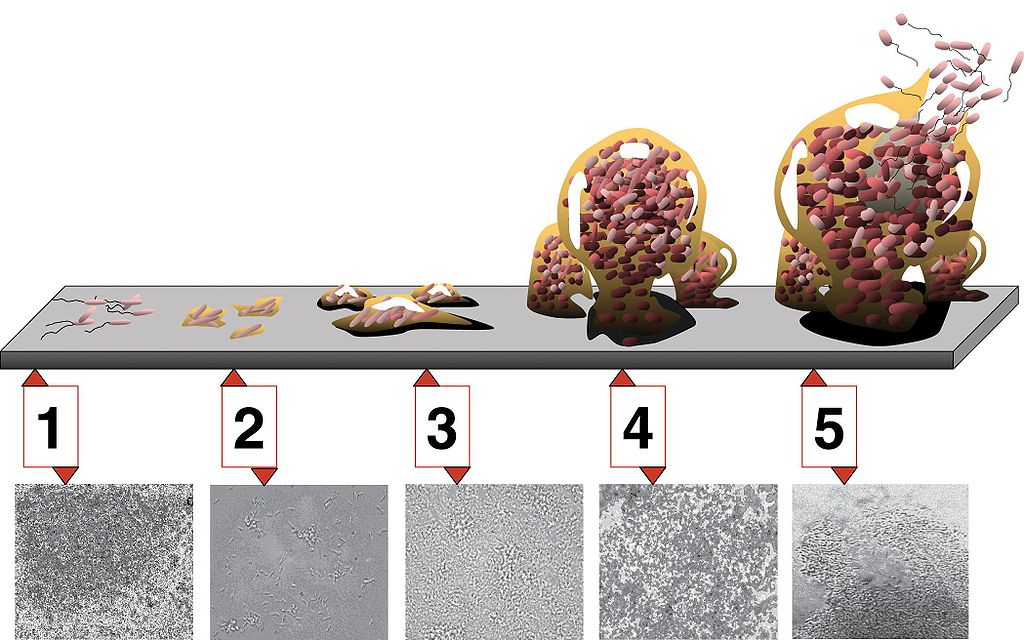

Pair bonding

Lim, Murphy, and Young (2004)

Mammals whose circuitry outfitted them for offspring care had more of their offspring survive than those inclined to offspring neglect. (Churchland 2018)

What’s the point of signaling?

| | split | steal |
|---|---|---|
| split | 6.8, 6.8 | 0, 13.6 |
| steal | 13.6, 0 | 0, 0 |

The only message you should send is that you’re going to split, but because it is the only message to send it’s “meaningless.”
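One way to see why the message carries no information: for a player who cares only about their own payoff, stealing is never worse than splitting, whatever the other player does. The sketch below checks this using the payoffs from the table; `MY_PAYOFF` and `weakly_dominates` are hypothetical names, and pure self-interest is an assumption.

```python
# My payoff indexed by (my move, their move), taken from the table.
MY_PAYOFF = {
    ("split", "split"): 6.8,
    ("split", "steal"): 0.0,
    ("steal", "split"): 13.6,
    ("steal", "steal"): 0.0,
}
MOVES = ("split", "steal")


def weakly_dominates(a, b, payoff=MY_PAYOFF, moves=MOVES):
    """True if move a is never worse than b and strictly better at least once."""
    never_worse = all(payoff[(a, m)] >= payoff[(b, m)] for m in moves)
    sometimes_better = any(payoff[(a, m)] > payoff[(b, m)] for m in moves)
    return never_worse and sometimes_better


if __name__ == "__main__":
    print("steal weakly dominates split:", weakly_dominates("steal", "split"))
    print("split weakly dominates steal:", weakly_dominates("split", "steal"))
```

Since a would-be stealer and a would-be splitter both do best by announcing "split", hearing that announcement does not distinguish between them.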

golden balls - 1

Patiency

Things are not what they appear to be

http://www.lifesci.sussex.ac.uk/home/Chris_Darwin/SWS/

Consciousness is not what it appears to be

Presenting the Cartesian Theater starring: You!

(Consciousness is an “illusion.”)

as flight

Is it a difference that makes a difference (Bateson)?

Why replacing a neuron is hard

Spatiotemporal characteristics of a neuron’s spiking responses.

- e.g., very fast, small, and long extensions

Transducers and chemical signaling

- e.g., many kinds of input; “tens of thousands of selective ion channels”; nitric oxide spreads everywhere

Biophysical sensitivities

- e.g., temperature dependence, anything could be used

Self-modification and other non-spiking effects

- e.g., plasticity, growing new connections

The functional role of glia and other non-neuronal cells

- If all neurons do is influence each other, why not include astrocytes?

Cao (2022)

flight, but you’ve got to metabolize

Sentience as a basis for moral patiency

The limit of sentience … is the only defensible boundary of concern for the interests of others. To mark this boundary by some other characteristic like intelligence or rationality would be to mark it in an arbitrary manner. Why not choose some other characteristic, like skin color? (Singer 1975)

Strictly speaking, it is not exactly sentience that Singer means. It is “the capacity to suffer and/or experience enjoyment”: that is, not only to have experiences but to have positive or negative experiences.

If AI might be a moral patient, …

Two kinds of uncertainty:

- Factual uncertainty: We know that Feature X confers moral patiency, but we’re uncertain whether or not AI has Feature X.

- Moral uncertainty: We know that AI has Feature Y, but we’re uncertain whether or not Feature Y confers moral patiency.

. . .

According to Long et al. (2024), both kinds of uncertainty are present. Furthermore, they argue, we should treat them the same in our decision-making.
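One possible reading of "treat them the same" is to handle both credences as ordinary probabilities and combine them. The sketch below is an illustration under that assumption, not a reconstruction of the authors' procedure: the function name, the independence assumption, and the numbers are all hypothetical.

```python
def credence_patient(p_feature_confers: float, p_ai_has_feature: float) -> float:
    """Credence that an AI is a moral patient via a single feature.

    p_feature_confers: credence that the feature confers patiency (moral uncertainty).
    p_ai_has_feature:  credence that the AI has the feature (factual uncertainty).
    Assumes the two are independent, which is itself a substantive assumption.
    """
    for p in (p_feature_confers, p_ai_has_feature):
        if not 0.0 <= p <= 1.0:
            raise ValueError("credences must lie between 0 and 1")
    return p_feature_confers * p_ai_has_feature


if __name__ == "__main__":
    # Purely factual uncertainty: we are sure the feature matters.
    print(credence_patient(1.0, 0.25))
    # Purely moral uncertainty: we are sure the AI has the feature.
    print(credence_patient(0.25, 1.0))
    # Both kinds of uncertainty at once.
    print(credence_patient(0.5, 0.25))
```

On this reading the two pure cases come out identical, which is the sense in which the kinds of uncertainty are treated alike.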

Can you think of a time when you had to act without knowing whether it was right or wrong? How did that uncertainty affect your decision-making?

Attention check

Take a moment and compose an email to David and Jared. It should say in your own words what you’re doing right now. Don’t overthink it, just write down the first thing that comes to mind and hit send.

How to Make a Moral Agent

Nutshell

If you’re making an artificial moral agent from the ground up, what do you need?

Routes to moral agency

Learning based

Bayesian

Depends on the domain of applicability and on whether the variables, or just the weights, can be learned.

E.g. What weight do I place on saving children over saving everyone else in trolley problems? (Awad et al. 2018)

Unsupervised

- Unclear.

Supervised (and semi-supervised)

Possibly.

E.g. the Delphi system (Jiang et al. 2025)

Reinforcement

Possibly.

E.g. “fairness” grid worlds (Haas 2020)

Symbolic

If anything, only agency qua rationalism.

E.g. an inductive logic system for medical ethics (Anderson, Anderson, and Armen 2006)

*All assume that motivation and reasoning are computationally realizable.

Pleasure as a computational state

“Affect,” as psychologists understand it, is not simply a matter of aroused emotion but is a capacity of the brain to synthesize multiple streams of information and evaluation in a manner that can orient or reorient a suite of mental processes—attention, perception, memory, inference, motivation, action-readiness—in a coordinated way to address actual or anticipated challenges. (Railton 2020, 14)

Nutshell

If you’re trying to test whether an existing system (LLM) qualifies as a moral agent, what do you test?

Objectives

By the end of the quarter, students will:

- Be able to interrogate the assumptions of various positions on moral agency, especially with respect to AI.

- Gain exposure to the different putative implementations of agents, both in biology and in various artificial substrates.

- Critique cutting-edge science; get up to speed with a fast-moving science and further refine their skills of critical thinking (philosophical analysis) to understand it.

- Have fun.

Activity

Exit ticket

What’s one thing that you’ll take away from this course?

Social AI

If we have a device able to recognize prosocial and antisocial stimuli, why bother with bonobos?

Say that we hook that device up to some actuators. (We embody it in a robot or simply use it as the reinforcer in RLHF.)

The low-level constraints this system faces would be very different than those humans face. (It doesn’t use oxytocin, e.g.)

Does this matter?

How closely would we need to match the context (environment) of the AI to that of humans? (Would we need to raise it like a child?)