Correlation

Outline

Scatterplot: a display of two continuous variables

Key concept: correlation, a numerical summary of the scatter plot.

Reading: Chapter 6 of Intro Stats

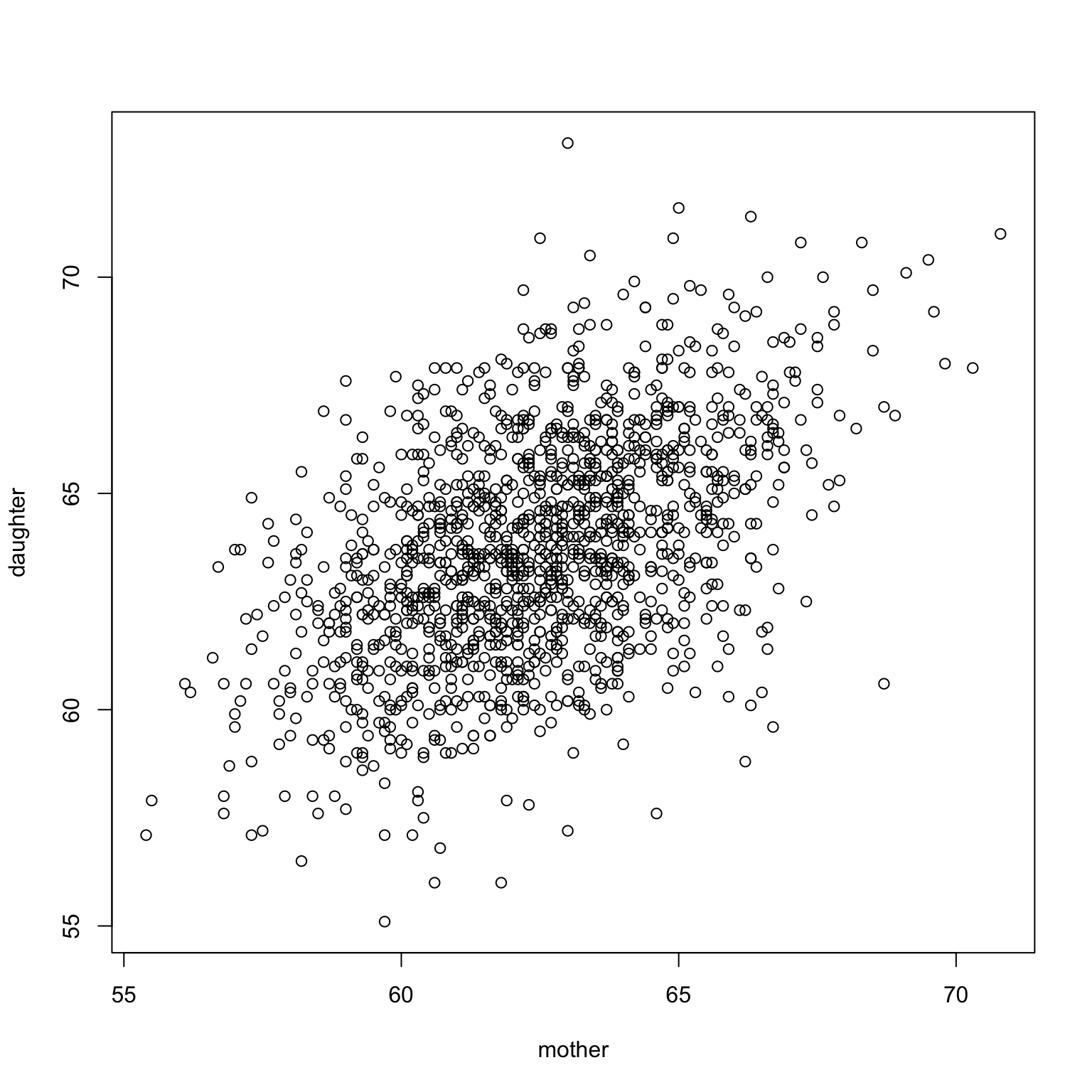

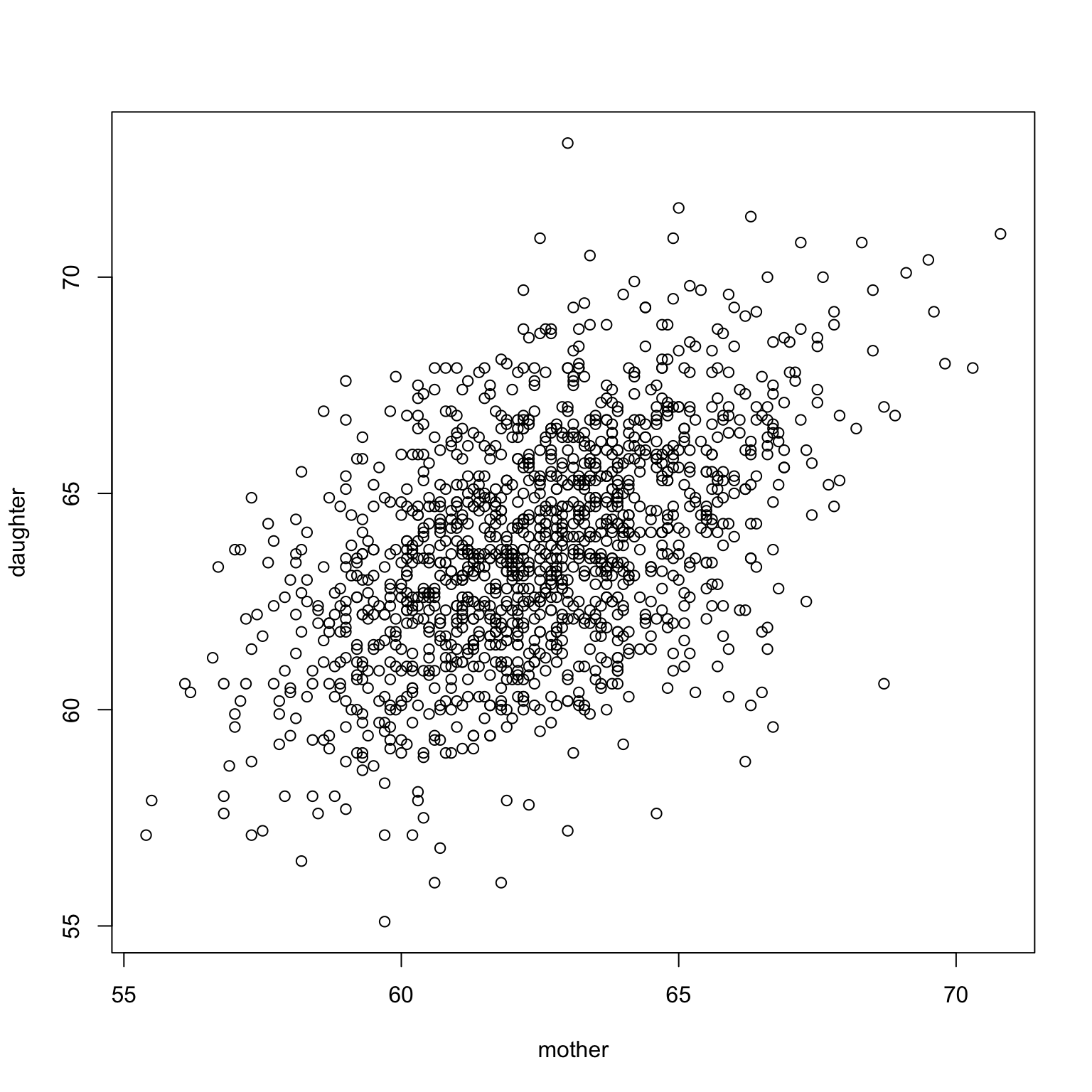

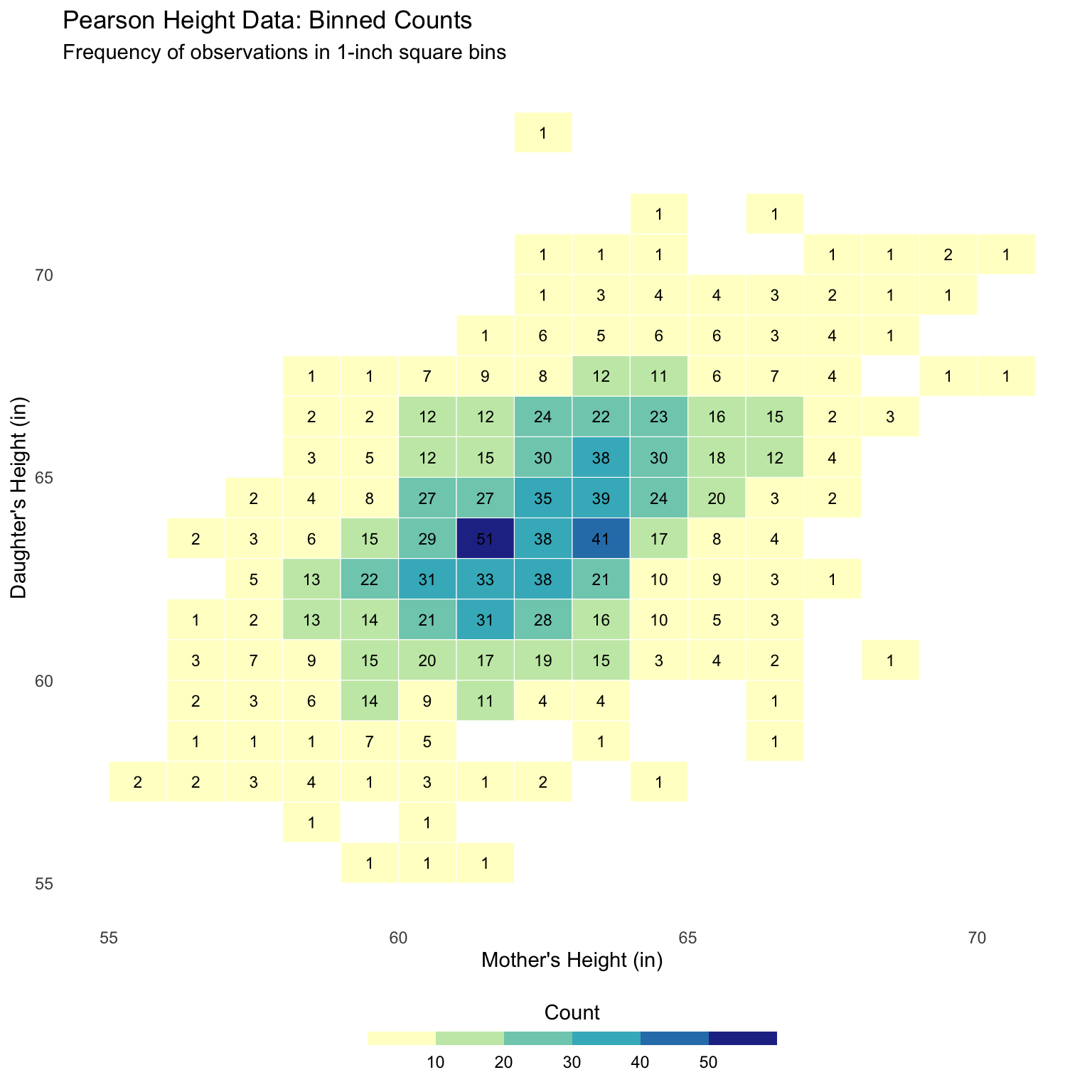

Scatter plot

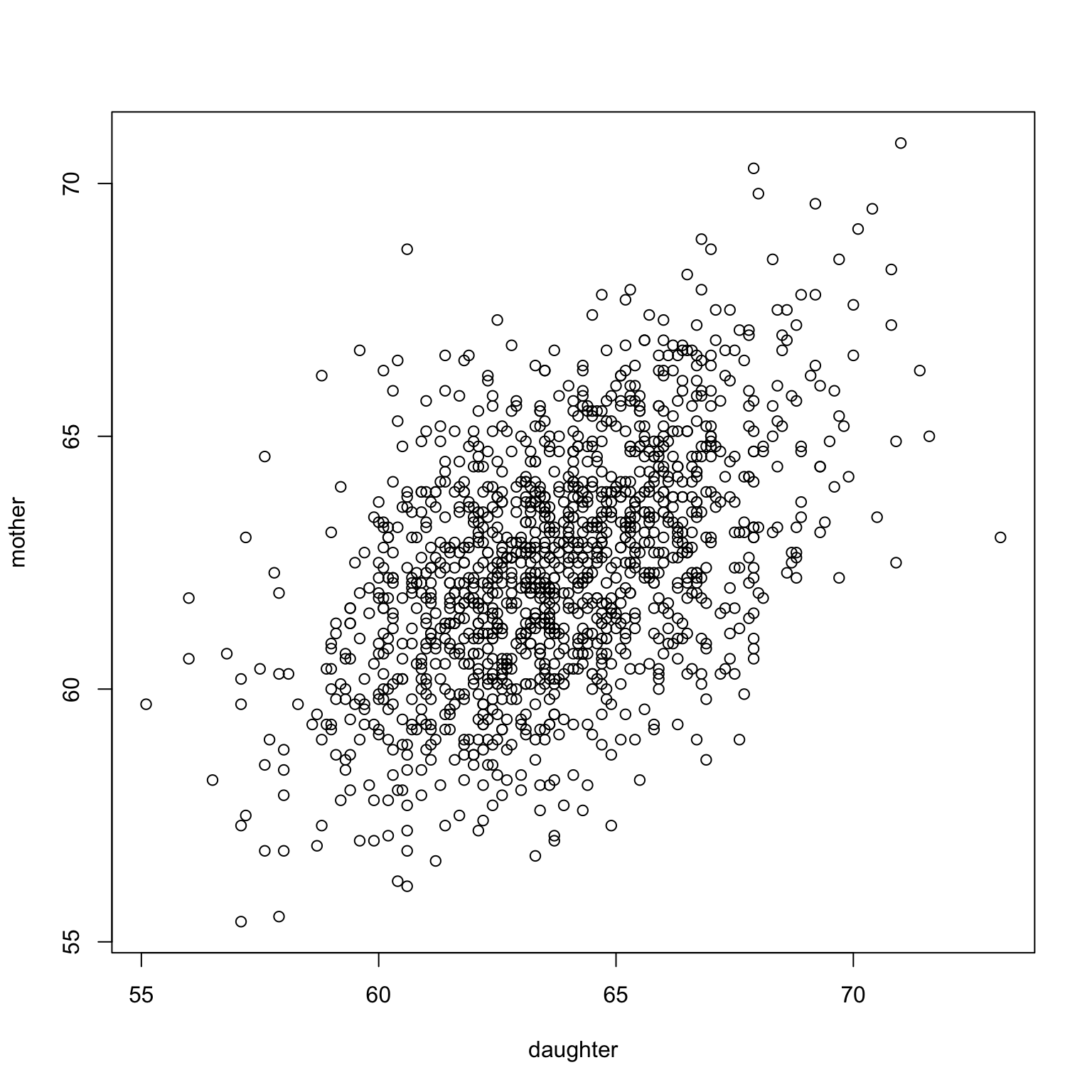

Pearson’s data again: heights of mothers and daughters recorded in the early 20th century

Clear pattern visible from the plot: mothers who are taller often give birth to taller daughters.

Scatter plot

A plot with two axes:

X-axis is the independent variable;

Y-axis is the dependent variable.

Graphical representation of the relationship between two variables.

What can a scatter plot tell us?

- There is a positive association between the heights of mother and daughter: from the plot, daughters born to taller mothers tend to be taller.

Note

There could be a negative relationship.

There could be no relationship.

There could be a nonlinear relationship.

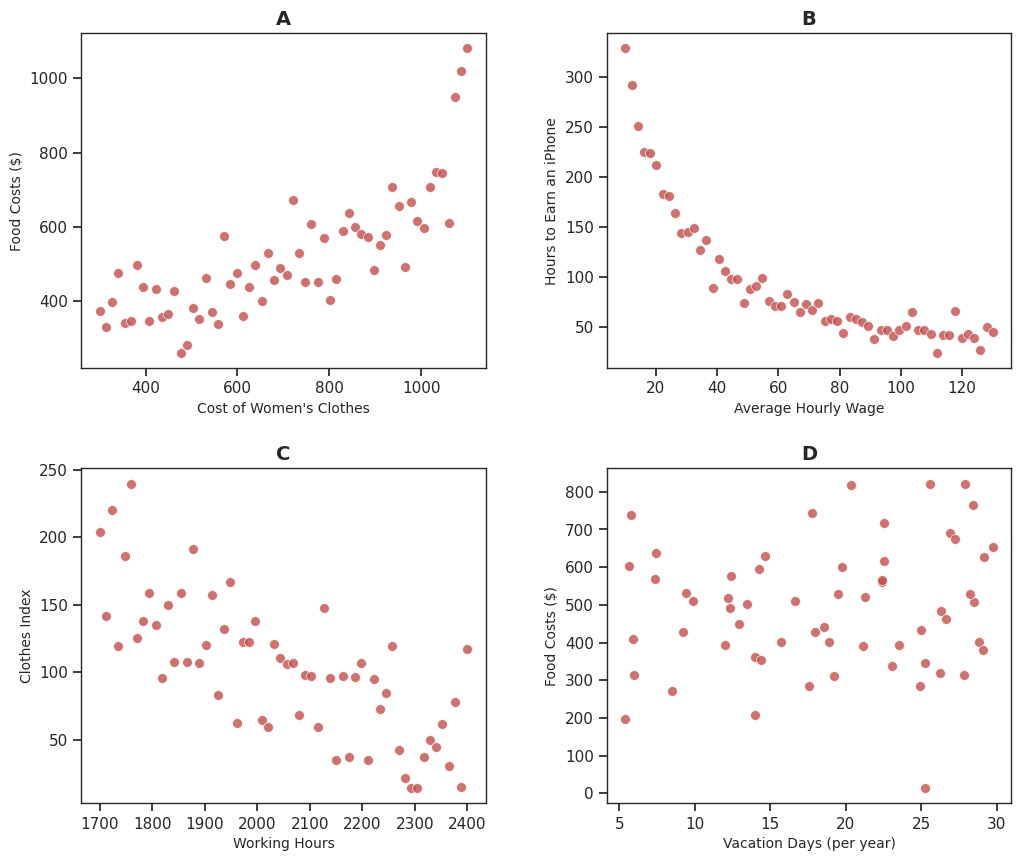

Different patterns

Correlation

A numerical summary of a scatterplot, i.e. a pair of datasets.

Captures linear association between the datasets.

If there is a strong association between two variables, then knowing one can help a lot in predicting the other.

When there is a weak association, information about one variable does not help much in guessing the other.

Correlation coefficient

The correlation coefficient, \(r\), is a measure of the strength of this association.

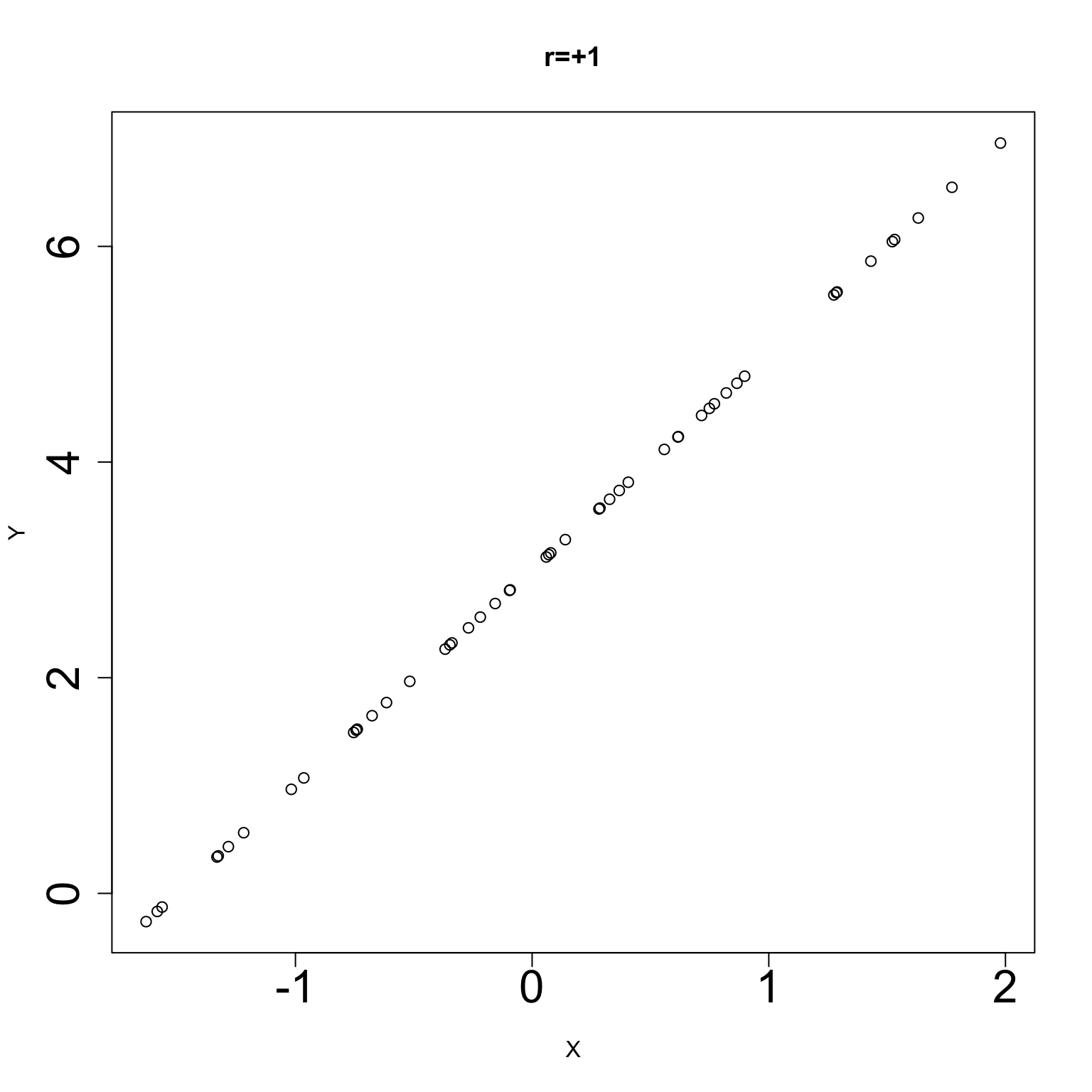

\(r=+1\) if the variables are perfectly positively associated.

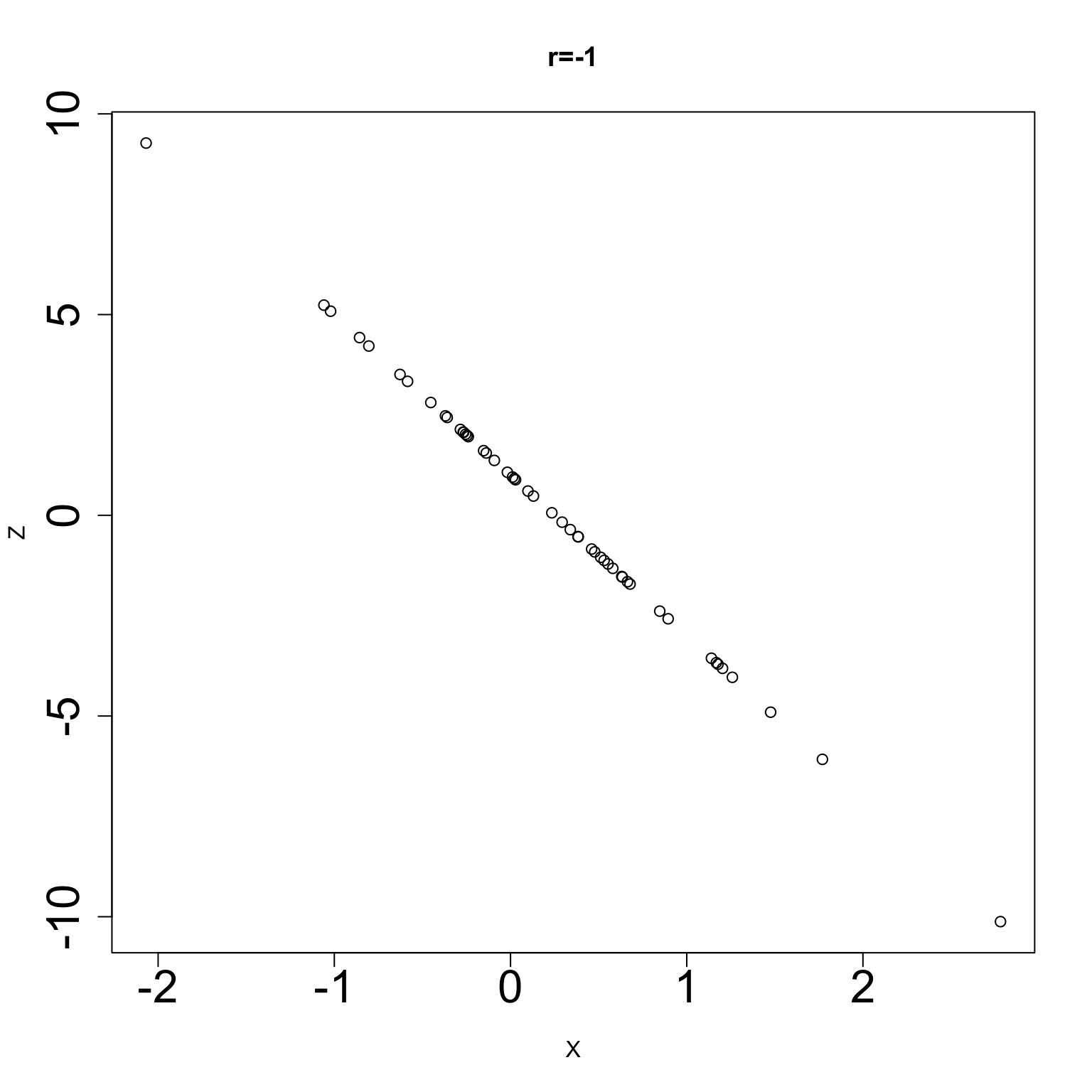

\(r=-1\) if the variables are perfectly negatively associated.

Perfectly positively correlated, \(r=+1\)

Perfectly negatively correlated, \(r=-1\)

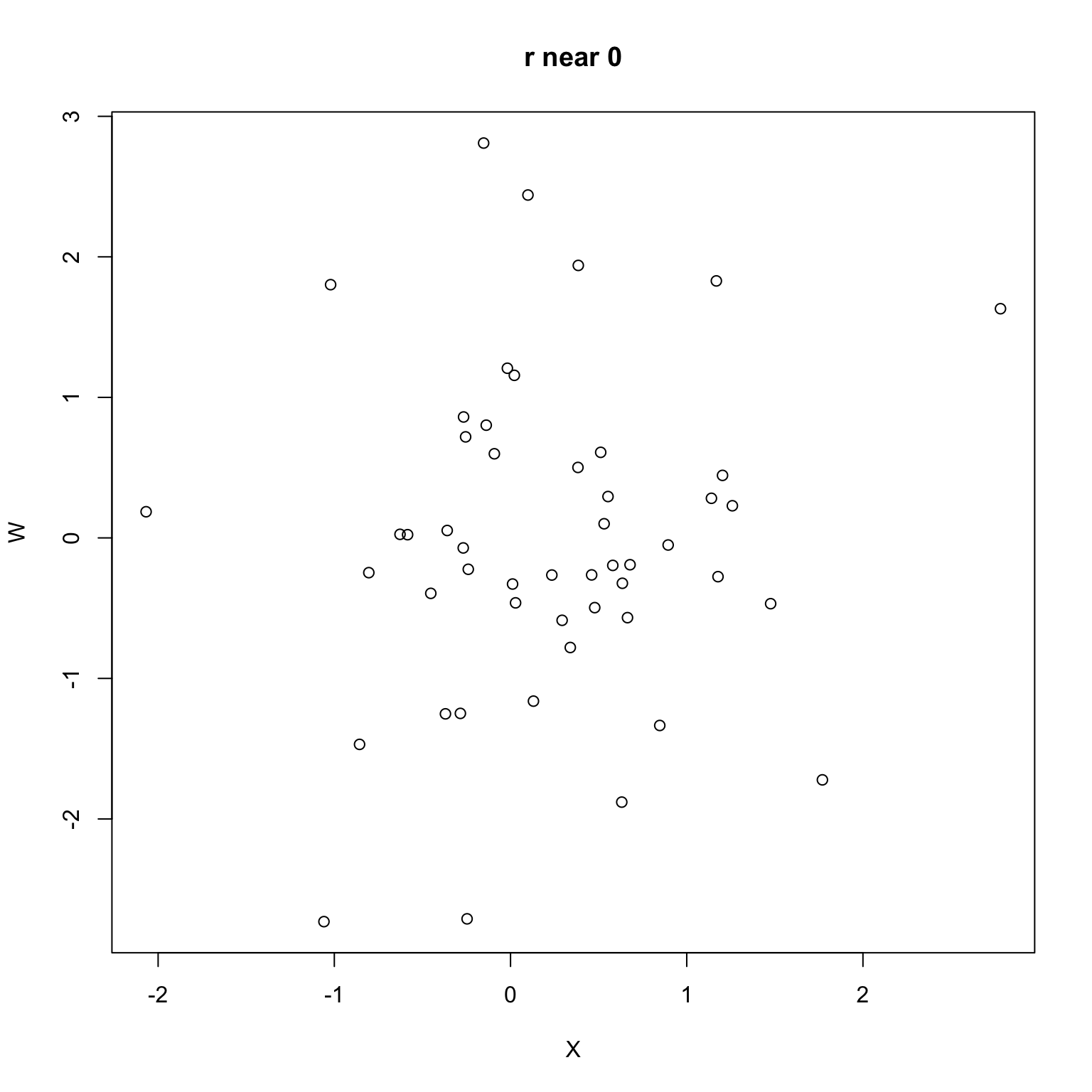

Uncorrelated variables (no relation): \(r\) is near 0

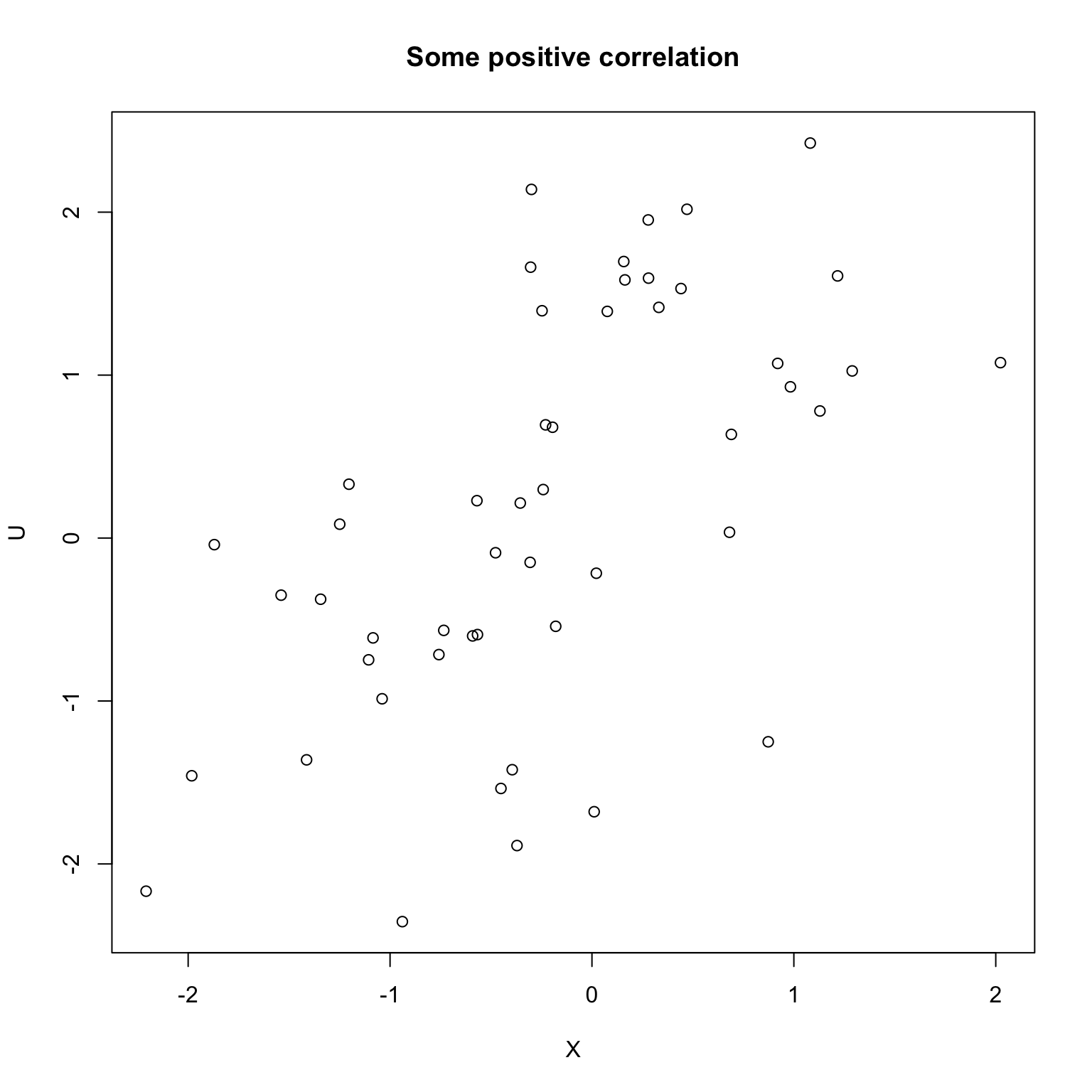

Positive but not perfect

Standardized units

- Given a list \(X\), we define the standardized list as the list with its mean removed and scaled to have an SD of 1.
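As a minimal sketch of standardization in Python: the helper name `standardize` and the data are made up for illustration, and the SD is assumed to use the \(n-1\) denominator so that it matches the formula for \(r\) used in these notes.

```python
import math

def standardize(values):
    """Return the list with its mean removed and scaled to have SD 1.

    Assumption: the SD uses the n-1 denominator.
    """
    n = len(values)
    mean = sum(values) / n
    sd = math.sqrt(sum((v - mean) ** 2 for v in values) / (n - 1))
    return [(v - mean) / sd for v in values]

# Made-up data, for illustration only.
z = standardize([2, 4, 6, 8])
print(z)
```

The standardized list has mean 0 and SD 1 (up to floating-point rounding), whatever units the original list was in.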

Computing \(r\), the correlation coefficient

The formula for correlation is easily expressed in terms of the standardized variables.

The correlation of \(X\) and \(Y\) is (almost) the average of the product of \(Z_X\) and \(Z_Y\):

\[ r = \frac{1}{n-1} \sum_{i=1}^n Z_{X,i} * Z_{Y,i} \]

Why isn’t it exactly 1?

- Recall \(X\) and \(Y\) were perfectly correlated

Doh! It’s the denominator again…

- The last line should really be dividing by \(n-1\) as well
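The effect of the denominator can be sketched in Python with made-up, perfectly correlated data. The helper names are hypothetical, and the SD is assumed to use \(n-1\). Taking a plain average of the products (dividing by \(n\)) gives \((n-1)/n\) rather than 1.

```python
import math

def standardize(values):
    """Standardize using the n-1 SD (an assumption of this sketch)."""
    n = len(values)
    mean = sum(values) / n
    sd = math.sqrt(sum((v - mean) ** 2 for v in values) / (n - 1))
    return [(v - mean) / sd for v in values]

def sum_of_products(x, y):
    """Sum of the products of the standardized entries."""
    return sum(a * b for a, b in zip(standardize(x), standardize(y)))

x = [1, 2, 3, 4, 5]
y = [2 * v + 1 for v in x]  # perfectly positively correlated with x
n = len(x)

r_div_n = sum_of_products(x, y) / n                # plain average: close to (n-1)/n = 0.8
r_div_n_minus_1 = sum_of_products(x, y) / (n - 1)  # formula for r: close to 1

print(r_div_n, r_div_n_minus_1)
```

With \(n = 5\) the gap between 0.8 and 1 is large; for big \(n\) the two denominators give nearly the same answer.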

Why bother with \(n\) vs \(n-1\)?

There are good reasons statisticians do this, but you’ll have to trust us (i.e. statisticians…)

Most noticeable when \(n\) is not very big…

We won’t emphasize this \(n\) vs \(n-1\) in the denominator too much, but it will come up again in regression.

Summation notation

- The entries of the lists \(Z_X, Z_Y, Z_{XY}\) are:

\[\begin{aligned} Z_{X,i} &= \frac{X_i - \bar{X}}{\text{SD}(X)} \\ Z_{Y,i} &= \frac{Y_i - \bar{Y}}{\text{SD}(Y)} \\ Z_{XY,i} &= Z_{X,i} \times Z_{Y,i} \end{aligned}\]

- Then,

\[r = r(X,Y) = \frac{1}{n-1} \sum_{i=1}^n Z_{XY,i}.\]

- Another way:

\[r = r(X,Y) = \frac{\frac{1}{n-1} \sum_{i=1}^n (X_i - \bar{X}) (Y_i - \bar{Y})}{\text{SD}(X) \text{SD}(Y)}\]

- Yet another way:

\[ r(X, Y) = \frac{\sum_{i=1}^n (X_i-\bar{X})(Y_i - \bar{Y})}{\sqrt{\sum_{i=1}^n (X_i - \bar{X})^2} * \sqrt{\sum_{i=1}^n (Y_i - \bar{Y})^2}} \]

- One more way:

\[r = r(X,Y) = \frac{\frac{n}{n-1} (\overline{XY} - \bar{X} * \bar{Y})}{\text{SD}(X) \text{SD}(Y)}\]
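As a check that these expressions agree, here is a small Python sketch with one function per form. The function names and the data are invented for illustration, and \(\text{SD}\) is assumed to use the \(n-1\) denominator throughout.

```python
import math

def mean(values):
    return sum(values) / len(values)

def sd(values):
    """SD with the n-1 denominator (assumed convention)."""
    m = mean(values)
    return math.sqrt(sum((v - m) ** 2 for v in values) / (len(values) - 1))

def r_covariance_form(x, y):
    """Average product of deviations (n-1 denominator) over SD(X) SD(Y)."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y)) / (len(x) - 1)
    return cov / (sd(x) * sd(y))

def r_sums_of_squares_form(x, y):
    """Sum of products of deviations over the root sums of squares."""
    mx, my = mean(x), mean(y)
    num = sum((a - mx) * (b - my) for a, b in zip(x, y))
    den = math.sqrt(sum((a - mx) ** 2 for a in x)) * math.sqrt(
        sum((b - my) ** 2 for b in y)
    )
    return num / den

def r_mean_of_product_form(x, y):
    """n/(n-1) times (mean of XY minus product of means) over SD(X) SD(Y)."""
    n = len(x)
    mean_xy = mean([a * b for a, b in zip(x, y)])
    return (n / (n - 1)) * (mean_xy - mean(x) * mean(y)) / (sd(x) * sd(y))

# Made-up data, for illustration only.
x = [2.0, 3.5, 5.0, 7.5, 9.0]
y = [1.0, 4.0, 3.0, 8.0, 6.0]

r1 = r_covariance_form(x, y)
r2 = r_sums_of_squares_form(x, y)
r3 = r_mean_of_product_form(x, y)
print(r1, r2, r3)
```

The three values should match up to floating-point rounding. In the sums-of-squares form the \(n-1\) factors cancel, so it does not depend on the denominator convention.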

Example

- Suppose our datasets are

X = [1,4,6,9,3],Y = [-2,2,8,0,1].

\[\begin{aligned} \bar{X} &= 4.6 & \text{SD}(X) &= 3.05 \\ \bar{Y} &= 1.8 & \text{SD}(Y) &= 3.77 \\ \end{aligned}\]

- The only new thing to compute is \(\overline{XY}\)

\[XY = [-2,8,48,0,3], \qquad \overline{XY}=(-2+8+48+0+3)/5=11.4\]

Therefore (note the 5/4…) \[ r = \frac{\frac{5}{4}(11.4 - 4.6 * 1.8)}{3.05 * 3.77} \approx 0.34\]
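The arithmetic in this example can be reproduced with a short Python sketch. The variable names are chosen here for illustration, and the SDs use the \(n-1\) denominator, as in the values quoted above.

```python
import math

x = [1, 4, 6, 9, 3]
y = [-2, 2, 8, 0, 1]
n = len(x)

mean_x = sum(x) / n
mean_y = sum(y) / n

# SDs with the n-1 denominator.
sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x) / (n - 1))
sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y) / (n - 1))

# Entry-by-entry products and their plain average.
xy = [a * b for a, b in zip(x, y)]
mean_xy = sum(xy) / n

# The n/(n-1) factor is the 5/4 in the formula above.
r = (n / (n - 1)) * (mean_xy - mean_x * mean_y) / (sd_x * sd_y)

print(round(sd_x, 2), round(sd_y, 2))  # 3.05 3.77
print(round(r, 2))                     # 0.34
```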

Properties of correlation

Correlation is unitless.

Changing units of \(X\) or \(Y\) does not change the correlation.

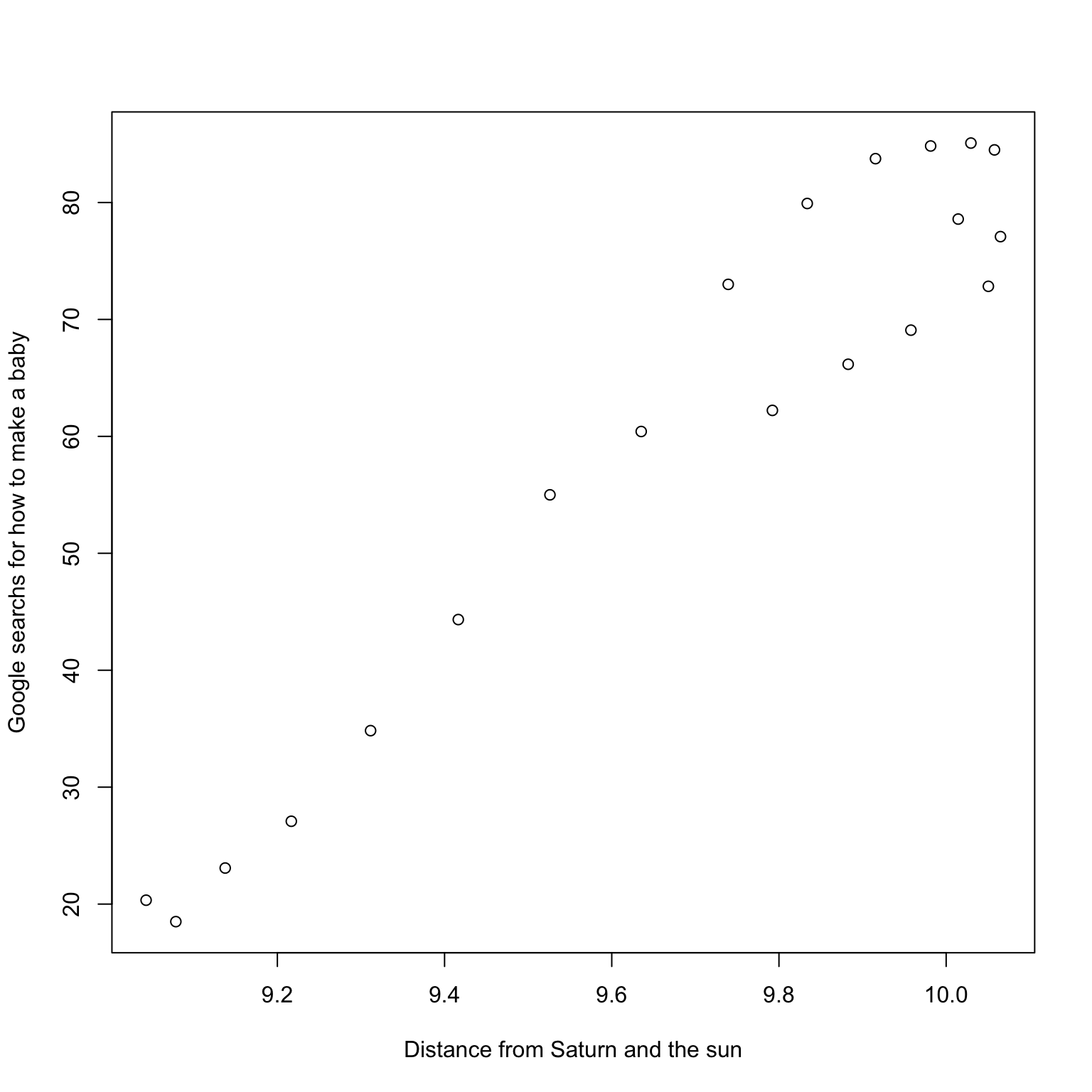

Correlation does not change if we interchange \(X\) and \(Y\): it is symmetric.

- Like `mean` and `sd`, `cor` is not robust to outliers.

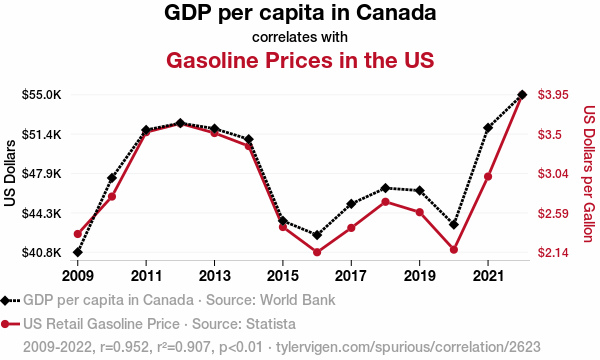
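These properties can be checked numerically. The sketch below uses made-up heights (not Pearson's data) and a hypothetical helper `correlation`; the outlier is an invented point added only to show the effect.

```python
import math

def correlation(x, y):
    """Correlation coefficient via the sums-of-squares form."""
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    num = sum((a - mx) * (b - my) for a, b in zip(x, y))
    den = math.sqrt(sum((a - mx) ** 2 for a in x)) * math.sqrt(
        sum((b - my) ** 2 for b in y)
    )
    return num / den

# Made-up heights in inches, for illustration only.
mother = [60, 62, 63, 65, 68]
daughter = [61, 62, 65, 64, 69]

r = correlation(mother, daughter)

# Changing units (inches to centimetres) leaves r unchanged.
mother_cm = [2.54 * h for h in mother]
r_units = correlation(mother_cm, daughter)

# Interchanging X and Y leaves r unchanged.
r_swapped = correlation(daughter, mother)

# A single invented outlier changes r a lot.
r_outlier = correlation(mother + [75], daughter + [50])

print(r, r_units, r_swapped, r_outlier)
```

With only five points, one extreme pair is enough to turn a strong positive correlation into a negative one.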

Correlation is not causation

X=mother, Y=daughter

X=daughter, Y=mother

- Swapping the \(X\) (independent) and \(Y\) (dependent) axes does not change the fundamental relation.

Correlation is not causation

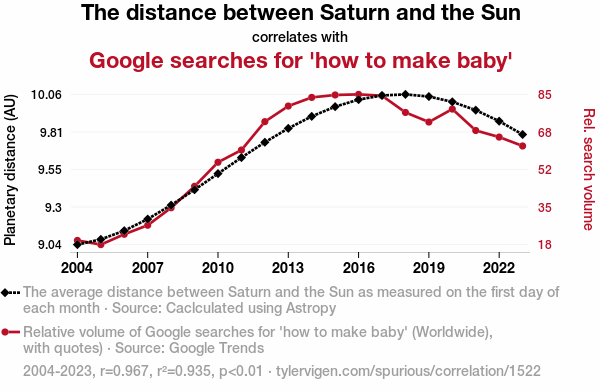

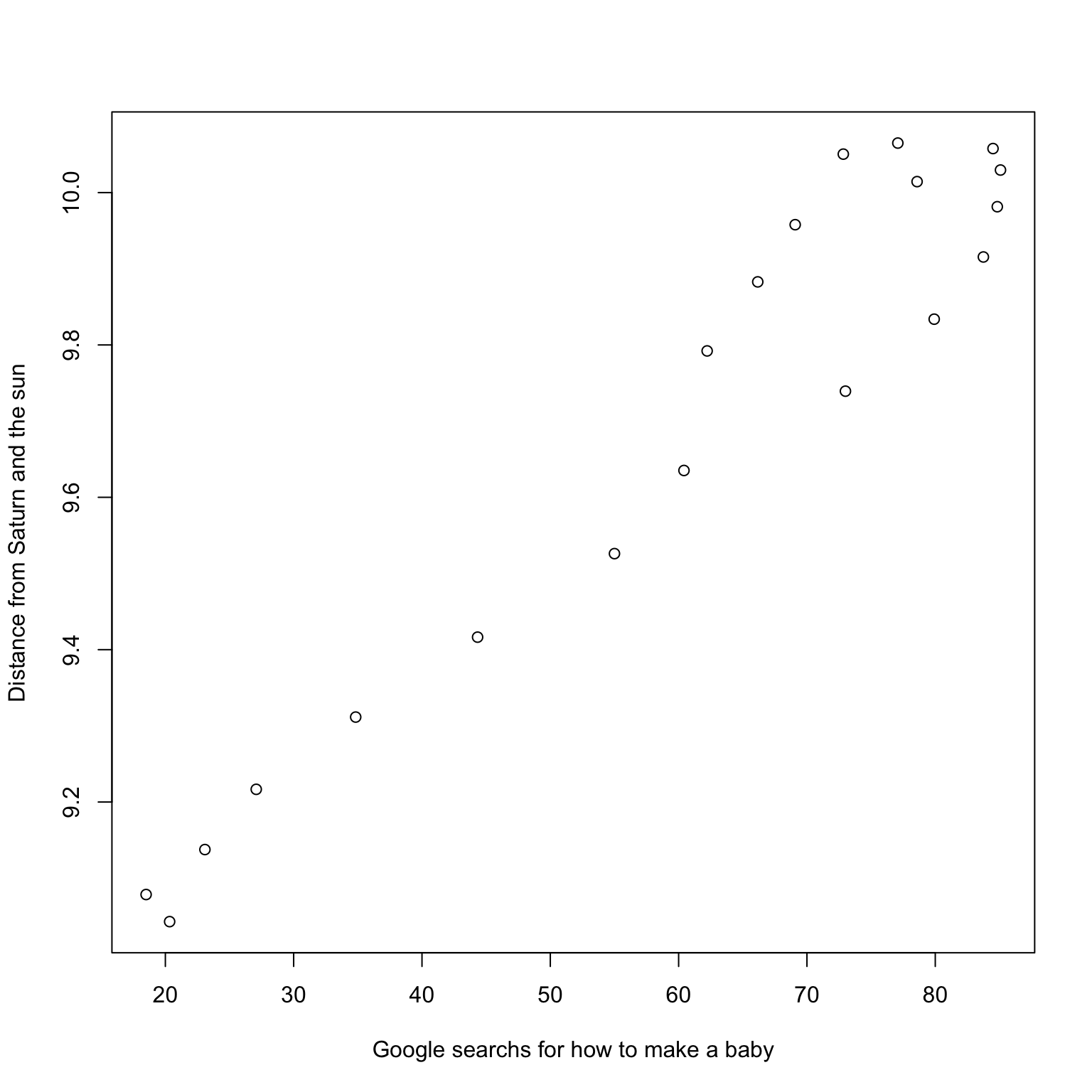

Scatter plot for Saturn and “How to make a baby”

Another fun one

Correlation and causation

- The last two examples were found by searching through many variables: they clearly demonstrate that correlation is not causation.

Example Shoe Size and Reading Ability

Within schools in a large school district, researchers collected students’ reading ability as measured by some standardized test. They also collected their shoe size.

Do we expect Shoe Size and Reading Ability to be uncorrelated? positively correlated? negatively correlated? Explain.

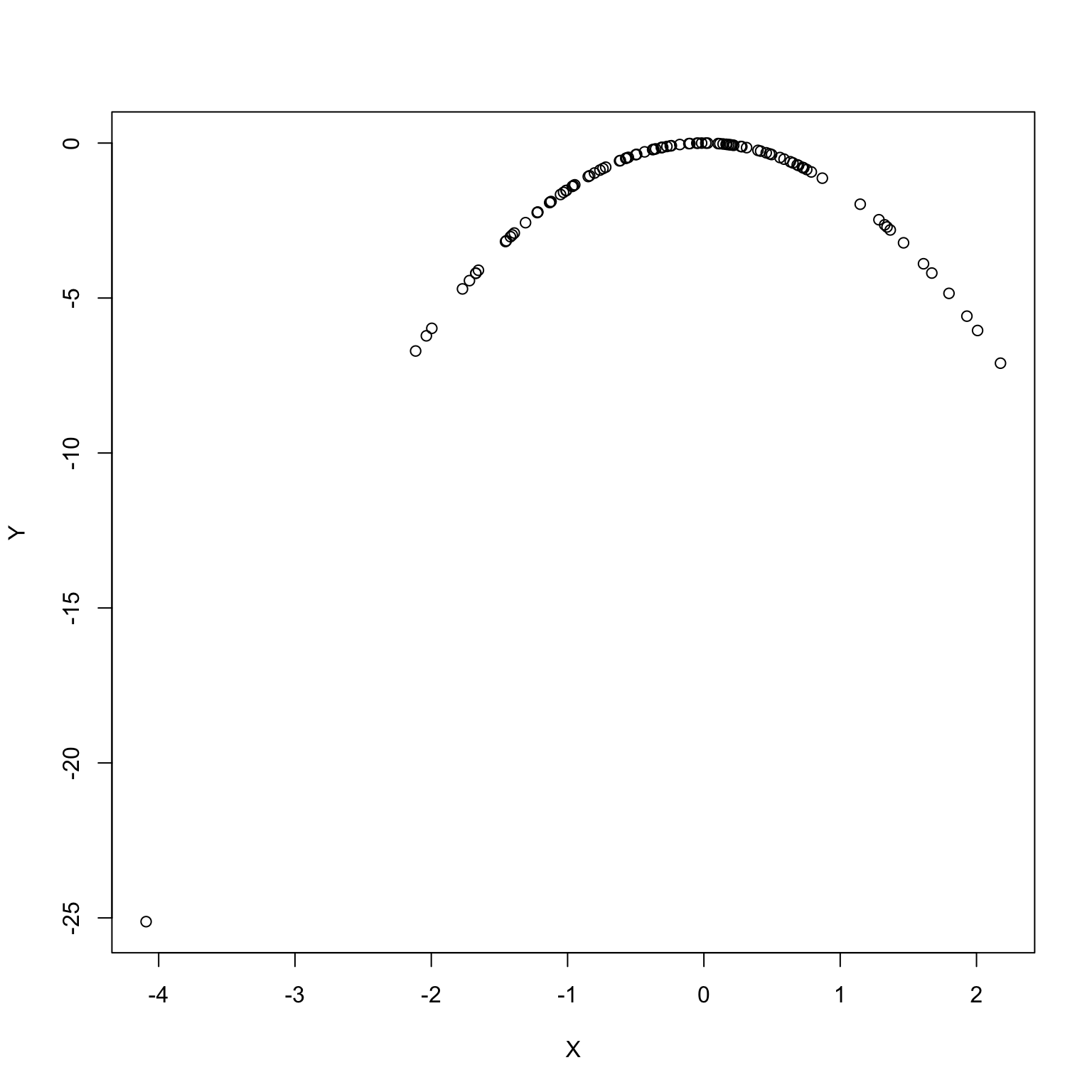

Correlation captures linear behavior

- Variables can be associated without being linearly associated.

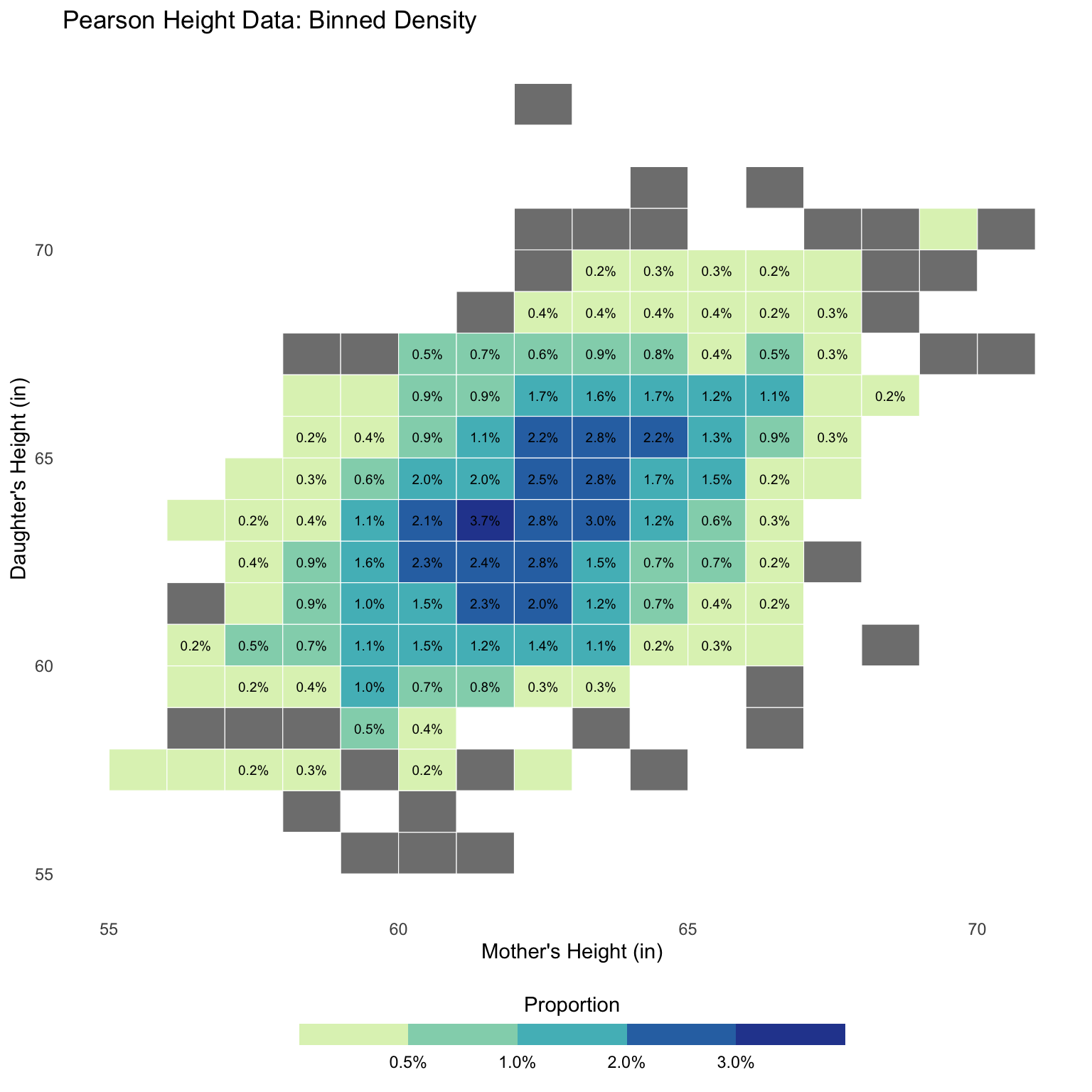

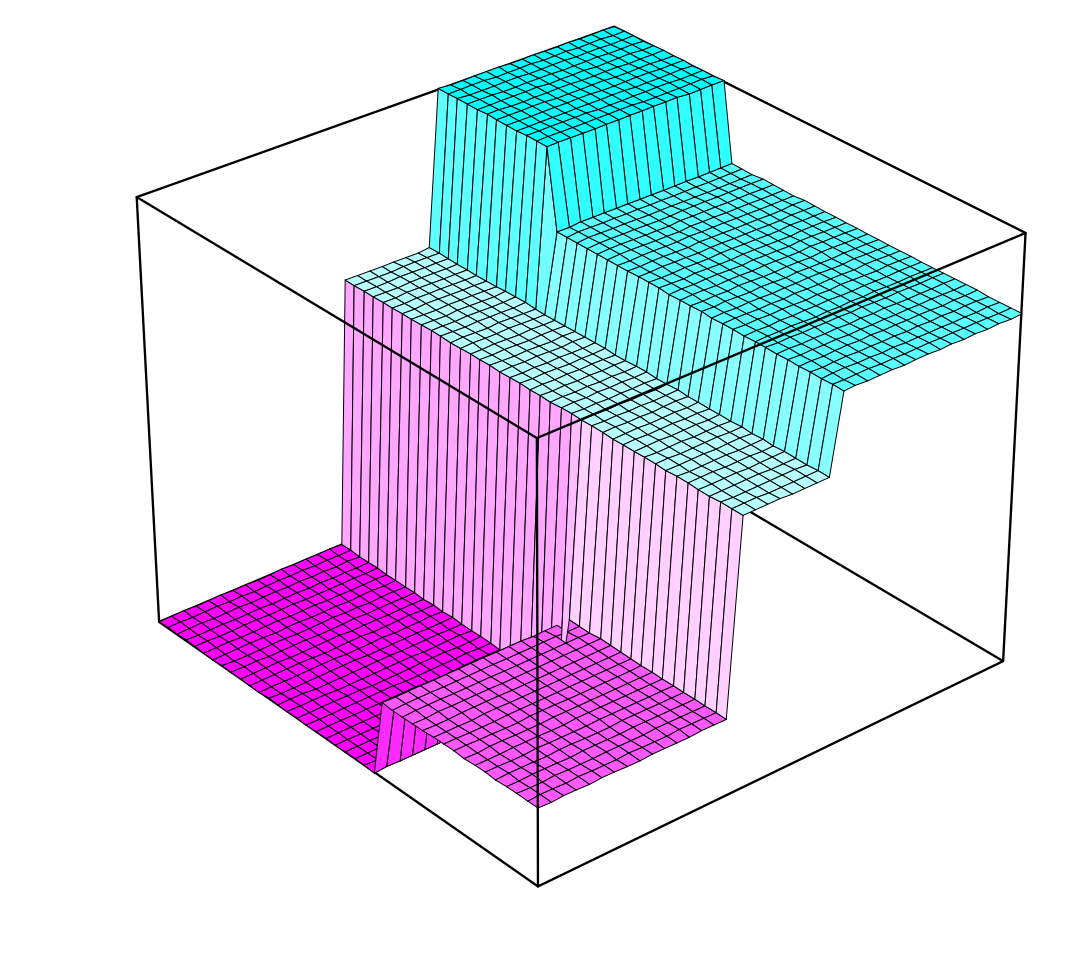
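A small made-up example makes the point concrete: below, \(Y\) is completely determined by \(X\) through \(Y = X^2\), yet the correlation is 0 because the relation is not linear. The helper `correlation` is a hypothetical name used only in this sketch.

```python
import math

def correlation(x, y):
    """Correlation coefficient via the sums-of-squares form."""
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    num = sum((a - mx) * (b - my) for a, b in zip(x, y))
    den = math.sqrt(sum((a - mx) ** 2 for a in x)) * math.sqrt(
        sum((b - my) ** 2 for b in y)
    )
    return num / den

x = [-3, -2, -1, 0, 1, 2, 3]
y = [v ** 2 for v in x]  # y depends on x exactly, but not linearly

r = correlation(x, y)
print(r)  # 0, up to floating-point rounding
```

The positive and negative halves of the parabola cancel in the sum of products, so \(r\) gives no hint of the strong association.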

Bivariate histogram

All of our numeric summaries so far can be computed on datasets OR histograms

Correlation is no different, but we need to define a bivariate histogram.

Bivariate histogram: breaking the plane into bins

Bivariate histogram: assign proportions to bins

Bivariate histogram: volumes vs. areas

Replace bars with columns

\(\implies\) volumes are proportions now…

Correlation of a (bivariate) histogram

Just like `mean`, `median`, `sd`, `quantile` we could compute `cor` from such a bivariate histogram…

We will not dwell on the details (multivariate calculus…)

Moral: yet again, a histogram captures a lot of interesting things about the data…
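As a rough sketch of the idea (not the calculus-based definition), the plane can be broken into rectangular bins, each bin assigned the proportion of points it contains, and a correlation computed by treating each bin's proportion as sitting at the bin midpoint. The function names, bin edges, and data below are all assumptions made for illustration.

```python
import math

def bin_index(value, edges):
    """Index of the half-open bin [edges[k], edges[k+1]) containing value, or None."""
    for k in range(len(edges) - 1):
        if edges[k] <= value < edges[k + 1]:
            return k
    return None

def bivariate_histogram(x, y, x_edges, y_edges):
    """Map each (i, j) bin to the proportion of points falling in it."""
    counts = {}
    for a, b in zip(x, y):
        i, j = bin_index(a, x_edges), bin_index(b, y_edges)
        if i is not None and j is not None:
            counts[(i, j)] = counts.get((i, j), 0) + 1
    total = sum(counts.values())
    return {key: c / total for key, c in counts.items()}

def correlation_from_histogram(props, x_edges, y_edges):
    """Approximate r using bin midpoints weighted by bin proportions."""
    def mid(edges, k):
        return (edges[k] + edges[k + 1]) / 2
    mx = sum(p * mid(x_edges, i) for (i, j), p in props.items())
    my = sum(p * mid(y_edges, j) for (i, j), p in props.items())
    cov = sum(p * (mid(x_edges, i) - mx) * (mid(y_edges, j) - my)
              for (i, j), p in props.items())
    vx = sum(p * (mid(x_edges, i) - mx) ** 2 for (i, j), p in props.items())
    vy = sum(p * (mid(y_edges, j) - my) ** 2 for (i, j), p in props.items())
    return cov / math.sqrt(vx * vy)

# Made-up data and bin edges, for illustration only.
x = [60, 62, 63, 65, 68, 61, 66, 64]
y = [61, 62, 65, 62, 69, 60, 67, 63]
x_edges = [60, 63, 66, 69]
y_edges = [60, 63, 66, 70]

props = bivariate_histogram(x, y, x_edges, y_edges)
r_hist = correlation_from_histogram(props, x_edges, y_edges)
print(props, r_hist)
```

Because binning discards the exact positions of the points, the value from the histogram only approximates the correlation of the raw data, and the approximation depends on the choice of bins.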

Summary

We introduced correlation, a unitless numerical summary of a scatterplot of \(X\) and \(Y\).

When the two variables \(X\) and \(Y\) are linearly related, their correlation quantifies the strength of this linear association.

Can be computed based on the standardized variables `scale(X)` and `scale(Y)`.