Tests and confidence intervals in regression

Outline

Regression refresher

Tests related to the slope in regression

Confidence interval for the slope in regression

Other examples of confidence intervals

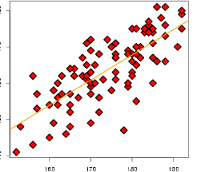

Mother & daughter data

Reminder: regression

Let \(X_i=\texttt{mother}_i\) and \(Y_i=\texttt{daughter}_i\):

The regression line is the line

\[ y = \hat{\beta}_0 + \hat{\beta}_1 * x \]

where \((\hat{\beta}_0, \hat{\beta}_1)\) are found by

\[ \text{minimize}_{\beta_0, \beta_1} \sum_{i=1}^n (Y_i - (\beta_0 + \beta_1 X_i))^2 \]
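The minimization above has a well-known closed-form solution. Here is a minimal Python sketch of it (the lecture itself works in R); the function name `least_squares` and the five height pairs are made up for illustration and are not the lecture's data.

```python
def least_squares(x, y):
    """Return (b0, b1) minimizing sum((y_i - (b0 + b1 * x_i)) ** 2)."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    # Slope: sum of cross-deviations divided by sum of squared x-deviations.
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = sxy / sxx
    # Intercept: the fitted line passes through (x_bar, y_bar).
    b0 = y_bar - b1 * x_bar
    return b0, b1


# Hypothetical heights in inches (illustration only).
mother = [60.0, 62.0, 64.0, 66.0, 68.0]
daughter = [61.5, 62.5, 64.0, 65.0, 66.5]

b0, b1 = least_squares(mother, daughter)
print(round(b0, 3), round(b1, 3))  # 23.9 0.625
```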

Best fitting line

A hypothesis test

- If a line captures the relationship between \(\texttt{mother}\) (\(X\)) and \(\texttt{daughter}\) (\(Y\)), we might ask: **Is the slope of the true regression line of \(Y\) on \(X\) (\(>,<,\neq\)) 0?**

What do we mean by true regression line?

- Just like our other testing problems, we need a model (what we’ve been calling a box) to make sense of this question.

Regression model

A model for how the \(\texttt{(mother, daughter)}\) pairs are generated:

A value for \(\texttt{mother}\) is drawn from \(\texttt{box}_{\texttt{mother}}\) (i.e. a distribution with its own probability histogram)

A value \(\epsilon\) for the error is drawn from a \(\texttt{box}_{\epsilon}\)

For some true intercept \(\beta_0\) and slope \(\beta_1\), the value for \(\texttt{daughter}\) is then:

\[\texttt{daughter} = \beta_0 + \beta_1 * \texttt{mother} + \epsilon\]

There are two boxes (distributions / probability histograms):

The box for \(\texttt{mother}\)

The box for \(\epsilon\)

There are two parameters:

\(\beta_0\): intercept

\(\beta_1\): slope
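To make the two-box model concrete, here is a small Python sketch that generates pairs from it. Everything numeric is an assumption for illustration: both boxes are taken to be normal (mother heights centered at 64 with SD 2.5, errors centered at 0 with SD 2), and the true parameters are set to \(\beta_0 = 30\), \(\beta_1 = 0.5\). The model itself does not require normal boxes.

```python
import random


def draw_pair(beta0, beta1, rng):
    """Draw one (mother, daughter) pair from the two-box regression model."""
    mother = rng.gauss(64.0, 2.5)   # assumed box for mother
    epsilon = rng.gauss(0.0, 2.0)   # assumed box for the error
    daughter = beta0 + beta1 * mother + epsilon
    return mother, daughter


rng = random.Random(0)  # fixed seed so the sketch is reproducible
pairs = [draw_pair(30.0, 0.5, rng) for _ in range(1000)]

# The average daughter height should be near beta0 + beta1 * 64 = 62.
mean_daughter = sum(d for _, d in pairs) / len(pairs)
print(round(mean_daughter, 2))
```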

A note on our regression model

This is not the only model for regression, but it’s (relatively) simple…

The important part of this model is the way \(\texttt{daughter}\) is generated after having fixed \(\texttt{mother}\): the mean (\(\beta_0 + \beta_1 * \texttt{mother}\)) plus an error independent of \(\texttt{mother}\).

Another model

Assume there is some big box (e.g. a population) of pairs of \((\texttt{mother}, \texttt{daughter})\) heights.

We draw a simple random sample from this population (which, for a large population, will be close to sampling with replacement).

For some populations our first model will still be reasonable.

- Since the scatterplot is football-shaped, the first model is a reasonable assumption for this data.

For other populations our model won’t be appropriate. This will mostly affect our estimate of \(\text{SE}(\hat{\beta}_1)\)…

Parameter estimates

Slope estimate

If our regression model is appropriate, then:

\(E[\hat{\beta}_1] = \beta_1\)

\(\text{SE}(\hat{\beta}_1) \approx \sqrt{\frac{1}{n} \frac{\texttt{MSE}(\texttt{box}_{\epsilon})}{\texttt{MSE}(\texttt{box}_{\texttt{mother}})}}\)

The probability histogram of \(\hat{\beta}_1\) is approximately a normal curve.
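The SE formula can be tried out on simulated data. The Python sketch below reads \(\texttt{MSE(box)}\) as the mean squared deviation from the box's mean (an assumption about the notation) and estimates \(\texttt{MSE}(\texttt{box}_{\epsilon})\) from the residuals and \(\texttt{MSE}(\texttt{box}_{\texttt{mother}})\) from the observed \(X\) values. The simulation settings (normal boxes, \(\beta_0 = 30\), \(\beta_1 = 0.5\), \(n = 500\)) are illustrative choices.

```python
import math
import random


def mean_sq_dev(values):
    """Mean squared deviation from the mean (divisor n)."""
    m = sum(values) / len(values)
    return sum((v - m) ** 2 for v in values) / len(values)


def slope_and_se(x, y):
    """Least-squares slope and its estimated SE from the formula above."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = sxy / sxx
    b0 = y_bar - b1 * x_bar
    residuals = [yi - (b0 + b1 * xi) for xi, yi in zip(x, y)]
    se = math.sqrt(mean_sq_dev(residuals) / (n * mean_sq_dev(x)))
    return b1, se


rng = random.Random(1)
x = [rng.gauss(64.0, 2.5) for _ in range(500)]            # assumed mother box
y = [30.0 + 0.5 * xi + rng.gauss(0.0, 2.0) for xi in x]   # assumed error box

b1, se = slope_and_se(x, y)
# With these settings the formula gives sqrt((1/500) * 4 / 6.25), about 0.036.
print(round(b1, 3), round(se, 4))
```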

Testing slope is 0

\[ Z = \frac{\hat{\beta}_1 - 0}{\text{SE}(\hat{\beta}_1)} \]

- To carry out the test, use the usual rules for one- and two-sided tests.
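A Python sketch of this \(Z\) test follows. The function `slope_z_test` and its `null_slope` argument are hypothetical names; the two-sided p-value uses the normal curve, and the data are simulated with an assumed true slope of 0.5. Setting `null_slope` to a value other than 0 gives the same test for a different hypothesized slope.

```python
import math
import random


def slope_z_test(x, y, null_slope=0.0):
    """Z score and two-sided normal p-value for H0: slope == null_slope."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    b0 = y_bar - b1 * x_bar
    # Estimated error MSE (divisor n), then SE of the slope.
    mse_eps = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y)) / n
    se = math.sqrt(mse_eps / sxx)  # same as sqrt((1/n) * mse_eps / MSE(x))
    z = (b1 - null_slope) / se
    p_two_sided = math.erfc(abs(z) / math.sqrt(2))  # P(|N(0,1)| > |z|)
    return z, p_two_sided


rng = random.Random(2)
x = [rng.gauss(64.0, 2.5) for _ in range(200)]            # assumed mother box
y = [30.0 + 0.5 * xi + rng.gauss(0.0, 2.0) for xi in x]   # assumed true slope 0.5

z0, p0 = slope_z_test(x, y)                    # H0: slope is 0
z1, p1 = slope_z_test(x, y, null_slope=1.0)    # H0: slope is 1
print(round(z0, 2), round(z1, 2))
```

For a one-sided alternative, the p-value would be the area in a single tail rather than both.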

Carrying out the test

- Most software packages will compute \(\text{SE}(\hat{\beta}_1)\)

Finding the \(Z\) score in the output of the model

Most software packages will also compute this \(Z\) score for you

Most software will refer to Student’s \(T\) distribution instead of standard normal… with \(n\) large this distinction is moot.

Sometimes, testing 0 isn’t of interest

- We might want to test the hypothesis \(\beta_1=1\):

\[ Z = \frac{\hat{\beta}_1 - 1}{\text{SE}(\hat{\beta}_1)} \]

- The usual rules for one- and two-sided tests apply.

Plausible values for \(\beta_1\)

- When there is no particular value of interest for the slope, it is common to summarize results with a confidence interval

95% confidence interval

\[ CI_{0.95} = \hat{\beta}_1 \pm 2 * \text{SE}(\hat{\beta}_1) \]

- This is an interval of plausible values for the true parameter \(\beta_1\): captures our estimate of the parameter and its (estimated) variability.

Confidence intervals are random

The interval \(CI_{0.95}\) is random: different samples will give different intervals…

Let’s make some data for which our model holds:

- We can compute confidence intervals using \(\texttt{confint}\) for a randomly generated dataset

Illustration of confidence intervals

- Let’s create 100 data sets from the same model and look at the confidence intervals…
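The experiment can be sketched in Python as follows (the lecture does this in R). The true parameters, the normal boxes, and the sample size of 50 are assumptions chosen for illustration; each interval is \(\hat{\beta}_1 \pm 2 * \text{SE}(\hat{\beta}_1)\) with the SE estimated from that data set.

```python
import math
import random

TRUE_BETA0, TRUE_BETA1 = 30.0, 0.5  # assumed true parameters


def simulate(n, rng):
    """Generate one data set of size n from the regression model."""
    x = [rng.gauss(64.0, 2.5) for _ in range(n)]
    y = [TRUE_BETA0 + TRUE_BETA1 * xi + rng.gauss(0.0, 2.0) for xi in x]
    return x, y


def slope_ci95(x, y):
    """Slope estimate plus or minus 2 estimated SEs."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    b0 = y_bar - b1 * x_bar
    mse_eps = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y)) / n
    se = math.sqrt(mse_eps / sxx)
    return b1 - 2 * se, b1 + 2 * se


rng = random.Random(2024)
covered = 0
for _ in range(100):
    x, y = simulate(50, rng)
    lo, hi = slope_ci95(x, y)
    covered += lo <= TRUE_BETA1 <= hi  # True counts as 1

print(covered, "of 100 intervals contain the true slope")
```

Each interval differs from the next, and roughly 95 of the 100 should contain the true slope; the exact count depends on the random draws.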

Interpretation of confidence intervals

Assuming our regression model holds, there is a true (unknown) slope \(\beta_1\)

For every \(CI_{0.95}\) we can ask whether \(\beta_1\) is in the interval: this is an event, so we can compute its probability

\[ P(\beta_1 \in CI_{0.95}) = P(\beta_1 \in \hat{\beta}_1 \pm 2 * \text{SE}(\hat{\beta}_1)) \approx 95\%. \]

Relation to 2 SE rule

- Our 2 SE rule says that

\[ P(\hat{\beta}_1 \in \beta_1 \pm 2 * \text{SE}(\hat{\beta}_1)) \approx 95\% \]

Almost the same statement, but here the interval is not random.

Note: we are blurring the lines a little here between true SE and our estimate of SE…

Relationship between confidence intervals and tests

If you know the 95% confidence interval for \(\beta_1\), it is easy to check whether you would reject the hypothesis that the slope is 1, or any other value \(V\).

If \(V\) is in \(CI_{0.95}\), then we do not reject the null hypothesis that the slope is \(V\) at level 5%; if \(V\) is outside the interval, we reject it.

Said differently, the interval \(CI_{0.95}\) consists of all the values we would fail to reject in a two-sided test at level 5%.
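This correspondence is easy to check numerically. The Python sketch below uses a hypothetical slope estimate of 0.54 with SE 0.03 (made-up numbers, not from the lecture's data) and the same cutoff of 2 as the 2 SE rule, for a two-sided test.

```python
def ci95(estimate, se):
    """Estimate plus or minus 2 SEs."""
    return estimate - 2 * se, estimate + 2 * se


def rejects_at_5pct(estimate, se, v):
    """Two-sided test of H0: parameter == v, rejecting when |Z| > 2."""
    return abs((estimate - v) / se) > 2


estimate, se = 0.54, 0.03  # hypothetical values for illustration
lo, hi = ci95(estimate, se)

checks = []
for v in [0.0, 0.45, 0.5, 0.58, 1.0]:
    inside = lo <= v <= hi
    rejected = rejects_at_5pct(estimate, se, v)
    checks.append((v, inside, rejected))
    print(v, "inside CI" if inside else "outside CI",
          "rejected" if rejected else "not rejected")
```

Every candidate value inside the interval is not rejected, and every value outside it is rejected.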

Confidence intervals for mean(box)

- Going back to our first testing problems, we can compute a 95% CI for \(\texttt{mean(box)}\):

\[ CI_{0.95} = \bar{X} \pm 2 * \sqrt{\texttt{MSE(box)}/n} \]
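A short Python sketch of this interval follows. Since \(\texttt{MSE(box)}\) is unknown in practice, the sketch estimates it by the mean squared deviation of the sample (an assumption about how the formula is applied); the five sample values are made up for illustration.

```python
import math


def mean_ci95(sample):
    """Sample mean plus or minus 2 * sqrt(estimated MSE(box) / n)."""
    n = len(sample)
    x_bar = sum(sample) / n
    mse = sum((v - x_bar) ** 2 for v in sample) / n  # estimate of MSE(box)
    se = math.sqrt(mse / n)
    return x_bar - 2 * se, x_bar + 2 * se


sample = [5.0, 6.0, 7.0, 6.0, 6.0]  # hypothetical draws from the box
lo, hi = mean_ci95(sample)
print(round(lo, 3), round(hi, 3))  # 5.434 6.566
```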

Example: athletic training program

Group A:

40 athletes: data is stored in \(\texttt{groupA}\) in R

\(\texttt{mean(groupA)} = 6.1\)

\(\texttt{sd(groupA)} = 1.2\)

Group B:

50 athletes: data is stored in \(\texttt{groupB}\) in R

\(\texttt{mean(groupB)} = 6.3\)

\(\texttt{sd(groupB)} = 1.3\)

Confidence intervals for mean(boxA) - mean(boxB)
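Using the summary statistics for the two groups (means 6.1 and 6.3, SDs 1.2 and 1.3, sizes 40 and 50), the interval can be worked out as in the Python sketch below. It assumes the two groups are independent samples and uses the usual two-sample standard error \(\sqrt{\text{SD}_A^2/n_A + \text{SD}_B^2/n_B}\), with each reported SD standing in for \(\sqrt{\texttt{MSE(box)}}\). Both the formula and that substitution are assumptions here.

```python
import math


def diff_ci95(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """95% CI for mean(boxA) - mean(boxB), assuming independent samples."""
    diff = mean_a - mean_b
    se = math.sqrt(sd_a ** 2 / n_a + sd_b ** 2 / n_b)
    return diff - 2 * se, diff + 2 * se


lo, hi = diff_ci95(6.1, 1.2, 40, 6.3, 1.3, 50)
print(round(lo, 3), round(hi, 3))  # -0.728 0.328
```

Under these assumptions the interval contains 0, so a two-sided test at level 5% would not reject the hypothesis that the two boxes have the same mean.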

General form of 95% confidence interval

- We saw several tests with \(Z\) scores

\[ Z = \frac{\texttt{observed test statistic} - E_{\texttt{null}}[\texttt{test statistic}]}{\text{SE}(\texttt{test statistic})} \]

- There is often a corresponding 95% confidence region:

\[ \texttt{observed test statistic} \pm 2 * \text{SE}(\texttt{test statistic}). \]