TurtleBot 2 – Autonomous Robot

MIE433: Mechatronics Systems Design and Integration - University of Toronto

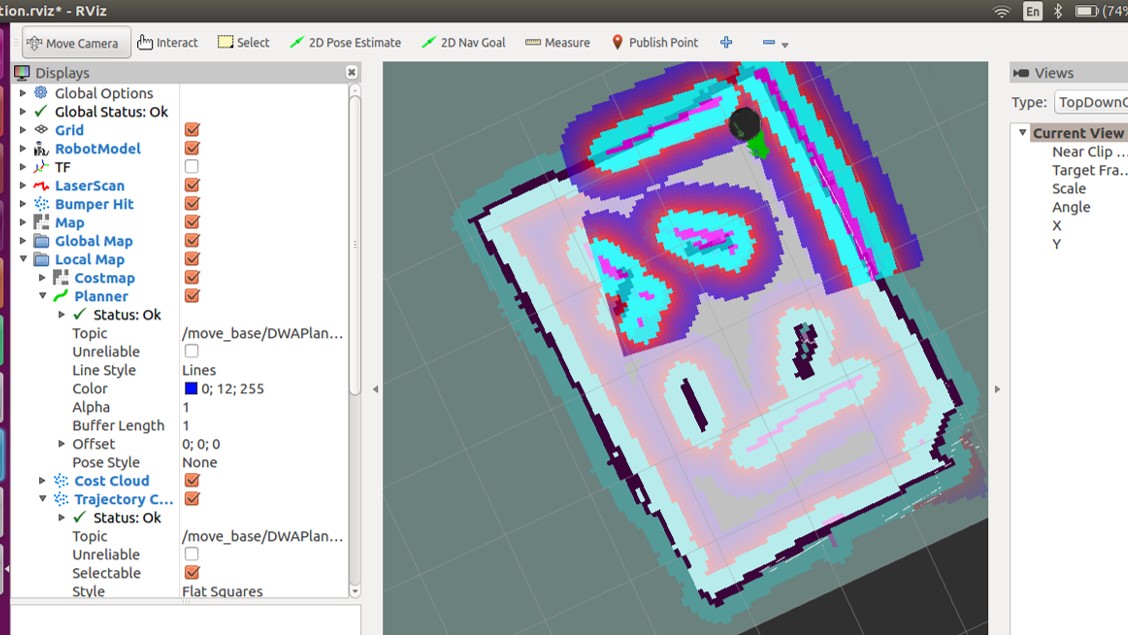

To demonstrate an understanding of mechatronics design principles, I worked with a TurtleBot 2 platform. It uses a Kobuki base (similar to a Roomba) for locomotion and a Kinect RGB-D camera for vision. The robot had to autonomously explore and map its environment, plan the shortest route to 5 targets, and correctly identify the image on each target. The project focused on robot localization, mapping, navigation, path planning, image processing, and computer vision.
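Visiting 5 targets along the shortest route is a small instance of the travelling-salesman problem. With only 5 targets there are 5! = 120 possible visiting orders, so an exhaustive search is cheap. The sketch below is illustrative and is not the project's actual planner. It makes three assumptions:

- The target positions are already known in the map frame.
- Straight-line distance stands in for travel cost. In practice the cost would more likely come from the navigation stack's planned path lengths.
- The robot does not need to return to its start.

The names `Point`, `Tour`, `travelCost`, and `shortestVisitOrder` are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <limits>
#include <numeric>
#include <vector>

// Hypothetical target position in the map frame (metres).
struct Point {
    double x;
    double y;
};

// Straight-line distance: an assumed stand-in for the true planned path cost.
double travelCost(const Point& a, const Point& b) {
    return std::hypot(a.x - b.x, a.y - b.y);
}

struct Tour {
    std::vector<int> order;  // indices into the target list, in visiting order
    double length;           // total cost of the open path from the start
};

// Exhaustive search over every visiting order. For 5 targets this is only
// 5! = 120 candidate orders, so no heuristic is needed.
Tour shortestVisitOrder(const Point& start, const std::vector<Point>& targets) {
    assert(targets.size() <= 9 && "brute force grows as n!");
    std::vector<int> order(targets.size());
    std::iota(order.begin(), order.end(), 0);  // sorted start, as next_permutation requires
    Tour best{order, std::numeric_limits<double>::infinity()};
    do {
        double length = 0.0;
        Point current = start;
        for (int idx : order) {
            length += travelCost(current, targets[idx]);
            current = targets[idx];
        }
        if (length < best.length) {
            best.order = order;
            best.length = length;
        }
    } while (std::next_permutation(order.begin(), order.end()));
    return best;
}
```

As an example, suppose five targets lie on a line at x = 2, 4, 1, 3, 5 and the robot starts at the origin. The search returns the order 2, 0, 3, 1, 4 with a total length of 5. For many more targets, a heuristic such as nearest-neighbour with 2-opt refinement would replace the factorial search.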

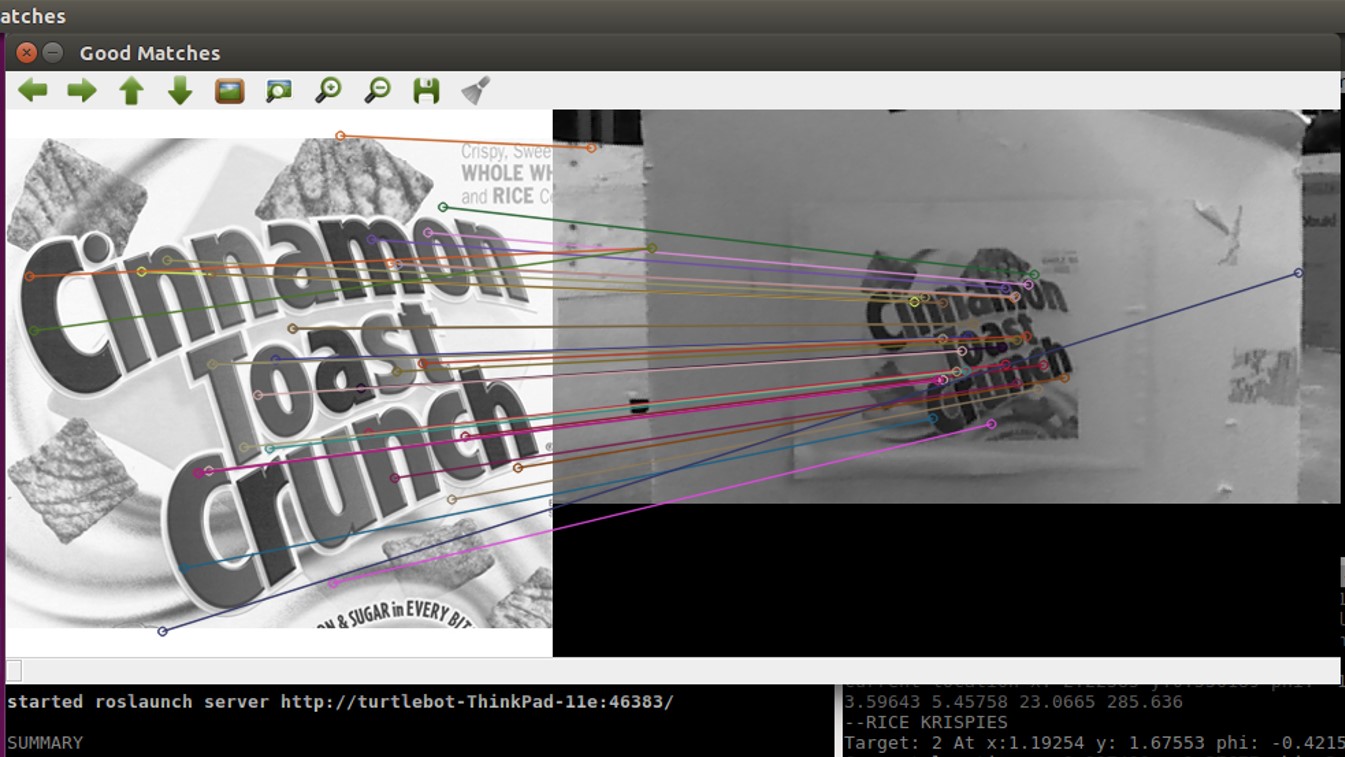

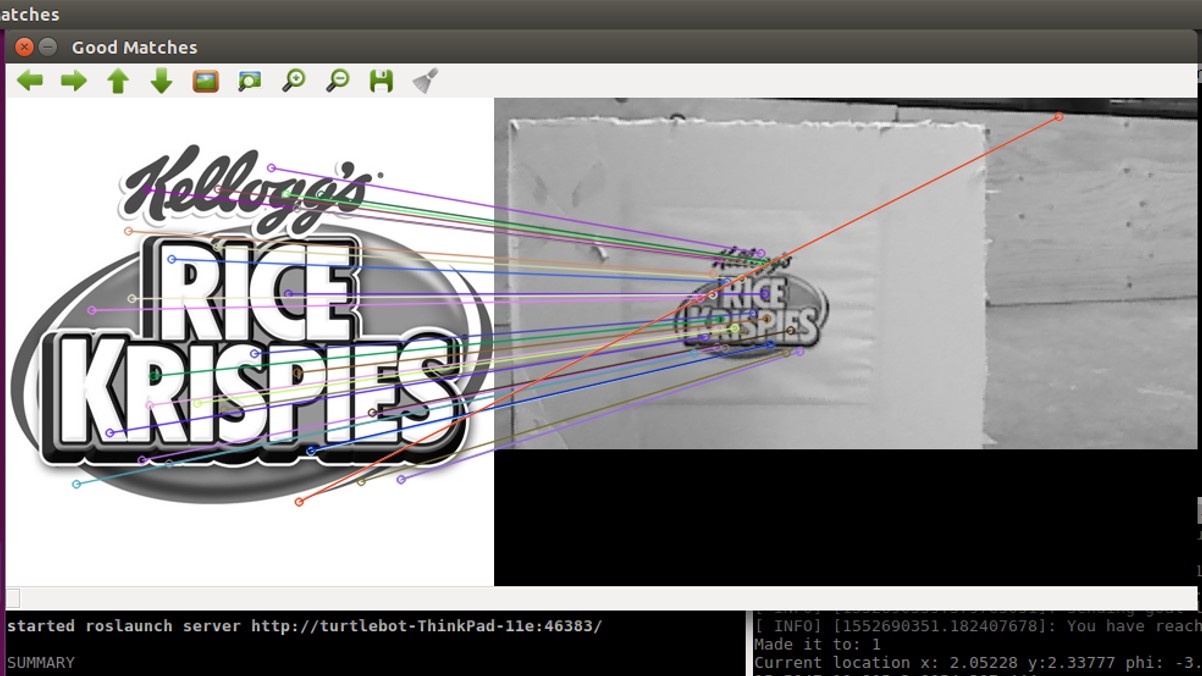

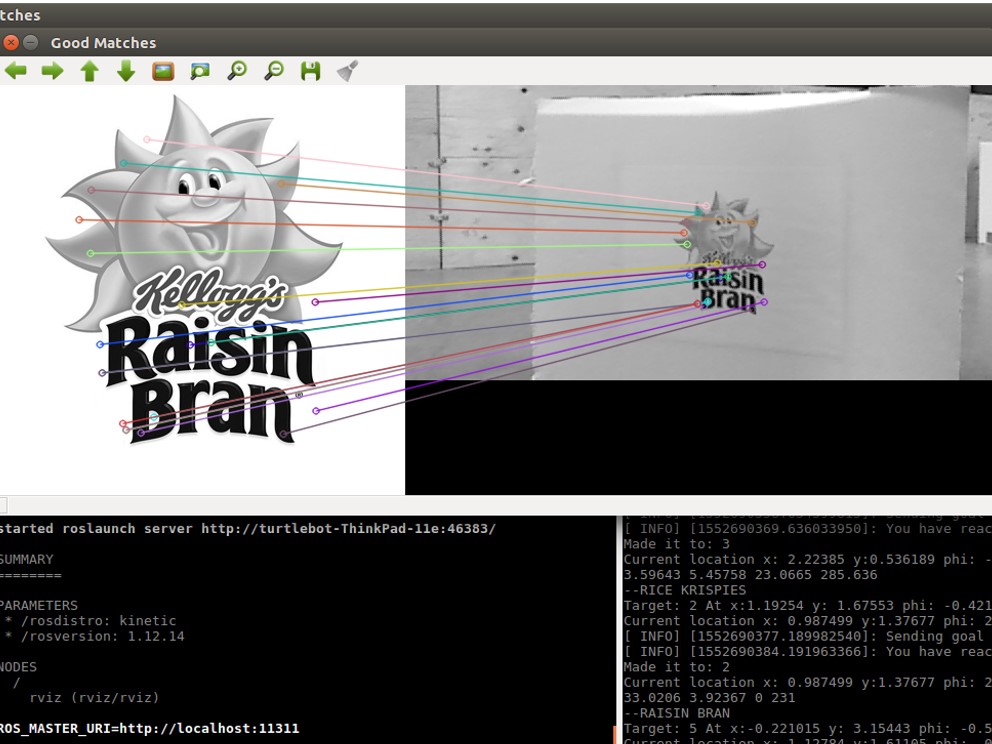

Image recognition used a two-stage pipeline. First, each frame of the video feed was pre-processed: environmental noise was removed, the frame was cropped to the region of interest, and filters were applied to enhance the relevant features. Then SURF (Speeded-Up Robust Features) matching with empirically determined thresholds was run on the pre-processed feed. This let the robot recognize the images with 100% accuracy.
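SURF keypoint detection and description are available in OpenCV's contrib module as `cv::xfeatures2d::SURF`. The sketch below covers only the decision step that follows: given descriptors already extracted from a frame, it picks which target image the frame shows. The original thresholds are not stated, so two common choices stand in for them here:

- Lowe's ratio test on nearest-neighbour matches.
- A minimum number of good matches a template must reach before it is accepted.

Treat these as assumptions. The names `countGoodMatches` and `recognise`, and the example values `ratio = 0.7` and `minMatches = 2`, are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <limits>
#include <vector>

using Descriptor = std::vector<float>;  // e.g. a 64-element SURF descriptor

float squaredDistance(const Descriptor& a, const Descriptor& b) {
    assert(a.size() == b.size());
    float sum = 0.0f;
    for (std::size_t i = 0; i < a.size(); ++i) {
        float d = a[i] - b[i];
        sum += d * d;
    }
    return sum;
}

// Counts scene descriptors whose nearest template descriptor passes Lowe's
// ratio test: best distance < ratio * second-best distance. Squared distances
// are compared against ratio^2, which is equivalent for non-negative values.
int countGoodMatches(const std::vector<Descriptor>& scene,
                     const std::vector<Descriptor>& templ, float ratio) {
    if (templ.size() < 2) return 0;  // the ratio test needs two neighbours
    const float inf = std::numeric_limits<float>::infinity();
    int good = 0;
    for (const Descriptor& s : scene) {
        float best = inf;
        float second = inf;
        for (const Descriptor& t : templ) {
            float d = squaredDistance(s, t);
            if (d < best) {
                second = best;
                best = d;
            } else if (d < second) {
                second = d;
            }
        }
        if (best < ratio * ratio * second) ++good;
    }
    return good;
}

// Returns the index of the template with the most good matches, or -1 when
// no template reaches the (hypothetical) minMatches threshold.
int recognise(const std::vector<Descriptor>& scene,
              const std::vector<std::vector<Descriptor>>& templates,
              float ratio, int minMatches) {
    int bestIdx = -1;
    int bestCount = minMatches - 1;  // a template must reach minMatches to win
    for (std::size_t i = 0; i < templates.size(); ++i) {
        int count = countGoodMatches(scene, templates[i], ratio);
        if (count > bestCount) {
            bestCount = count;
            bestIdx = static_cast<int>(i);
        }
    }
    return bestIdx;
}
```

The ratio test rejects ambiguous matches, where two template features are almost equally close. The match-count threshold lets the robot answer "no known target" instead of guessing. Tuning both on recorded frames corresponds to the kind of empirical thresholding described above.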

Contributions

- Wrote C++ modules implementing SURF-based image recognition

- Designed an image processing pipeline that raised recognition accuracy to 100%

- Improved navigation using SLAM in ROS
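The exact filters in the pre-processing pipeline are not listed. The sketch below shows one plausible version of the crop-and-filter stage under three assumptions:

- Frames arrive as 8-bit grayscale.
- Noise is suppressed with a 3x3 median filter.
- Features are enhanced with a linear contrast stretch.

The `GrayImage` type and the function names are hypothetical. In practice, OpenCV's `cv::medianBlur` and `cv::normalize` cover the same operations.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical 8-bit grayscale image stored row-major.
struct GrayImage {
    int width = 0;
    int height = 0;
    std::vector<std::uint8_t> pixels;  // size == width * height

    std::uint8_t at(int x, int y) const {
        return pixels[static_cast<std::size_t>(y) * width + x];
    }
};

// Copies the region of interest [x0, x0 + w) x [y0, y0 + h) out of the frame.
GrayImage crop(const GrayImage& src, int x0, int y0, int w, int h) {
    assert(x0 >= 0 && y0 >= 0 && x0 + w <= src.width && y0 + h <= src.height);
    GrayImage out{w, h, std::vector<std::uint8_t>(static_cast<std::size_t>(w) * h)};
    for (int y = 0; y < h; ++y)
        for (int x = 0; x < w; ++x)
            out.pixels[static_cast<std::size_t>(y) * w + x] = src.at(x0 + x, y0 + y);
    return out;
}

// 3x3 median filter to remove speckle noise; border pixels are copied unchanged.
GrayImage median3x3(const GrayImage& src) {
    GrayImage out = src;
    for (int y = 1; y + 1 < src.height; ++y) {
        for (int x = 1; x + 1 < src.width; ++x) {
            std::array<std::uint8_t, 9> window{};
            int k = 0;
            for (int dy = -1; dy <= 1; ++dy)
                for (int dx = -1; dx <= 1; ++dx)
                    window[k++] = src.at(x + dx, y + dy);
            std::nth_element(window.begin(), window.begin() + 4, window.end());
            out.pixels[static_cast<std::size_t>(y) * src.width + x] = window[4];
        }
    }
    return out;
}

// Linear contrast stretch: darkest pixel -> 0, brightest pixel -> 255.
void stretchContrast(GrayImage& img) {
    if (img.pixels.empty()) return;
    auto range = std::minmax_element(img.pixels.begin(), img.pixels.end());
    int minV = *range.first;
    int maxV = *range.second;
    if (maxV == minV) return;  // flat image: nothing to stretch
    for (auto& p : img.pixels)
        p = static_cast<std::uint8_t>((p - minV) * 255 / (maxV - minV));
}
```

Running the median filter before cropping keeps real neighbours at the crop border. Stretching contrast last means the full 0 to 255 range is spent on the region of interest rather than on the whole frame.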

Skills

- Programming in C++

- Working in a UNIX environment

- Robot Operating System (ROS)

- Simulation in Gazebo

- Computer vision

- Image processing

Check out a demo of the robot's image recognition at work here.