Our first example will be colorblindness, which we study in conjunction with gender. But to begin with, we need one new concept.

Suppose that you have to guess the suit of a playing card drawn from a standard 52-card pack at random; your probability of guessing correctly is $1/4$.

Now suppose I tell you that it is red. You then have a $1/2$ chance: because there are fewer choices, you can use the additional information to restrict the space of possible outcomes.
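The card example can be checked by brute-force counting. The following is a minimal Python sketch, assuming a standard 52-card pack and a guesser who always names one fixed suit (hearts here); the helper name `prob_card` and the event functions are made up for illustration.

```python
from fractions import Fraction
from itertools import product

SUITS = ["hearts", "diamonds", "clubs", "spades"]
RED_SUITS = {"hearts", "diamonds"}
RANKS = range(1, 14)

# Sample space: all 52 (rank, suit) pairs, each equally likely.
deck = list(product(RANKS, SUITS))

def prob_card(event, given=lambda card: True):
    """P(event | given), computed by counting equally likely cards."""
    restricted = [card for card in deck if given(card)]
    favourable = [card for card in restricted if event(card)]
    return Fraction(len(favourable), len(restricted))

def is_heart(card):
    return card[1] == "hearts"

def is_red(card):
    return card[1] in RED_SUITS

# Guessing "hearts" with no information, then after being told "red".
p_guess = prob_card(is_heart)
p_guess_given_red = prob_card(is_heart, given=is_red)
print(p_guess, p_guess_given_red)
```

Conditioning is implemented here as nothing more than shrinking the list of possible cards before counting, which mirrors the idea of restricting the space of possible outcomes.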

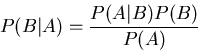

We write the probability of A given that A happened

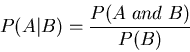

as the probability of A given B: ![]() .

.

Definition of the conditional probability of $A$ given $B$:

$$P(A \mid B) = \frac{P(A \cap B)}{P(B)}, \qquad P(B) > 0.$$

Intuitive definition: if knowing that another event B occurs does not affect the probability of the event A occurring, then A and B are independent.

As a formula:

$$P(A \mid B) = P(A)$$

The only inequality that is always true is

Example of how a conditional probability can be either bigger or smaller than the unconditional one:

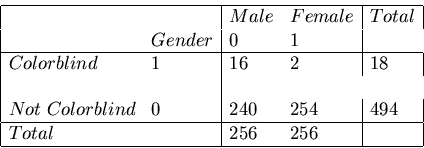

Types and description and statistics

Study the world of the colorblind

We rounded the numbers for a large group of people tested in the US (results vary from region to region in the world; there is an island in Micronesia where most people are color blind).

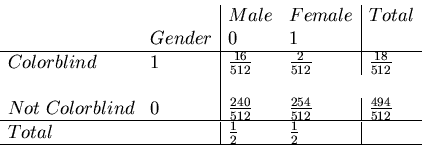

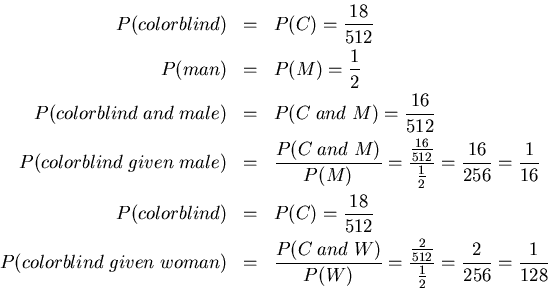

For colorblindness, consider the binary random variables associated with color blindness and gender (0 if male, 1 if female); these are called indicator variables. We can tabulate the probabilities of all 4 possible pairs of outcomes as:

When $A$ and $B$ are not independent, sometimes we have $P(A \mid B) > P(A)$, and sometimes we have $P(A \mid B) < P(A)$.

When two events are independent the probability of them both occurring is just the product of their probabilities.

The probability of throwing a double three with two dice is the probability of throwing a three with the first die and a three with the second die. There is one favorable outcome out of six for the first die and one out of six for the second; therefore the probability is (1/6) * (1/6) = 1/36.

The two events are independent, since whatever happens to the first die cannot affect the throw of the second; the probabilities are therefore multiplied, giving 1/36.
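The double-three calculation can be verified by listing all 36 equally likely outcomes of two dice. This is a small Python sketch, assuming fair six-sided dice; the helper name `prob_dice` is hypothetical.

```python
from fractions import Fraction
from itertools import product

# All 36 ordered pairs (first die, second die), each equally likely.
outcomes = list(product(range(1, 7), repeat=2))

def prob_dice(event):
    """Probability of an event, by counting outcomes that satisfy it."""
    hits = sum(1 for outcome in outcomes if event(outcome))
    return Fraction(hits, len(outcomes))

p_first_three = prob_dice(lambda o: o[0] == 3)
p_second_three = prob_dice(lambda o: o[1] == 3)
p_double_three = prob_dice(lambda o: o == (3, 3))

# Independence: the joint probability equals the product of the two.
print(p_first_three, p_second_three, p_double_three)
print(p_double_three == p_first_three * p_second_three)
```

Counting the joint event directly and comparing it with the product is a quick way to check independence for any pair of events on a small sample space.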

Definition: Two events $A$ and $B$ are said to be independent if

$$P(A \cap B) = P(A)\,P(B).$$

Examples:

We draw two cards one at a time from a shuffled deck of 52 cards.

In class we looked at the following two events:

We saw that

Usually it is quite useful when two variables are not independent, because we can predict one from another.

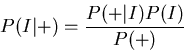

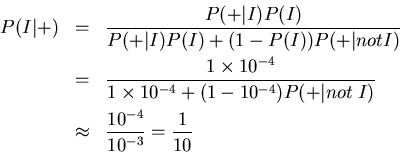

I is the set of people ill with the disease.

(+) is the set of people who test positive for the disease.

There is a test for this disease which has no false negatives: if you have the disease, you will test positive, $P(+ \mid I) = 1$. However, there are occasionally false positives: 1 person in 1000 who doesn't have the disease (is healthy, H) will test positive anyway, $P(+ \mid H) = 0.001$.

We want to know the probability that someone who has a positive test is actually ill.

Let's replace B in Bayes' rule with "is ill" (I) and A with "tests positive" (+). Then

$$P(I \mid +) = \frac{P(+ \mid I)\,P(I)}{P(+)}.$$

| Prediction | True state: Ill (disease) | True state: Not ill |
|---|---|---|
| (+) | | Error = False Positive |
| (-) | Error = False Negative | |
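To make the disease-test calculation concrete, here is a minimal Python sketch of Bayes' rule for this test. The rates $P(+ \mid I) = 1$ and $P(+ \mid H) = 1/1000$ come from the description above; the prevalence of 1 in 10,000 is purely an assumed value for illustration (the notes do not state one here), and the function name `posterior_ill` is hypothetical.

```python
from fractions import Fraction

def posterior_ill(prevalence,
                  p_pos_given_ill=Fraction(1),
                  p_pos_given_healthy=Fraction(1, 1000)):
    """P(I | +) from Bayes' rule.

    P(+) is expanded with the law of total probability:
    P(+) = P(+ | I) P(I) + P(+ | H) P(H), where P(H) = 1 - P(I).
    """
    p_ill = prevalence
    p_healthy = 1 - prevalence
    p_pos = p_pos_given_ill * p_ill + p_pos_given_healthy * p_healthy
    return p_pos_given_ill * p_ill / p_pos

# ASSUMPTION: a prevalence of 1 in 10,000, chosen only for illustration.
assumed_prevalence = Fraction(1, 10_000)
posterior = posterior_ill(assumed_prevalence)
print(posterior, float(posterior))
```

Under this assumed prevalence, most positive tests come from healthy people, even though the test never misses a true case; changing `assumed_prevalence` shows how strongly the answer depends on how rare the disease is.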

The probability of seeing such a high value for the $\chi^2$ statistic is tiny (much smaller than ![]()), so we can safely reject the null hypothesis of independence between hair color and eye color.
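For readers who want to see how a statistic of this kind is computed, here is a short Python sketch of the Pearson chi-square statistic for a contingency table, using the usual expected counts (row total times column total, divided by the grand total). The counts below are hypothetical and are not the hair and eye color data; the function name `chi_square_statistic` is also an assumption for illustration.

```python
def chi_square_statistic(table):
    """Pearson chi-square statistic for a table of observed counts.

    Under independence, the expected count in cell (i, j) is
    row_total[i] * col_total[j] / grand_total.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / grand_total
            statistic += (observed - expected) ** 2 / expected
    return statistic

# HYPOTHETICAL 2 x 2 table of counts, for illustration only.
hypothetical_counts = [[10, 20],
                       [20, 10]]
chi2 = chi_square_statistic(hypothetical_counts)

# Degrees of freedom: (rows - 1) * (columns - 1).
dof = (len(hypothetical_counts) - 1) * (len(hypothetical_counts[0]) - 1)
print(chi2, dof)
```

The statistic is then compared with the chi-square distribution with `dof` degrees of freedom; a value far out in the tail corresponds to the tiny probability mentioned above.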

| Sex | Age | POISON | GAS | HANG | DROWN | GUN | JUMP |
|---|---|---|---|---|---|---|---|
| M | 10-20 | 1160 | 335 | 1524 | 67 | 512 | 189 |
| M | 25-35 | 2823 | 883 | 2751 | 213 | 852 | 366 |
| M | 40-50 | 2465 | 625 | 3936 | 247 | 875 | 244 |
| M | 55-65 | 1531 | 201 | 3581 | 207 | 477 | 273 |
| M | 70-90 | 938 | 45 | 2948 | 212 | 229 | 268 |
| F | 10-20 | 921 | 40 | 212 | 30 | 25 | 131 |
| F | 25-35 | 1672 | 113 | 575 | 139 | 64 | 276 |
| F | 40-50 | 2224 | 91 | 1481 | 354 | 52 | 327 |
| F | 55-65 | 2283 | 45 | 2014 | 679 | 29 | 388 |
| F | 70-90 | 1548 | 29 | 1355 | 501 | 3 | 383 |

Want to play by chunks of a thousand?