Project Description

Purpose

The treatment of patients with complex facial and neck trauma is one

of the most challenging multidisciplinary tasks in surgery. Simulation

technology based on 3D data of an individual patient will have a

critical impact on surgical planning and training. Repair of

maxillofacial fractures involves aligning fragments of bone with

accuracy so that aesthetics and function are restored. Surgery is

often lengthy and hindered by inability to fully view the fractures

from all angles due to anatomic difficulties such as muscular

attachments, vascular supply and critical nerve supply.

On-site and remotely accessible virtual environments capable of

simulating interactions with patient-specific anatomy will allow

surgeons to plan and rehearse operations and to retrain skills for

infrequent procedures. Selection of type of plate, its length,

alignment and screw length is currently done intraoperatively and

several alternatives might need to be considered in the operating

room. The ability to shift these decisions to a pre-operative planning

stage would decrease the length of surgery and improve confidence in

the accuracy of repair.

Commercially available maxillofacial surgery planning software allows

for interactive realignment of fractures based on segmented,

pre-operative CT images, but is limited to the interactions of

keyboard and mouse. This limited ability to control orientation of the

bone fragments and lack of force feedback on contact lead to low

perceived confidence of the resulting surgical plan.

Our goal is to overcome the limitations of current software by

designing and implementing a haptics-enabled maxillofacial surgery

rehearsal environment that requires little training and provides a

direct high-fidelity immersive experience for the operator. The system

would support six-degree-of-freedom (6-DOF) haptic interaction for

bone fragments and plate alignment for pre-surgical planning to treat

mandibular fractures. Our aim is to evolve a design of real utility to

surgeons and, at the same time, ensure that it can be realized in

implementation by identifying and addressing the technological and

interaction design challenges through conceptual and technical

prototypes.

Methods

The design process has followed a user-centered design method

concurrently with development of state-of-the-art collision detection

and haptic rendering algorithms. This dual process allows for

identification of requirements that may be met with currently

available technology as well as opportunities for improvements in

certain essential technological areas of particular value to this

project. The design is informed and iteratively improved by field

studies, lo-fi and hi-fi prototypes, scenarios and co-operative

evaluation with oral/maxillofacial surgeons.

During the design process we are iteratively implementing two main

prototypes, following the concepts of a vertical (few features, but

hi-fi interaction) and a horizontal (conceptually all features of the

system, but lo-fi interaction) prototype. The horizontal prototype

allows the surgeon to execute a typical usage scenario from beginning

to end. While not all features are fully implemented, and some may

only be mock-ups, the purpose of the horizontal prototype is to elicit

feedback and modify the prototype and scenario to identify the most

important aspects of the system. The risk with a horizontal-only

prototype is that the designer might use materials and technologies

that are infeasible or even impossible to implement. We balance this

risk with vertical prototypes that fully implement critical components

requiring new technologies, which act as proof of concept and inform

the overall design of what can be built.

One essential property of the rehearsal environment is the possibility

of bi-manual six-degree-of-freedom positioning and orientation of

fractured mandibular bone in a way that looks and feels reassuring,

without requiring the user to learn a complex CAD-like system.

Real-time visualization of the dental occlusion and haptic feedback

conveying accurate contact forces while manipulating fractured bone

fragments are essential components of the system, and thus novel

technological development in these areas is required.
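To make the notion of six-degree-of-freedom positioning concrete, the following is a minimal sketch of a rigid pose (three translational plus three rotational degrees of freedom) applied to a point on a bone fragment. It is an illustration only, not the project's implementation: the names `Pose`, `quat_from_axis_angle`, `rotate` and `apply_pose` are hypothetical, and the units (millimetres) are an assumption. In a bi-manual setup, each haptic device would drive one such pose, one per fragment.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    """Hypothetical 6-DOF rigid pose: translation (3 DOF) plus rotation (3 DOF)."""
    translation: tuple  # (x, y, z), assumed to be in millimetres
    quaternion: tuple   # unit quaternion (w, x, y, z)


def quat_from_axis_angle(axis, angle_rad):
    """Build a unit quaternion from a rotation axis and an angle in radians."""
    ax, ay, az = axis
    norm = math.sqrt(ax * ax + ay * ay + az * az)
    s = math.sin(angle_rad / 2.0) / norm
    return (math.cos(angle_rad / 2.0), ax * s, ay * s, az * s)


def rotate(q, v):
    """Rotate vector v by unit quaternion q using v' = v + w*t + q_vec x t."""
    w, x, y, z = q
    vx, vy, vz = v
    # t = 2 * (q_vec x v)
    tx = 2.0 * (y * vz - z * vy)
    ty = 2.0 * (z * vx - x * vz)
    tz = 2.0 * (x * vy - y * vx)
    return (
        vx + w * tx + (y * tz - z * ty),
        vy + w * ty + (z * tx - x * tz),
        vz + w * tz + (x * ty - y * tx),
    )


def apply_pose(pose, point):
    """Map a point from fragment-local coordinates into the scene."""
    rx, ry, rz = rotate(pose.quaternion, point)
    tx, ty, tz = pose.translation
    return (rx + tx, ry + ty, rz + tz)


# Bi-manual use: one pose per hand, one fragment per pose (values are made up).
left_pose = Pose((-5.0, 0.0, 0.0), quat_from_axis_angle((0.0, 0.0, 1.0), 0.1))
right_pose = Pose((5.0, 0.0, 0.0), quat_from_axis_angle((0.0, 1.0, 0.0), -0.1))
left_vertex_in_scene = apply_pose(left_pose, (1.0, 2.0, 3.0))
right_vertex_in_scene = apply_pose(right_pose, (1.0, 2.0, 3.0))
```

A quaternion is used here rather than Euler angles because it avoids gimbal lock when the operator rotates a fragment freely. That choice belongs to this sketch and is not stated in the project description.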

Results

The steps and interactions we identified include loading a segmented

CT-scan of a trauma patient, viewing it in a stereoscopic display

co-located with a bi-manual haptic interface, manipulating the bone

fragments, and perceptualizing the occlusion between maxilla and

mandible both visually and haptically through force-feedback. In

addition, the surgeon may lock the fragments into key positions, view

them from different directions, decide on plate placement and screw

sizes, and finally generate a report of the resulting surgical plan.

Our initial prototype was a pure mock-up (figure 1) that

was used as a conversation artifact to improve the design and build

common ground between surgeons and engineers.

Our studies indicate that the success of such a system is highly

dependent on the fidelity of the haptic rendering. For the rehearsal

environment to be intuitive, the interaction must follow the direct

intention of the operator with smooth and accurate force feedback. A

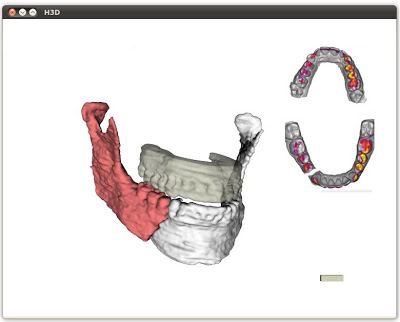

vertical prototype (figure 2) has been developed to focus on the

haptic rendering aspect of the system. It extends our group’s recent

work to formulate a new algorithm for rendering 6-DOF haptic

interaction between high-resolution volumetric representations of

objects (e.g. bone fragments from CT images). In addition to the

prototypes, we will report the results of a formative evaluation

study, which will include the grading of perceived usefulness and

fidelity of the interaction.
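The kind of contact computation that 6-DOF haptic rendering requires can be illustrated with a generic penalty-based sketch. This is not the group's algorithm, which is not described here. It is a common textbook-style approach, shown under stated assumptions: the static object is given as a signed distance field (an analytic sphere stands in for a field sampled from a CT volume), the moving fragment is a set of surface sample points, and every name (`sphere_sdf`, `sdf_gradient`, `contact_wrench`, `stiffness`) is hypothetical. Each penetrating point contributes a spring force along the outward normal, and the forces are accumulated into a net force and torque about a pivot.

```python
import math


def sphere_sdf(p, center=(0.0, 0.0, 0.0), radius=1.0):
    """Signed distance to a sphere (negative inside).

    Stand-in for a distance field that would be sampled from a CT volume.
    """
    d = [p[i] - center[i] for i in range(3)]
    return math.sqrt(sum(c * c for c in d)) - radius


def sdf_gradient(sdf, p, h=1e-4):
    """Normalized central-difference gradient, i.e. the outward normal."""
    g = []
    for i in range(3):
        hi = list(p)
        lo = list(p)
        hi[i] += h
        lo[i] -= h
        g.append((sdf(hi) - sdf(lo)) / (2.0 * h))
    n = math.sqrt(sum(c * c for c in g)) or 1.0  # guard against zero gradient
    return [c / n for c in g]


def cross(a, b):
    """Cross product of two 3-vectors."""
    return [
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    ]


def contact_wrench(sdf, surface_points, pivot, stiffness=1.0):
    """Sum penalty forces and torques over all penetrating sample points."""
    force = [0.0, 0.0, 0.0]
    torque = [0.0, 0.0, 0.0]
    for p in surface_points:
        depth = -sdf(p)  # positive when the point is inside the static object
        if depth <= 0.0:
            continue  # no contact at this sample
        normal = sdf_gradient(sdf, p)
        f = [stiffness * depth * c for c in normal]  # spring pushes outward
        r = [p[i] - pivot[i] for i in range(3)]      # lever arm from pivot
        t = cross(r, f)
        force = [force[i] + f[i] for i in range(3)]
        torque = [torque[i] + t[i] for i in range(3)]
    return force, torque


# One sample penetrates the sphere by 0.1; the other is outside and ignored.
points = [(0.9, 0.0, 0.0), (2.0, 0.0, 0.0)]
force, torque = contact_wrench(sphere_sdf, points, pivot=(0.9, 1.0, 0.0), stiffness=2.0)
```

A practical haptic renderer would typically need to evaluate this at a rate near 1 kHz and add stability measures such as damping or a virtual coupling, which this sketch omits.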

Conclusion

We show a maxillofacial surgery rehearsal environment that was developed

using an iterative design process with feedback from surgical specialists. The

system will permit surgeons to plan, simulate and rehearse complex repair of

maxillofacial fractures, including bone fragment and bone plate alignment in

three-dimensional space. We consider the benefits of a haptics-enabled system

for obtaining accurate surgical results, reducing operating time, and

providing a platform for surgical training in infrequent operations.

Figure 2. Screenshot from the interactive prototype. Each of the two

mandibular fracture segments can be moved with a left and a right haptic

device respectively.

Project Staff

Status

Active since 2010.

Funding Sources

Funded through VA Grant Number.