Image: Do Pham, Stanford


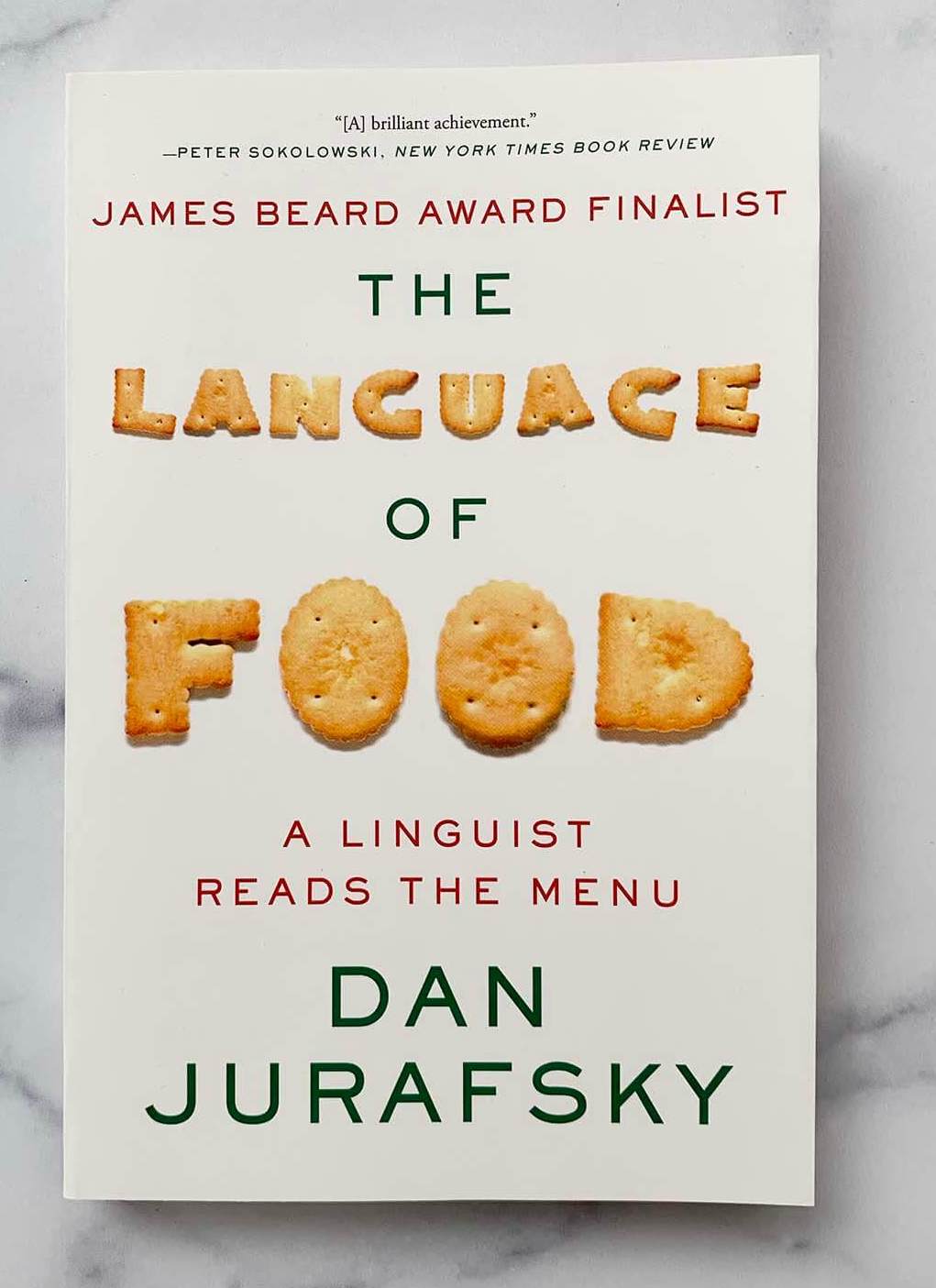

jurafsky@stanford.edu

Margaret Jacks 117, Stanford CA 94305-2150

X/BLUESKY: jurafsky, @jurafsky

TEACHING THIS YEAR

AUTUMN 2025: CS 329R: Race and Natural Language Processing (co-taught with Jennifer Eberhardt), Tue 1:30-4:00 PM

WINTER 2026: cs124/ling180: From Languages to Information, Tu/Thu 3:00 PM - 4:20 PM, Hewlett 200

SPRING 2026: OSPMADRD65M: The Language of Food, at Stanford's Madrid campus!

FOLLOWING YEAR: On sabbatical for 2026-2027

FOLLOWING FOLLOWING YEAR: CS124 probably in Winter 2028

EARLIER COURSES


2026 ARTICLES [ALL PUBS] [GOOGLE SCHOLAR]

Preprints

Myra Cheng, Isabel Sieh, Humishka Zope, Sunny Yu, Lujain Ibrahim, Aryaman Arora, Jared Moore, Desmond Ong, Dan Jurafsky, Diyi Yang. 2026. Verbalizing LLMs' assumptions to explain and control sycophancy. arXiv:2604.03058

Christine Zhang, Dan Jurafsky and Chen Shani. 2026. Concept Training for Human-Aligned Language Models. (ArXiv 2026)

2026

Myra Cheng, Cinoo Lee, Pranav Khadpe, Sunny Yu, Dyllan Han, Dan Jurafsky. Sycophantic AI Decreases Prosocial Intentions and Promotes Dependence. Science, Vol. 391, No. 6792.

Press: NY Times, AP, Scientific American.

Kaitlyn Zhou, Kristina Gligorić, Myra Cheng, Michelle S. Lam, Vyoma Raman, Boluwatife Amin, Caeley Woo, Michael Brockman, Hannah Cha, and Dan Jurafsky. Attention to Non-Adopters. Findings of ACL 2026.

Myra Cheng, Robert D. Hawkins, Dan Jurafsky. 2026. Accommodation and Epistemic Vigilance: A Pragmatic Account of Why LLMs Fail to Challenge Harmful Beliefs. To appear, ACL 2026. Press: IEEE Spectrum.

Bianca Datta, Markus J. Buehler, Yvonne Chow, Kristina Gligoric, Dan Jurafsky, David L. Kaplan, Rodrigo Ledesma-Amaro, Giorgia Del Missier, Lisa Neidhardt, Karim Pichara, Benjamin Sanchez-Lengeling, Miek Schlangen, Skyler R. St. Pierre, Ilias Tagkopoulos, Anna Thomas, Nicholas J. Watson, Ellen Kuhl. 2025. AI for Sustainable Future Foods. Draft to appear, Nature Food.

Isabel O. Gallegos, Chen Shani, Weiyan Shi, Federico Bianchi, Izzy Gainsburg, Dan Jurafsky, Robb Willer. 2026. Labeling messages as AI-generated does not reduce their persuasive effects. PNAS Nexus, Volume 5, Issue 2, February 2026, pgag008.

Moran Mizrahi, Chen Shani, Gabriel Stanovsky, Dan Jurafsky, Dafna Shahaf. 2026. Cooking Up Creativity: A Cognitively-Inspired Approach for Enhancing LLM Creativity through Structured Representations. In press, TACL 2026.

Moussa Koulako Bala Doumbouya, Dan Jurafsky, and Christopher D. Manning. 2026. Tversky Neural Networks: Psychologically Plausible Deep Learning with Differentiable Tversky Similarity. To appear, ICLR 2026.

Myra Cheng*, Sunny Yu*, Cinoo Lee, Pranav Khadpe, Lujain Ibrahim, Dan Jurafsky. ELEPHANT: Measuring and Understanding Social Sycophancy in LLMs. ICLR 2026. [code] Press coverage by MIT Technology Review and VentureBeat.

Chen Shani, Dan Jurafsky, Yann LeCun, Ravid Shwartz-Ziv. 2026. From Tokens to Thoughts: How LLMs and Humans Trade Compression for Meaning. ICLR 2026.

Martijn Bartelds*, Ananjan Nandi*, Moussa K. B. Doumbouya, Dan Jurafsky, Tatsunori Hashimoto, and Karen Livescu. 2026. CTC-DRO: Robust Optimization for Reducing Language Disparities in Speech Recognition. To appear, ICLR.

Mirac Suzgun, Mert Yuksekgonul, Federico Bianchi, Dan Jurafsky, James Zou. 2026. Dynamic Cheatsheet: Test-Time Learning with Adaptive Memory. EACL 2026.

Laya Iyer, Pranav Somani, Alice Guo, Dan Jurafsky, Chen Shani. 2026. Beyond Tokens: Concept-Level Training Objectives for LLMs. EACL 2026.