Table of contents

Keywords: forward-hook, activations, intermediate layers, pre-trained

As a researcher actively developing deep learning models, I have come to prefer PyTorch for its ease of usage, stemming primarily from its similarity to Python, especially Numpy. However, it has been surprisingly hard to find out how to extract intermediate activations from the layers of a model cleanly (useful for visualizations, debugging the model as well as for use in other algorithms). I am still amazed at the lack of clear documentation from PyTorch on this super important issue. In this post, I will attempt to walk you through this process as best as I can.

For the sake of an example, let’s use a pre-trained resnet18 model but the same techniques hold true for all models — pre-trained, custom or standard models.

If you’d like to follow along with code, post in the comments below. I will post an accompanying Colab notebook.

A Deep Network model – the ResNet18

from PIL import Image

import torch

from torchvision.models import resnet18

from torchvision import transforms as T

# input (single)

image = Image.open('cat.jpg')

transform = T.Compose([T.Resize((224, 224)), T.ToTensor()])

X = transform(image).unsqueeze(dim=0).to(device)

# original model

device = torch.device('cuda') if torch.cuda.is_available() else torch.device('cpu')

model = resnet18(pretrained=True)

model = model.to(device)

# forward pass -- getting the outputs

out = model(X)

Let’s dig into the architecture of the model here, shall we?

Open up the black box ⬇

print(model)

> ResNet(

(conv1): Conv2d(3, 64, kernel_size=(7, 7), stride=(2, 2),

padding=(3, 3), bias=False)

(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True,

track_running_stats=True)

(relu): ReLU(inplace=True)

(maxpool): MaxPool2d(kernel_size=3, stride=2, padding=1,

dilation=1, ceil_mode=False)

(layer1): Sequential(

(0): BasicBlock(

(conv1): Conv2d(64, 64, kernel_size=(3, 3),

stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(64, 64, kernel_size=(3, 3),

stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

)

(1): BasicBlock(

(conv1): Conv2d(64, 64, kernel_size=(3, 3),

stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(64, 64, kernel_size=(3, 3),

stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

)

)

(layer2): Sequential(

(0): BasicBlock(

(conv1): Conv2d(64, 128, kernel_size=(3, 3),

stride=(2, 2), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(128, 128, kernel_size=(3, 3),

stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

(downsample): Sequential(

(0): Conv2d(64, 128, kernel_size=(1, 1),

stride=(2, 2), bias=False)

(1): BatchNorm2d(128, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

)

)

(1): BasicBlock(

(conv1): Conv2d(128, 128, kernel_size=(3, 3),

stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(128, 128, kernel_size=(3, 3),

stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

)

)

(layer3): Sequential(

(0): BasicBlock(

(conv1): Conv2d(128, 256, kernel_size=(3, 3),

stride=(2, 2), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(256, 256, kernel_size=(3, 3),

stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

(downsample): Sequential(

(0): Conv2d(128, 256, kernel_size=(1, 1),

stride=(2, 2), bias=False)

(1): BatchNorm2d(256, eps=1e-05,

momentum=0.1, affine=True, track_running_stats=True)

)

)

(1): BasicBlock(

(conv1): Conv2d(256, 256, kernel_size=(3, 3),

stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(256, 256, kernel_size=(3, 3),

stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

)

)

(layer4): Sequential(

(0): BasicBlock(

(conv1): Conv2d(256, 512, kernel_size=(3, 3),

stride=(2, 2), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(512, 512, kernel_size=(3, 3),

stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

(downsample): Sequential(

(0): Conv2d(256, 512, kernel_size=(1, 1),

stride=(2, 2), bias=False)

(1): BatchNorm2d(512, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

)

)

(1): BasicBlock(

(conv1): Conv2d(512, 512, kernel_size=(3, 3),

stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(512, 512, kernel_size=(3, 3),

stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1,

affine=True, track_running_stats=True)

)

)

(avgpool): AdaptiveAvgPool2d(output_size=(1, 1))

(fc): Linear(in_features=512, out_features=1000, bias=True)

)

Accessing a particular layer from the model

Let’s say we want to access the batchnorm2d layer of the sequential downsample block of the first (index 0) block of layer3 in the ResNet model. We can just use the conventional way of accessing a class’s public functions or attributes via the “.” operator.

model.layer3[0].downsample[1]

Note that any named layer can directly be accessed by name whereas a Sequential block’s child layers needs to be access via its index. In the above example, both layer3 and downsample are sequential blocks. Hence their immediate children are accessed by index.

print(model.layer3[0].downsample[1])

> BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

Extracting activations from a layer

Method 1: Lego style

- A basic method discussed in PyTorch forums is to reconstruct a new classifier from the original one with the architecture you desire. For instance, if you want the outputs before the last layer (

model.avgpool), delete the last layer in the new classifier.

# remove last fully-connected layer

new_model = nn.Sequential(*list(model.children())[:-1])

To summarize:

- Get all layers of the model in a list by calling the

model.children()method, choose the necessary layers and build them back using theSequentialblock. You can even write fancy wrapper classes to do this process cleanly. However, note that if your models aren’t composed of straightforward, sequential, basic modules, this method fails.

Issues:

- What if you want the outputs of multiple intermediate layers? How do we go about doing this here? To give a concrete example for

ResNet, let’s say we want the concatenated outputs ofmodel.maxpool, our old friendmodel.layer3[0].downsample[1], themodel.avgpooland the popular layer that feeds into the softmax (i.e.,model.fc) for every forward pass of an image.model.fcis what the original model returns anyway. So we’re set.model.maxpoolis easy. Just get it as before formodel.avgpool.

# outputs after the max-pool layer

new_model = nn.Sequential(*list(model.children())[:4])

- What about the

model.layer3[0].downsample[1]outputs? Nope. That’s it! Can’t be done using this method.

Method 2: Hack the model

- The second method (or the hacker method — most common amongst student researchers who’d rather just rewrite the model code to get what they want instead of wasting time to make PyTorch work for them) is to just modify the

forward()block of the model and if needed, the Model class itself. Hey, don’t get me wrong. This works and gives you complete freedom and is much quicker fix. Let me illustrate how you would do this here.

First, let’s take a look at the source code for resnet18:

def resnet18(pretrained=False, progress=True, **kwargs):

"""ResNet-18 model from

"Deep Residual Learning for Image Recognition"

<https://arxiv.org/pdf/1512.03385.pdf>_

Args:

pretrained (bool): If True, returns a model pre-trained on ImageNet

progress (bool): If True, displays a progress bar of the download to stderr

"""

return _resnet('resnet18', BasicBlock, [2, 2, 2, 2], pretrained, progress, **kwargs)

Hm. Not too useful. A step lower maybe.

def _resnet(arch, block, layers, pretrained, progress, **kwargs):

model = **ResNet**(block, layers, **kwargs)

if pretrained:

state_dict = load_state_dict_from_url(model_urls[arch],

progress=progress)

model.load_state_dict(state_dict)

return model

Still doesn’t give us what we want. Onwards!

Open up to see the barebones ⬇

class ResNet(nn.Module):

def __init__(self, block, layers, num_classes=1000, zero_init_residual=False,

groups=1, width_per_group=64, replace_stride_with_dilation=None,

norm_layer=None):

super(ResNet, self).__init__()

if norm_layer is None:

norm_layer = nn.BatchNorm2d

self._norm_layer = norm_layer

self.inplanes = 64

self.dilation = 1

if replace_stride_with_dilation is None:

# each element in the tuple indicates if we should replace

# the 2x2 stride with a dilated convolution instead

replace_stride_with_dilation = [False, False, False]

if len(replace_stride_with_dilation) != 3:

raise ValueError("replace_stride_with_dilation should be None "

"or a 3-element tuple, got {}".format(replace_stride_with_dilation))

self.groups = groups

self.base_width = width_per_group

self.conv1 = nn.Conv2d(3, self.inplanes, kernel_size=7, stride=2, padding=3,

bias=False)

self.bn1 = norm_layer(self.inplanes)

self.relu = nn.ReLU(inplace=True)

self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)

self.layer1 = self._make_layer(block, 64, layers[0])

self.layer2 = self._make_layer(block, 128, layers[1], stride=2,

dilate=replace_stride_with_dilation[0])

self.layer3 = self._make_layer(block, 256, layers[2], stride=2,

dilate=replace_stride_with_dilation[1])

self.layer4 = self._make_layer(block, 512, layers[3], stride=2,

dilate=replace_stride_with_dilation[2])

self.avgpool = nn.AdaptiveAvgPool2d((1, 1))

self.fc = nn.Linear(512 * block.expansion, num_classes)

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')

elif isinstance(m, (nn.BatchNorm2d, nn.GroupNorm)):

nn.init.constant_(m.weight, 1)

nn.init.constant_(m.bias, 0)

# Zero-initialize the last BN in each residual branch,

# so that the residual branch starts with zeros, and each residual block behaves like an identity.

# This improves the model by 0.2~0.3% according to https://arxiv.org/abs/1706.02677

if zero_init_residual:

for m in self.modules():

if isinstance(m, Bottleneck):

nn.init.constant_(m.bn3.weight, 0)

elif isinstance(m, BasicBlock):

nn.init.constant_(m.bn2.weight, 0)

def _make_layer(self, block, planes, blocks, stride=1, dilate=False):

norm_layer = self._norm_layer

downsample = None

previous_dilation = self.dilation

if dilate:

self.dilation *= stride

stride = 1

if stride != 1 or self.inplanes != planes * block.expansion:

downsample = nn.Sequential(

conv1x1(self.inplanes, planes * block.expansion, stride),

norm_layer(planes * block.expansion),

)

layers = []

layers.append(block(self.inplanes, planes, stride, downsample, self.groups,

self.base_width, previous_dilation, norm_layer))

self.inplanes = planes * block.expansion

for _ in range(1, blocks):

layers.append(block(self.inplanes, planes, groups=self.groups,

base_width=self.base_width, dilation=self.dilation,

norm_layer=norm_layer))

return nn.Sequential(*layers)

def _forward_impl(self, x):

# See note [TorchScript super()]

x = self.conv1(x)

x = self.bn1(x)

x = self.relu(x)

x = self.maxpool(x)

x = self.layer1(x)

x = self.layer2(x)

x = self.layer3(x)

x = self.layer4(x)

x = self.avgpool(x)

x = torch.flatten(x, 1)

x = self.fc(x)

return x

def forward(self, x):

return self._forward_impl(x)

Aha! Jackpot. The forward function or in this case, the private method self._forward_impl. This is what we need to modify to give us intermediate outputs.

Here’s one way to do it:

from types import MethodType

def _forward_impl_MY(self, x):

# See note [TorchScript super()]

intermediate_outputs = []

x = self.conv1(x)

x = self.bn1(x)

x = self.relu(x)

x = self.maxpool(x)

intermediate_outputs.append(x)

x = self.layer1(x)

x = self.layer2(x)

x = self.layer3(x)

x = self.layer4(x)

x = self.avgpool(x)

intermediate_outputs.append(x)

x = torch.flatten(x, 1)

x = self.fc(x)

return x, intermediate_outputs

# Now to replace the original function with our function

model._forward_impl = MethodType(_forward_impl_my, model)

Of course, if it’s your own model that you are editing, this becomes a lot simpler.

So now we’ve got model.fc, model.maxpool and model.avgpool. But yet again, model.layer3[0].downsample[1] can’t be obtained without breaking abstraction. For this, we need to modify the _make_layer method. I will not go into the details of that here for lack of space, but know that it can be done.

Issues:

- This is quite tedious and messy especially if you’re not an advanced programmer, requiring you to edit the forward block and most likely the model itself. You would probably have to maintain multiple copies of the model class — the minimalistic one that has all the abstraction used for training and the other that reveals all the hidden abstractions useful for debugging and evaluation or at the very least, have them as different methods in the class. It involves a fair amount of copying and pasting and/or git branching and version control.

- When you’re dealing with standard downloaded pre-trained models or pre-trained models that you’ve obtained from someone else’s work, it is quite cumbersome to get the corresponding model definition code and make changes to the forward block as you can see from the example above. You have to spend a fair amount of time understanding their code and making it work for you, and in the process, almost entirely rewriting the entire class.

Method 3: Attach a hook

Whaa?

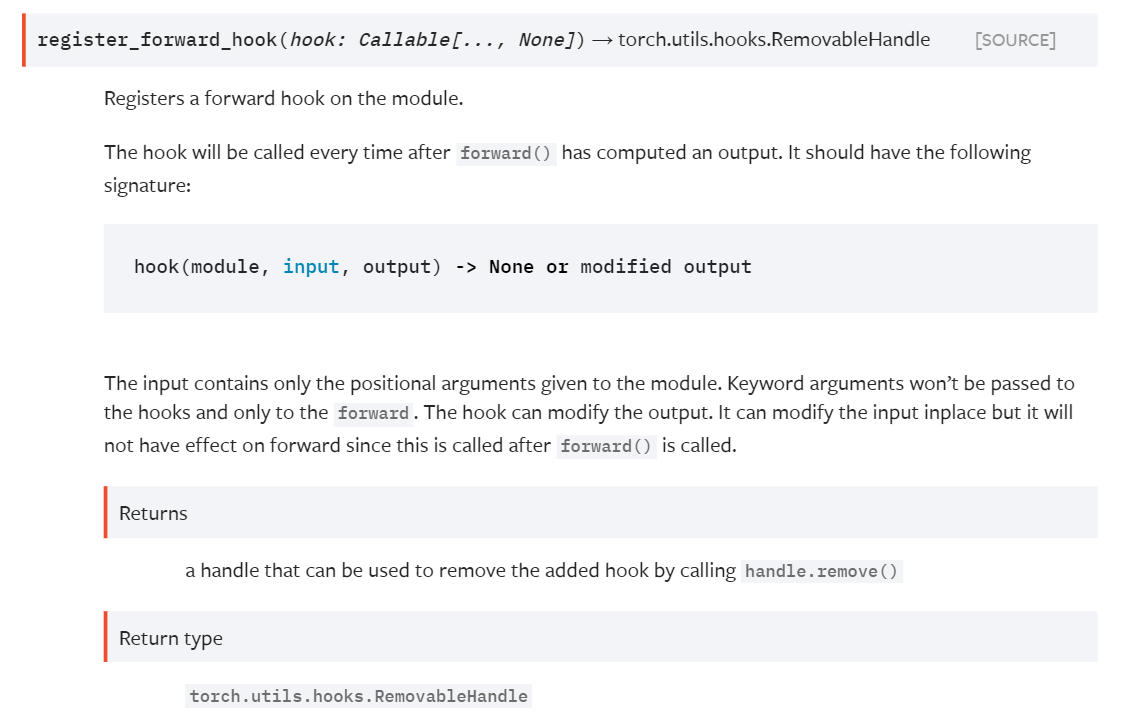

- The third and my personal favourite method for extracting activations during the forward pass of the model is by attaching a “forward_hook”. Yes, you read that right. Not heard of it? Not surprising. Heard of it but don’t know what it does? Still not surprising. Trying to find more about it but meeting a severe lack of documentation? Welcome to my world. For some reason, forward_hooks are seriously underdocumented for the functionality they provide. In PyTorch documentation, here’s the method

register_forward_hookunder thenn.Moduleclass definition.

register_forward_hook

Forward Hooks 101

Hooks are callable objects with a certain set signature that can be registered to any nn.Module object. When the forward() method is triggered in a model forward pass, the module itself, along with its inputs and outputs are passed to the forward_hook before proceeding to the next module. Since intermediate layers of a model are of the type nn.module, we can use these forward hooks on them to serve as a lens to view their activations.

Using the forward hooks

Coming back to our example, how do we use forward hooks to get to the layers we want? Simple.

- Have a function to call the hook signature and store the outputs in a dictionary

# a dict to store the activations

activation = {}

def getActivation(name):

# the hook signature

def hook(model, input, output):

activation[name] = output.detach()

return hook

- Register forward hooks on the layers you want.

h = model.layer-name.register_forward_hook(getActivation(name))

- Detach the hooks after the forward pass

h.remove()

Let’s put a complete picture together.

from PIL import Image

import torch

from torchvision.models import resnet18

from torchvision import transforms as T

# input (single)

image = Image.open('cat.jpg')

transform = T.Compose([T.Resize((224, 224)), T.ToTensor()])

X = transform(image).unsqueeze(dim=0).to(device)

# original model

device = torch.device('cuda') if torch.cuda.is_available() else torch.device('cpu')

model = resnet18(pretrained=True)

model = model.to(device)

# a dict to store the activations

activation = {}

def getActivation(name):

# the hook signature

def hook(model, input, output):

activation[name] = output.detach()

return hook

# register forward hooks on the layers of choice

h1 = model.avgpool.register_forward_hook(getActivation('avgpool'))

h2 = model.maxpool.register_forward_hook(getActivation('maxpool'))

h3 = model.layer3[0].downsample[1].register_forward_hook(getActivation('comp'))

# forward pass -- getting the outputs

out = model(X)

print(activation)

# detach the hooks

h1.remove()

h2.remove()

h3.remove()

activationshould now contain 3 items with keysavgpool,maxpoolandcompcontaining the activations for themodel.avgpool,model.maxpoolandmodel.layer3[0].downsample[1]modules respectively.

Hooks with Dataloaders

If you have a dataloader instead of a single data input instance, here’s a code snippet that can make your life easier.

from PIL import Image

import torch

from torchvision.models import resnet18

from torchvision import transforms as T

from torch.utils.data import Dataset, DataLoader

import user_defined_dataset as transformed_dataset

# dataloader example instead of single input

dataloader = DataLoader(transformed_dataset, batch_size=4,

shuffle=True, num_workers=0)

# original model

device = torch.device('cuda') if torch.cuda.is_available() else torch.device('cpu')

model = resnet18(pretrained=True)

model = model.to(device)

# a dict to store the activations

activation = {}

def getActivation(name):

# the hook signature

def hook(model, input, output):

activation[name] = output.detach()

return hook

# register forward hooks on the layers of choice

h1 = model.avgpool.register_forward_hook(getActivation('avgpool'))

h2 = model.maxpool.register_forward_hook(getActivation('maxpool'))

h3 = model.layer3[0].downsample[1].register_forward_hook(getActivation('comp'))

avgpool_list, maxpool_list, comp_list = [], [], []

# go through all the batches in the dataset

for X, y in dataloader:

# forward pass -- getting the outputs

out = model(X)

# collect the activations in the correct list

avgpool_list.append(activation['avgpool']

maxpool_list.append(activation['maxpool']

comp_list.append(activation['comp']

# detach the hooks

h1.remove()

h2.remove()

h3.remove()

And there you go! A clean solution for this problem. Hope it was useful!

Note:

- You can always write more sophisticated wrapper classes for the hooks. Here are a couple that might be worth checking out: link 1 and link 2.

- For models with dynamic graphs, forward_hooks might not be able to help either. In that case, the only good option is to hack your way forward.

Acknowledgements

Thanks to Ashwin Paranjape for the useful discussion and pointers :)