Support vector machines

Contents

Support vector machines#

Linear classifiers can be described geometrically by separating hyperplanes.

An affine function $\( x \mapsto \beta_0 + \sum_{j=1}^p x_j \beta_j \)\( determines a hyperplane \)\( H = \left\{x: \sum_{j=1}^p x_j \beta_j + \beta_0 = 0 \right\}. \)\( and two half-spaces \)\( \left\{x: \sum_{j=1}^p x_j \beta_j + \beta_0 > 0 \right\}, \qquad \left\{x: \sum_{j=1}^p x_j \beta_j + \beta_0 < 0 \right\}. \)$

The vector \(N=(\beta_1, \dots, \beta_p)\) is the normal vector of the hyperplane \(H\).

For a given \(H\), by scaling, we can always choose \(N\) so that \(\|N\|_2=1\) (we must also scale \(\beta_0\) to keep \(H\) the same).

Support vector machines#

Hyperplanes and normal vectors#

Function is \(x \mapsto 1 + 2 x_1 + 3 x_2\)

\(\{x: 1+2x_1+3x_2>0\}\)

\(\{x: 1+2x_1+3x_2>0\}\)

Support vector machines#

Hyperplanes and normal vectors#

- If the hyperplane goes through the origin $(\beta_0=0)$, the deviation between a point $(x_1,\dots,x_p)$ and the hyperplane is the dot product: $$x\cdot \beta = x_1\beta_1 + \dots + x_p\beta_p.$$

- The sign of the dot product tells us on which side of the hyperplane the point lies.

- If the hyperplane goes through a point $-\beta_0 \beta$, i.e. it is displaced from the origin by $-\beta_0$ along the normal vector $(\beta_1, \dots, \beta_p)$, the deviation of a point $(x_1,\dots,x_p)$ from the hyperplane is: $$ \beta_0 + x_1\beta_1 + \dots + x_p\beta_p.$$

- The sign tells us on which side of the hyperplane the point lies.

Support vector machines#

Maximal margin classifier#

- Suppose we have a classification problem with response $Y=-1$ or $Y=1$.

- If the classes can be separated, most likely, there will be an infinite number of hyperplanes separating the classes.

Support vector machines#

Maximal margin classifier#

Idea:

- Draw the largest possible empty margin around the hyperplane.

- Out of all possible hyperplanes that separate the 2 classes, choose the one with the widest margin.

Support vector machines#

Maximal margin classifier#

This can be written as an optimization problem:

\(M\) is simply the width of the margin in either direction.

Ultimately, we will see that this problem can be transformed to something similar to logistic regression…

Support vector machines#

Finding the maximal margin classifier#

We can reformulate the problem by defining a vector \(w=(w_1,\dots,w_p) = \beta/M\):

This is a quadratic optimization problem. Having found \((\hat{\beta}_0, \hat{w})\) we can recover \(\hat{\beta}=\hat{w}/\|\hat{w}\|_2, M=1/\|\hat{w}\|_2\).

Support vector machines#

Finding the maximal margin classifier#

Introducing Karush-Kuhn-Tucker (KKT) multipliers, \(\alpha_1,\dots,\alpha_n\), this is equivalent to:

Support vector machines#

Finding the maximal margin classifier#

- Setting the partial derivatives with respect to $w$ and $\beta_0$ to 0, we get: $$\hat w = \sum_{i=1}^n \alpha_i y_i x_i, \quad \sum_{i=1}^n\alpha_iy_i = 0$$

- Furthermore, one of the KKT conditions yields $\alpha_i>0$ if and only if $y_i(\beta_0 +w\cdot x_i)=1$, that is, if $x_i$ falls on the margin.

Support vector machines#

Support vectors#

The vectors that fall on the margin and determine the solution are called support vectors

Support vector machines#

Finding the maximal margin classifier#

The solution is \(\hat w = \sum_{i=1}^n \alpha_i y_i x_i\), and \(\sum_{i=1}^n \alpha_iy_i=0\) so we can plug this in above to obtain the dual problem:

Support vector machines#

Maximal margin classifier#

Summary#

We’ve reduced the problem of finding \(w\), which describes the hyperplane and the size of the margin, to finding a set of coefficients \(\alpha_1,\dots,\alpha_n\) through:

This only depends on the training sample inputs through the inner products \(x_i\cdot x_j\) for every pair \(i,j\).

Whatever \(p\) is, this is an optimization problem in \(n\) variables. \(\to\) could be \(p \gg n\).

Support vector machines#

Support vector classifier#

Problem: It is not always possible to separate the points using a hyperplane.

Support vector classifier:

- Relaxation of the maximal margin classifier.

- Allows a number of points to be on the wrong side of the margin or even the hyperplane by allowing *slack* $\epsilon_i$ for each case.

Support vector machines#

Support vector classifier#

A new optimization problem: $\( \begin{align*} &\max_{\beta_0,\beta,\epsilon}\;\; M\\ &\text{subject to } \sum_{j=1}^p \beta_j^2 = 1,\\ &\underbrace{y_i(\beta_0 +\beta_1x_{i1}+\dots+\beta_p x_{ip})}_\text{How far is \)x_i\( from the hyperplane} \geq M(1-\epsilon_i) \quad \text{ for all }i=1,\dots,n\\ &\epsilon_i \geq 0 \;\text{for all }i=1,\dots,n, \quad \sum_{i=1}^n \epsilon_i \leq C. \end{align*}\\ \)$

\(M\) is the width of the margin in either direction

\(\epsilon=(\epsilon_1,\dots,\epsilon_n)\) are called slack variables

\(C\) is called the budget

Support vector machines#

Support vector classifier#

Tuning the budget, \(C\) (high to low)#

Support vector machines#

Support vector classifier#

If the budget is too low, we tend to overfit#

Maximal margin classifier, \(C=0\).

Adding one observation dramatically changes the classifier.

Support vector machines#

Support vector classifier#

Finding the support vector classifier#

We can reformulate the problem by defining a vector \(w=(w_1,\dots,w_p) = \beta/M\):

The penalty \(D\geq 0\) serves a function similar to the budget \(C\), but is inversely related to it.

Support vector machines#

Support vector classifier#

Finding the support vector classifier#

Introducing KKT multipliers, \(\alpha_i\) and \(\mu_i\), this is equivalent to: $\( \begin{align*} &\max_{\alpha,\mu}\; \min_{\beta_0,w,\epsilon}\;\; \frac{1}{2}\|w\|^2 - \sum_{i=1}^n \alpha_i [y_i(\beta_0 +w\cdot x_i)-1+\epsilon_i] + \sum_{i=1}^n (D-\mu_i)\epsilon_i\\ &\text{subject to } \;\;\alpha_i \geq 0, \mu_i\geq 0 , \quad \text{ for all }i=1,\dots,n. \end{align*} \)$

Support vector machines#

Support vector classifier#

Finding the support vector classifier#

- Setting the derivatives with respect to $w$, $\beta_0$, and $\epsilon$ to 0, we obtain: $$\hat w = \sum_{i=1}^n \alpha_i y_i x_i, \quad \sum_{i=1}^n\alpha_i y_i = 0, \quad \mu_i=D-\alpha_i$$

- Furthermore, the KKT conditions yield $\alpha_i>0$ if and only if $y_i(\beta_0 +w\cdot x_i)\leq 1$, that is, if $x_i$ falls on the wrong side of the margin.

Support vector machines#

Support vector classifier#

Support vectors#

Support vector machines#

Support vector classifier#

The problem only depends on \(x_i\cdot x_{i'}\)#

As with the maximal margin classifier, the problem can be reduced to finding \(\alpha_1,\dots,\alpha_n\):

As for maximal margin classifier, this only depends on the training sample inputs through the inner products \(x_i\cdot x_j\) for every pair \(i,j\).

Why care?

Support vector machines#

Support vector classifier#

Key fact about the support vector classifier#

To find the hyperplane all we need to know is the dot product between any pair of input vectors:

K_f(x_i, x_j) = \langle f(x_i), f(x_j) \rangle, \qquad K_f(x, x_i) = \langle f(x), f(x_i) \rangle $$

Support vector machines#

Support vector classifier#

How to create non-linear boundaries?#

The support vector classifier can only produce a linear boundary.

Support vector machines#

Support vector classifier#

How to create non-linear boundaries?#

- In **logistic regression**, we dealt with this problem by adding transformations of the predictors.

- The original decision boundary is a hyperplane: $$\log \left[ \frac{P(Y=1|X)}{P(Y=0|X)} \right] = \beta_0 + \beta_1 X_1 + \beta_2 X_2 = 0.$$

- With a quadratic predictor, we get a quadratic boundary: $$\log \left[ \frac{P(Y=1|X)}{P(Y=0|X)} \right] = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \beta_3 X_1^2= 0.$$

Support vector machines#

Support vector classifier#

How to create non-linear boundaries?#

- With a **support vector classifier** we can apply a similar trick.

- The original decision boundary is the hyperplane defined by: $$ \beta_0 + \beta_1 X_1 + \beta_2 X_2 = 0.$$

- If we expand the set of predictors to the 3D space $(X_1,X_2,X_1^2)$, the decision boundary becomes: $$ \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \beta_3 X_1^2= 0.$$

- This is in fact a linear boundary in the 3D space. However, we can classify a point knowing just $(X_1,X_2)$. The boundary in this projection is quadratic in $X_1$.

Support vector machines#

Support vector classifier#

How to create non-linear boundaries?#

- **Idea:** Add polynomial terms up to degree $d$: $$Z = (X_1, X_1^2, \dots, X_1^d, X_2, X_2^2, \dots, X_2^d, \dots, X_p, X_p^2, \dots, X_p^d).$$

- Does this make the computation more expensive?

- Recall that all we need to compute is the dot product: $$x_i \cdot x_{k} = \langle x_i,x_k\rangle = \sum_{j=1}^p x_{ij}x_{kj}.$$

- With the expanded set of predictors, we need: $$z_i \cdot z_{k} = \langle z_i,z_k\rangle = \sum_{j=1}^p\sum_{\ell=1}^d x^\ell_{ij}x^\ell_{kj}.$$

- Modulo computing these dot products, the order of computations is the same as with linear features. *Not quite true for logistic regression as described above.*

Support vector machines#

Kernels and the kernel trick#

The kernel matrix defined by \(K(x_i,x_k) = \langle z_i, z_k \rangle\) for a set of linearly independent vectors \(z_1,\dots,z_n\) is always symmetric and positive semi-definite, i.e. has no negative eigenvalues.

Theorem: If \(K\) is a positive definite \(n\times n\) matrix, there exist vectors \((z_1,\dots,z_n)\) in some space \(\mathbf{Z}\), such that \(K(x_i,x_k) = \langle z_i,z_k\rangle\).

We just need \(\mathbf{K}_{ij}=K(x_i,x_j)\) to classify all of our learning examples, but to classify we need to know \(x \mapsto K(x, x_i)\).

The kernel \(K:\mathbb{R}^p \times \mathbb{R}^p \rightarrow \mathbb{R}\) should be such that for any choice of \(\{x_1, \dots, x_n\}\) the matrix \(\mathbf{K}\) is positive semidefinite.

Such kernel functions are associated to reproducing kernel Hilbert spaces (RKHS).

Support vector classifiers using this kernel trick are support vector machines…

Support vector machines#

Kernels and the kernel trick#

With a kernel function \(K\), the problem can be reduced to the optimization:

- This is the dual problem of a *different* optimization problem than we started with.

- Predictions can be computed similarly to original kernel $K(x,y)=x \cdot y$. (Details omitted.)

Support vector machines#

Kernels and the kernel trick#

| **Expand the set of predictors:** | **Define a kernel:** |

|

|

Support vector machines#

The polynomial kernel with \(d=2\):#

This is equivalent to the expansion: $\( \begin{align*} \Phi(x) =& (\sqrt{2}x_1,\dots,\sqrt{2}x_p, \\ & x_1^2,\dots,x_p^2, \\ & \sqrt{2}x_1x_2,\sqrt{2}x_1x_3, \dots, \sqrt{2}x_{p-1}x_p) \end{align*} \)$

- For a single $x$, computing $K(x,x_k)$ directly is $O(p)$.

- For a single $x$ computing the kernel using the expansion is $O(p^2)$.

Support vector machines#

How are kernels defined?#

- Proving that a bilinear function $R(\cdot,\cdot)$ is positive definite (PD) is not always easy.

- <2-> However, we can easily define PD kernels by combining those we are familiar with:

- Sums and products of PD kernels are PD.

- Intuitively, a kernel $K(x_i,x_k)$ defines a \emph{similarity} between the samples $x_i$ and $x_k$.

- This intuition can guide our choice in different problems.

Support vector machines#

Common kernels#

- The polynomial kernel: $$K(x_i,x_k) = \left( 1+ \langle x_i,x_k\rangle\right)^d$$

- The radial basis kernel: $$K(x_i,x_k) = \exp\big(-\gamma\underbrace{\sum_{j=1}^p (x_{ip}-x_{kp})^2}_\text{Euclidean $d(x_i,x_k)$}\big)$$

Support vector machines#

Summary so far#

- As noted above **support vector machine** is a support vector classifier applied on an expanded set of predictors, e.g. $$\Phi: (X_1,X_2) \to (X_1,X_2,X_1X_2, X_1^2,X_2^2).$$

- We expand the vector of predictors for each sample $x_i$ and then perform the algorithm.

- We only need to know the dot products: $$ \langle \Phi(x_i),\Phi(x_k) \rangle \equiv K(x_i,x_k)$$ for every pair of samples $(x_i,x_k)$ as well as how to compute $\langle \Phi(x), \Phi(x_i) \rangle$ for new $x$'s.

- Often, the dot product: $$\langle \Phi(x_i),\Phi(x_k) \rangle \equiv K(x_i, x_k) $$ is a simple function $f(x_i,x_k)$ of the original vectors. Even if the mapping $\Phi$ significantly expands the space of predictors.

Support vector machines#

Summary so far#

- **Example 1:** Polynomial kernel

- $$K(x_i,x_k) = (1+ \langle x_i,x_k \rangle)^2.$$

- With two predictors, this corresponds to the mapping: $$\Phi: (X_1,X_2) \to (\sqrt{2}X_1,\sqrt{2}X_2,\sqrt{2}X_1X_2, X_1^2,X_2^2).$$

- **Example 2:** RBF kernel

$$K(x_i,x_k) = \exp(-\gamma d( x_i,x_k )^2),$$

where $d$ is the Euclidean distance between $x_i$ and $x_k$.

- In this case, the mapping $\Phi$ is an expansion into an infinite number of transformations!

- We can apply the method even if we don't know what these transformations explicitly are.

Support vector machines#

Kernels for non-standard data types#

- We can define families of kernels (with tuning parameters), which capture similarity between non-standard kinds of data:

- Text, strings

- Images

- Graphs

- Histograms

- Sometimes we know the mapping $\Phi$, but there are algorithms that are fast for computing $K(x_i,x_k)$ without doing the expansion explicitly.

- Other times, the expansion $\Phi$ is infinite-dimensional or simply not known.

Support vector machines#

Kernels for non-standard data types#

Example: strings#

Suppose we want to compare two strings in a finite alphabet:

- **Stringdot kernel:** For each word $u$ of length $p$, we define a feature: $$\Phi_u(x_i) = \text{# of times that }u\text{ appears in }x_i$$

- Naive algorithm would require looping over each sequence, for every subsequence $u$ of length $p$.

- This would be $O(n^2)$ steps, where $n$ is the length of the sequences.

Support vector machines#

Kernels for non-standard data types#

Example: strings#

Suppose we want to compare two strings in a finite alphabet: $\(x_1 = ACCTATGCCATA\)\( \)\(x_2 = AGCTAAGCATAC\)$

- **Gap weight kernel:** For each word $u$ of length $p$, we define a feature: $$\Phi_u(x_i) = \sum_{v: \text{a subsequence of $x_i$ containing $u$}} \lambda^{\text{length}(v)} $$ with $0<\lambda\leq 1$.

- The number of features can be huge! However, this can be computed in $\mathcal O(Mp\log n )$ steps where $M$ is the number of matches.

Support vector machines#

Applying SVMs with more than 2 classes#

- SVMs don't generalize nicely to the case of more than 2 classes.

- Two main approaches:

- **One vs. one:** Construct $n \choose 2$ SVMs comparing every pair of classes. Apply all SVMs to a test observation and classify to the class that wins the most one-on-one challenges.

- **One vs. all:** For each class $k$, construct an SVM $\beta^{(k)}$ coding class $k$ as $1$ and all other classes as $-1$. Assign a test observation to the class $k^*$, such that the distance from $x_i$ to the hyperplane defined by $\beta^{(k^*)}$ is largest (the distance is negative if the sample is misclassified).

Support vector machines#

Relationship of SVM to logistic regression#

Recall the Lagrange form of the problem.

Support vector machines#

Relationship of SVM to logistic regression#

- Set $D=1/\lambda$ and minimize over $\epsilon_i$ explicitly.

- If $1 - y_i(\beta+0 + w \cdot x_i) \leq 0$ we can take $\hat{\epsilon}_i=0$. Otherwise, we take $$\hat{\epsilon}_i=1 - y_i(\beta_0 + w \cdot x_i).$$

- Or, $$\hat{\epsilon}_i = \max(1 - y_i(\beta_0+w \cdot x_i), 0).$$

Support vector machines#

Relationship of SVM to logistic regression#

- Plugging this into the objective (and replacing $w$ with $\beta$) yields $$ \begin{align*} &\min_{\beta}\;\; \sum_{i=1}^n \max(1 - y_i(\beta_0 + \sum_{j=1}^p \beta_j x_{ij}, 0) + \frac{\lambda}{2}\sum_{j=1}^p\|\beta_j\|^2 + \\ \end{align*} $$

- This loss is a function of $y_i(\beta_0 + \sum_{j=1}^p \beta_j x_{ij}$ and a ridge penalty.

- Loss for logistic regression is also a function of $y_i(\beta_0 + \sum_{j=1}^p \beta_j x_{ij}$.

- Large $\lambda$ $\iff$ small $D$ $\iff$ large $C$.

Support vector machines#

Relationship of SVM to logistic regression#

Comparing the losses#

Support vector machines#

The kernel trick can be applied beyond SVMs#

Kernels and dot products:

- Associated to a positive definite $R$ is a dot product. For $x$ in the feature space $\mathbb{R}^p$, define $R_x:\mathbb{R}^p \rightarrow \mathbb{R}$ by $$ R_x(x_0) = R(x,x_0) $$

- The kernel defines a dot product on linear combinations of the $R_x$'s for different $x$'s: $$\left \langle \sum_j c_j R_{x_j}, \sum_i d_i R_{y_i} \right \rangle_R = \sum_{i,j} c_j d_i R(x_j,y_i) $$ and hence a length $$ \|\sum_j c_j R_{x_j}\|^2_R = \sum_{i,j} c_i c_j R(x_i, x_j) $$

Support vector machines#

The kernel trick can be applied beyond SVMs#

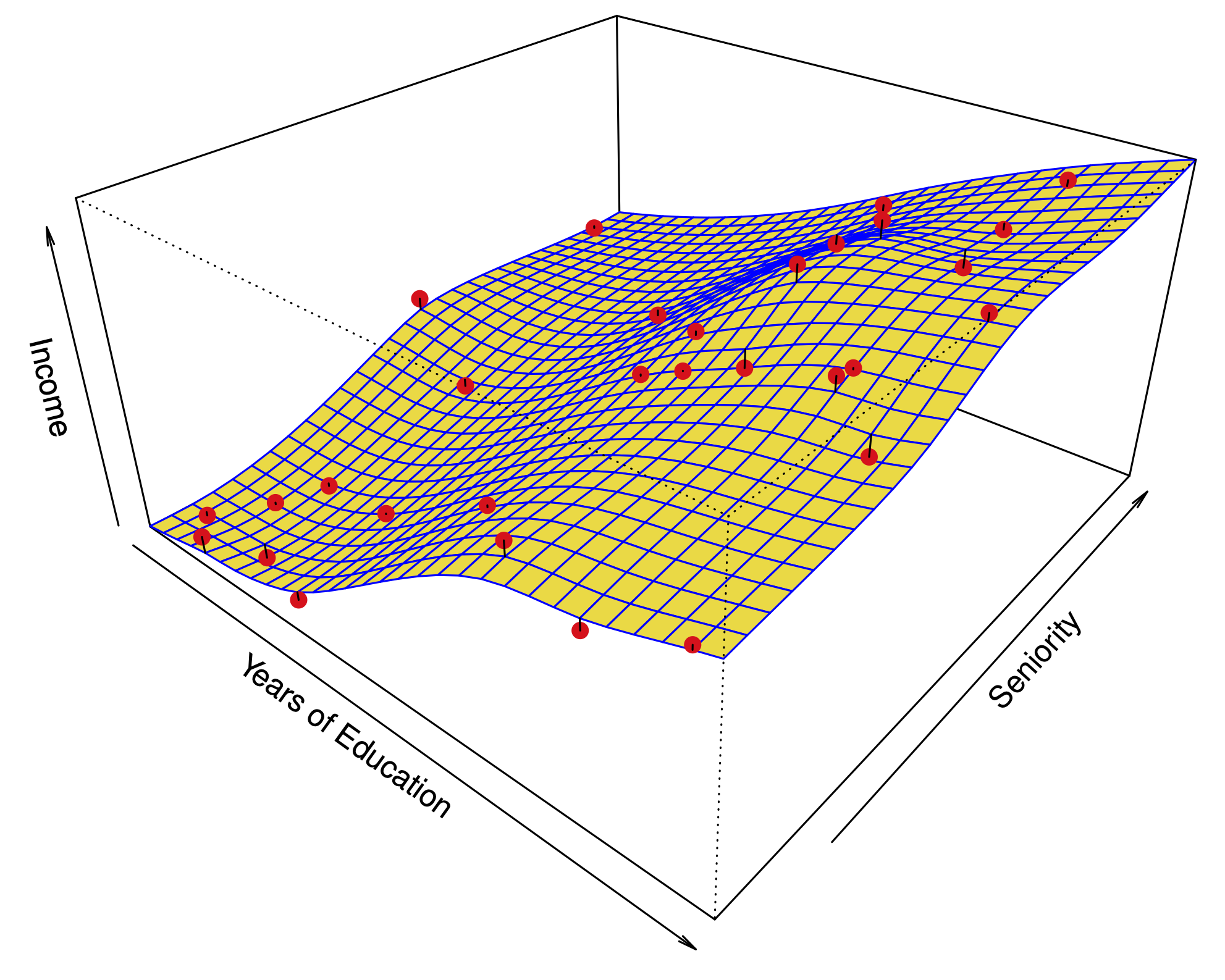

Kernel regression:

- For tuning parameter $\lambda$ define $$ \hat{f}_{\lambda} = \text{argmin}_f \sum_{i=1}^n (Y_i - f(X_i))^2 + \lambda \|f\|^2_K $$

- Remarkably, it is known that $$ \hat{f} = \sum_{i=1}^n \hat{\alpha}_i K_{X_i}. $$ for some $\hat{\alpha}$.

Support vector machines#

The kernel trick can be applied beyond SVMs#

Kernel regression:

- Problem reduces to finding $$ \hat{\alpha} = \text{argmin}_{\alpha} \sum_{i=1}^n (Y_i - \sum_{j=1}^n \alpha_j K(X_i, X_j))^2 + \lambda \sum_{l, r=1}^n \alpha_l \alpha_r K(X_i, X_j) $$

- Finding $\hat{\alpha}$ is just like ridge regression!

- Similar phenomenon for logistic regression...

- Just like smoothing splines, we solved a problem over an big space of functions!

- Smoothing splines are a special case of the kernel trick...

- Like support vector machines, optimization is over an $n$-dimensional vector, not a $p$-dimensional vector as in linear regression...

Support vector machines#

The kernel trick can be applied beyond SVMs#

Kernel PCA:

- Suppose we want to do PCA with an expanded set of predictors, defined by the mapping $\Phi$.

- First principal component is $$ \hat{f}_1 = \text{argmax}_{f: \|f\|_K \leq 1} \hat{\text{Var}}(f(X)). $$

- Even if $\Phi$ expands the predictors to a very high dimensional space, we can do PCA!

- The cost only depends on the number of observations $n$.

- **Long story short:** Kernel trick allows transformation of linear methods into nonlinear ones by *implicit* featurization.