Assignment 2#

You may discuss homework problems with other students, but you have to prepare the written assignments yourself.

Please combine all your answers, the computer code and the figures into one PDF file, and submit a copy to your folder on Gradescope.

Grading scheme: 10 points per question, total of 50.

Due date: 11:59 PM Thursday May 8, 2025

Download RMarkdown#

Part A#

Consider regressing a multivariate \(Y_{n \times q}\) onto \(X_{n \times p}\), i.e. \(Y|X \sim N(XB, I \otimes \Sigma)\).

Describe a realistic data example for which you’d expect the reduced rank regression model to be a better estimator than the MLE.

Write a function to simulate from your data generating mechanism and compare the reduced rank regression model (using 5-fold cross-validation to choose the rank) with the MLE. Can you make the reduced rank model obviously better than the MLE?

Fit a reduced rank multivariate regression model using the

Yresponses as a function of theXresponses in the Stock portfolio performance data in the UCI archive (include an intercept in the model). Take the 1st through 4th period and stack the data usingrbindyielding roughly 250 instances. Use 5-fold cross-validation to choose the rank.

The data on the UCI website is a bit off. You can find a cleaned up version of the data here.

For Y, use columns ['Annual Return', 'Excess Return', 'Systematic Risk', 'Total Risk', 'Abs. Win Rate', 'Rel. Win Rate'].

For X, use columns `[‘Large B/P’, ‘Large ROE’, ‘Large S/P’, ‘Large Return Rate in the last quarter’, ‘Large Market Value’, ‘Small systematic Risk’].

Normalize the columns of X and Y to have standard deviation 1.

The column period contains the period information. Use periods 1-4.

Test the null hypothesis \(H_0: B(I - 1/q 11^T)=0\) using Wilks’ \(\Lambda\). For a p-value use Bartlett’s approximation (3.7.11) from MKB.

Test the overall goodness of fit of the regression model, i.e. test the null \(H_0:XB = 1\gamma^T\) for some \(\gamma \in \mathbb{R}^q\) (i.e. . Use Wilks’ \(\Lambda\) again and Bartlett’s approximation.

Part B#

The proximal map \(\pi_{\lambda}\) of the group LASSO with one group is

Show that

That is, the solution to the above minimization problem is \(\pi_{\lambda / L}\).

Write a function to compute the proximal map \(\pi_{\lambda}\) for the block \(\ell_2\) norm:

\[ \mathbb{R}^{p \times q} \ni B \mapsto \lambda \sum_{j=1}^p \|B[j,]\|_2 \]It should take argument \(Z \in \mathbb{R}^{p \times q}\) and \(\lambda\).

Use your function to implement proximal gradient descent to solve the group LASSO problem

\[ \text{minimize}_B \ell(B) + \lambda \sum_{j=1}^p \|B[j,]\|_2 \]The iterations are of the form

\[ \widehat{B}^{(k+1)} = \pi_{\lambda / L}\left(\widehat{B}^{(k)} - \frac{1}{L} \nabla \ell(\widehat{B}^{(k)}) \right) \]For your loss function, take \(\ell(B) = \ell(B;X,Y) = \frac{1}{n} \|Y-XB\|^2_F\) the squared Frobenius loss (i.e. assume \(\Sigma=I\) in the “normal data matrix” model for \(Y|X\)).

We’ll use this algorithm to fit the block group LASSO model to the stock performance data above on a grid of \(\lambda\) values. Before fitting, standardize both \(X\) and \(Y\) to have mean 0 and a standard deviation of 1. The largest \(\lambda\) for which the solution is 0 is often referred to as \(\lambda_{\max}\). Check that \(\lambda_{\max}\) for this group LASSO is

Do this by verifying that at \(1 + \delta\) times this value the solution is 0 while it is i non-zero at \(1-\delta\) for appropriately small \(\delta\). Having found \(\lambda_{\max}\), fit the block group LASSO

at values \(\lambda_{\max} \cdot \texttt{seq(0.05, 1, length=20)}\). Compare your solutions to glmnet using family=mgaussian. Use 5-fold cross-validation in cv.glmnet to pick \(\lambda\).

Part C: PCA on the digits data#

Download the training data for the MNIST handwritten digit dataset. There are several packages in

Rto do this, as well aspython. InRit can be found in thedslabspackage, inpythonit can be found intorchvision.datasets(there are certainly other places as well). A smaller subset can be found here.Extract the

7’s in the training data into a data matrixX. Reforming the images into vectors, compute the PCA of the7’s. Ensure you have centered the data. What problems do you encounter if you try to scale the data?Make a screeplot of the singular values. Do you see an “elbow”? How many components are needed to explain 90% of the variance in the

7’s? Call this rank \(r_{90}\)Pick a random set of 5 of the images. For each of the 5 images, make a plot of the rank \(k\) reconstruction of that image (i.e. for the \(i\)-th case this reconstruction is the \(i\)-th row of \(\sum_{j=1}^k u_j d_j v_j^T\)) for \(k\) in [5, 10, \(r_{90}\), 100, 200]. Does the reconstruction “look like” the original at \(r_{90}\) or does the visual quality keep improving as you increase the rank of the approximation?

Part D: Procrustes transformation#

Read the discussion on the Procrustes problem in Chapter 14 of ESL II.

Solve Problem 14.8 of ESL II.

Find the Procrustes rotation relating the first

Sto the secondSin the signature data.Moving on to the Procrustes average, argue that the algorithm presented is a descent algorithm on the objective (14.59)

Find the Procrustes average of the 3

Simages in the signature data.

Part E: Smoother PCA#

One formulation we saw of PCA for a data matrix \(X_{n \times p}\) involved solving the problem

Informally, each row of \(X\) is ultimately approximated by a linear combination of rows of an estimated \(B\) matrix, the “decoder”. In some situations (the MNIST data above being one, the CGH example in our discussion of PMD being another), the columns are arranged in such a fashion that it makes sense to impose some constraint on the rows of \(B\), i.e. that each row can be represented as a linear combination of some set of \(K \geq k\) smooth “functions”.

Use the formulation above to “fit” PCA with the constraint that the rows of \(B\) are contained in some \(K\)-dimensional subspace \(L\) of \(\mathbb{R}^p\).

The milk data, described here contains near infrared spectroscopy (NIR) signals measured on 9 different samples of milk. In this case, each row of the data corresponds to a signal measured at 601 uniformly spaced NIR frequencies (which you can take to be the interval [0,1]). The analysis presented in the link above suggests the derivatives of the spectra contain much of the information, hence the authors first estimate smooth derivatives and then run PCA on the smoothed data. Your task is to compute a “smooth PCA” on the discrete derivatives for the data. If

Xis our data, then we can compute these discrete derivatives with:

t(apply(X, 1, diff))

As for the linear subspace, we’ll take a set of natural cubic splines:

library(splines)

smooth_basis = ns(seq(0, 1, length=600), df=12)

You can read about natural splines in Ch. 5 of ESL II.

Compare the first three loadings (plotted as functions of the frequency index) to those obtained by PCA without smoothing. Does smoothing add anything here?

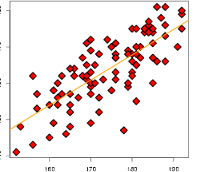

Plot the smooth PCA scores for each observation for the first two components, and color the points according to

labels. Compare this to the same plot without smoothing. Does smoothing add anything here?Compare your plot to the plot proposed in the link. If you’re ambitious you could compare your solution to the one in the code provided in the link.