Introduction#

Download#

Common tasks#

Data matrix#

Basic data block: data matrix \(X \in \mathbb{R}^{n \times p}\).

Usual model: \(X_i=X[i] \sim F\).

The exam score data#

library(MVT)

data(examScor)

head(examScor)

Loading required package: fastmatrix

| mechanics | vectors | algebra | analysis | statistics | |

|---|---|---|---|---|---|

| <dbl> | <dbl> | <dbl> | <dbl> | <dbl> | |

| 1 | 77 | 82 | 67 | 67 | 81 |

| 2 | 63 | 78 | 80 | 70 | 81 |

| 3 | 75 | 73 | 71 | 66 | 81 |

| 4 | 55 | 72 | 63 | 70 | 68 |

| 5 | 63 | 63 | 65 | 70 | 63 |

| 6 | 53 | 61 | 72 | 64 | 73 |

One sample problem#

Let \(\mu \in \mathbb{R}^p, \Sigma \in \mathbb{R}^{p \times p}\).

Do these data come from distribution \(N(\mu, \Sigma)\)?

Or, \(F \overset{?}{=} N(\mu,\Sigma)\)?

Picking a statistic#

null_mean = rep(50, 5)

sample_mean = apply(examScor, 2, mean)

T = sum((null_mean-sample_mean)^2)

Is \(T\) big?

Is it the “right” statistic?

Two sample problem#

Two data matrices: \(X_1 \in \mathbb{R}^{n_1 \times p}, X_2 \in \mathbb{R}^{n_2 \times p}\).

Basic model: \(X_1[i] \sim F_1\), \(X_2[i] \sim F_2\).

Usual question: \(F_1 \overset{?}{=} F_2\)?

Picking a statistic#

Let’s artificially made a group here: 1:50 in group 1, 51:88 in group 2.

X1 = examScor[1:50,]

X2 = examScor[51:88,]

sum((apply(X1, 2, mean) - apply(X2, 2, mean))^2) # is this big? is this the right statistic?

Multi sample problem#

Many data matrices: \(X_k \in \mathbb{R}^{n_k \times p}, 1 \leq k \leq K\).

Basic model: \(X_k[i] \sim F_k\)

Usual question: \(F_k \overset{?}{=} F \ \forall k\)?

Regression#

Response matrix: \(Y \in \mathbb{R}^{n \times q}\)

Design matrix: \(X \in \mathbb{R}^{n \times p}\)

Regression with multivariate response#

Usual task:

What if errors are correlated?

Penalized form?

Frobenius norm#

Plays role of squared error in multivariate problems

Corresponds to inner product:

Multiple-response regression#

Y = as.matrix(examScor[, c('mechanics', 'vectors')])

X = as.matrix(examScor[, c('algebra', 'statistics', 'analysis')]) # use X to predict Y...

M = lm(Y ~ X)

M

Call:

lm(formula = Y ~ X)

Coefficients:

mechanics vectors

(Intercept) -5.96774 13.02915

Xalgebra 0.82101 0.64325

Xstatistics 0.03887 0.02500

Xanalysis 0.03712 0.08471

Inference in multiple-response regression#

summary(M)

Response mechanics :

Call:

lm(formula = mechanics ~ X)

Residuals:

Min 1Q Median 3Q Max

-37.057 -6.921 0.556 10.569 35.962

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) -5.96774 7.91325 -0.754 0.45287

Xalgebra 0.82101 0.23493 3.495 0.00076 ***

Xstatistics 0.03887 0.12805 0.304 0.76220

Xanalysis 0.03712 0.15809 0.235 0.81493

---

Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

Residual standard error: 14.88 on 84 degrees of freedom

Multiple R-squared: 0.3006, Adjusted R-squared: 0.2756

F-statistic: 12.03 on 3 and 84 DF, p-value: 1.251e-06

Response vectors :

Call:

lm(formula = vectors ~ X)

Residuals:

Min 1Q Median 3Q Max

-41.816 -6.018 1.242 7.135 18.279

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 13.02915 5.61221 2.322 0.022678 *

Xalgebra 0.64325 0.16661 3.861 0.000221 ***

Xstatistics 0.02500 0.09082 0.275 0.783769

Xanalysis 0.08471 0.11212 0.756 0.452054

---

Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

Residual standard error: 10.56 on 84 degrees of freedom

Multiple R-squared: 0.3776, Adjusted R-squared: 0.3554

F-statistic: 16.99 on 3 and 84 DF, p-value: 1.034e-08

Inference in multiple-response regression#

library(car)

Manova(M)

Loading required package: carData

Type II MANOVA Tests: Pillai test statistic

Df test stat approx F num Df den Df Pr(>F)

X 3 0.44131 7.9277 6 168 1.554e-07 ***

---

Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

Classification#

Response matrix: \(Y \in \mathbb{R}^{n \times K}\): each row of \(Y\) is 1-hot – indicator of category

Design matrix: \(X \in \mathbb{R}^{n \times p}\)

Classification beyond binary#

Usual question: for some classification loss

Generative classifiers#

LDA, QDA, Naive Bayes

Discriminative#

Multinomial logistic + variants

RKHS methods (inference?)

Linear Discriminant Analysis#

data(iris)

library(MASS)

lda(Species ~ ., data=iris)

Call:

lda(Species ~ ., data = iris)

Prior probabilities of groups:

setosa versicolor virginica

0.3333333 0.3333333 0.3333333

Group means:

Sepal.Length Sepal.Width Petal.Length Petal.Width

setosa 5.006 3.428 1.462 0.246

versicolor 5.936 2.770 4.260 1.326

virginica 6.588 2.974 5.552 2.026

Coefficients of linear discriminants:

LD1 LD2

Sepal.Length 0.8293776 -0.02410215

Sepal.Width 1.5344731 -2.16452123

Petal.Length -2.2012117 0.93192121

Petal.Width -2.8104603 -2.83918785

Proportion of trace:

LD1 LD2

0.9912 0.0088

Matrix factorization#

Find \(U, D, V\) such that $\( X = U D V^T \)$

PCA the “most popular” exploratory technique… SVD in disguise

SVD#

svd_scor = svd(examScor)

names(svd_scor)

dim(svd_scor$u)

length(svd_scor$d)

dim(svd_scor$v)

- 'd'

- 'u'

- 'v'

- 88

- 5

- 5

- 5

PCA#

prcomp(examScor)

Standard deviations (1, .., p=5):

[1] 26.210490 14.216577 10.185642 9.199481 5.670387

Rotation (n x k) = (5 x 5):

PC1 PC2 PC3 PC4 PC5

mechanics -0.5054457 -0.74874751 0.2997888 0.296184264 0.07939388

vectors -0.3683486 -0.20740314 -0.4155900 -0.782888173 0.18887639

algebra -0.3456612 0.07590813 -0.1453182 -0.003236339 -0.92392015

analysis -0.4511226 0.30088849 -0.5966265 0.518139724 0.28552169

statistics -0.5346501 0.54778205 0.6002758 -0.175732020 0.15123239

Proportional asymptotics#

We’ll explore some properties of PCA and related methods as \(n, p\) both grow with \(n/p \to \gamma\)

(Maybe) some implications for regression and other methods under proportional asymptotics

Matrix approximation#

Find \(\hat{U}, \hat{D}, \hat{V}\) such that

Other methods for matrix approximation#

Low-rank approximation to \(X\) (low-rank SVD)

Structure in \(\hat{U}, \hat{V}\)? (Non-negative matrix factorization, sparse PCA, …)

Covariance matrix approximation#

Diagonalize

Factor analysis#

Factor analysis models as low-rank + diagonal

Canonical correlation analysis (CCA)#

Find common structure in different feature sets#

Given groups of measurements \(Y, X\) on same individuals (so data matrix is \(\mathbb{R}^{n \times (n_Y + n_X)}\).

CCA#

Example: \(Y\) is a set of phenotypes, \(X\) is a set of genes.

Want to find functions of \(Y\) related to functions of \(X\):

Common structure in different feature sets#

Given groups of measurements \(X_1, \dots, X_2, \dots, X_K\) on same individuals (so data matrix is \(\mathbb{R}^{n \times (n_1 + \dots + n_K)}\).

Want to find functions of \(X_k\)’s that are all highly “related” to one another.

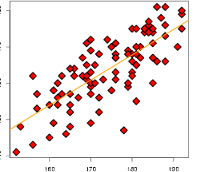

Visualization: low-dimensional projection#

Given \(X \in \mathbb{R}^{n \times p}\), find \(\hat{X} \in \mathbb{R}^{n \times k}\) with \(k \ll p\) such that

Low-dimensional projection#

Examples: principal component scores (biplot), t-SNE, “manifold learning”

Low-dimensional projection using only distance#

Given pairwise “distances” \(D \in \mathbb{R}^{n \times n}\) find \(\hat{X} \in \mathbb{R}^{n \times k}\) with \(k\) small such that

Multidimensional scaling#

Clustering#

Given \(X \in \mathbb{R}^{n \times p}\), partition \(\{1, \dots, n\}\) into “homogeneous” clusters.

Often can be done using only pairwise distances \(D \in \mathbb{R}^{n \times n}\).

Hierarchical clustering#

Mixture modeling#

Soft version of \(K\)-means

EM algorithm

Mixed models (time permitting)#

Random effects: \(\alpha \sim N(0, \bar{\Sigma})\)

EM approach

Connection to ridge, GAM, other kernels

Inference?

Generalized linear mixed models (time permitting)#

Logistic#

Random effects: \(\alpha \sim N(0, \bar{\Sigma})\)

Difficulty: likelihood doesn’t have a closed form…

Inference?