RKHS#

Download#

Reproducing kernel Hilbert spaces (RKHS)#

Here, we briefly cover some properties of RKHS that are well-known and potentially useful.

Kernels are used to produce new (hopefully interesting) features on some existing feature space \(T\).

For concreteness, let’s think \(T=\mathbb{R}^p\).

Covariance kernel#

The key ingredient is a covariance kernel \(K:T \times T \mathbb{R}\)

\(K(t,s) = K(s,t)\)

Non-negative definiteness: for any \((a_1,\dots,a_k) \in \mathbb{R}, (t_1, \dots, t_k) \in T\):

RKHS#

Feature map#

For every \(t \in T\), define \(K_t:T \to \mathbb{R}\) by

The RKHS \({\cal H}\) is formed by linear combinations of the form

Inner product#

Define (this is the reproducing aspect)

We see that

Completion#

Call all finite linear combinations \({\cal H}_0\).

The RKHS \({\cal H}\) is the completion of \({\cal H}_0\) (i.e. equivalence classes of Cauchy sequences in \({\cal H}_0\)).

Evaluation#

For any \(h \in {\cal H}\) and any \(t \in T\)

Proof: verify on \({\cal H}_0\), extend to \({\cal H}\).

Example: linear kernel#

Take \(T=\mathbb{R}^p\) and any \(\Sigma \geq 0 \in \mathbb{R}^{p \times p}\)

For \(v \in \text{row}(\Sigma)\) define

We see that \({\cal H}\) is \(\text{row}(\Sigma)\) equipped with the inner product

Example: piecewise linear kernel#

Take \(T=[0,1]\) and

I.e.

This is the completion of

with

The feature maps are piecewise linear functions…

Example: piecewise cubic kernel#

Take \(T=[0,1]\) and

We recognize the smoothing spline penalty

The feature maps are piecewise cubic functions…

What is the corresponding kernel?

Gaussian process#

To see what the corresponding kernel is, it is helpful to consider another object associated to \(K\)

For any covariance kernel \(K\), we can associate a Gaussian process \(Z:T \times \Omega \rightarrow \mathbb{R}\):

Piecewise linear kernel the process is Brownian motion on \([0,1]\), let’s call it \(B\).

Piecewise cubic kernel

Straightforward to check that its covariance is piecewise cubic…

Kernel trick (aka Representer theorem)#

Let \({\cal T}=(t_1, \dots, t_n)\) denote any finite set in \(T\)

Associated to \({\cal T}\) are:

Orthogonality / interpolation#

For any \(h \in {\cal H}\) and any \(t_i \in {\cal T}\)

For any function \(\ell\) of the fitted values for \(h \in {\cal H}\)

Implications#

\(\implies\)

\(\implies\) suffices to solve the finite dimensional problem

Functions in \(L_{\cal T}\) can be parameterized as

Bases for an RKHS#

Mercer’s theorem / Karhunen Loeve expansion#

Take \(T\) to be a bounded subset of \(\mathbb{R}^p\) with Lebesgue measure \(\Lambda_T\) and consider

We can view \(\bar{K}:L^2(\Lambda_T) \to L^2(\Lambda_T)\) which admits an eigen-decomposition

Formally (sums over index \(i\) may be finite or infinite)

Multiple expansions#

Replace Lebesgue measure with a probability measure \(F\) with density \(f\) whose support is all of \(T\):

We now have \(\bar{K}_f:L^2(F) \to L^2(F)\) with a similar eigen-decomposition

Which is preferable?#

Computationally, one may want to use a low-rank approximation to \(K\)…

We’d expect Lebesgue expansion \(L^2(\Lambda_T \times \Lambda_T)\) while the above would be preferable for approximation in \(L^2(F \times F)\)

Realizing the Gaussian process#

Let \(\xi_i(\omega) \overset{IID}{\sim} N(0,1)\)

Given a basis \(e_{i,F}\) from such an expansion (or any other ONB) set

As \(e_{i,F}(t) = \langle e_{i,F}, K_t \rangle_{\cal H}\) we can define, for any \(h \in {\cal H}\)

Orthonormal basis for \({\cal H}\)#

Given any orthonormal basis \((\varphi_i)_{1 \leq i \leq dim({\cal H})}\) of \({\cal H}\) we see

we can realize the law as

Conversely, for countable index set \({\cal I}\), suppose

Then, \((\varphi_i)_{i \in {\cal I} }\) is an ONB for \({\cal H}\) determined by \(K\). The corresponding process is

\(\implies\) both \((\lambda_i^{1/2} e_{i})\) and \((\lambda_{i,F}^{1/2} e_{i,F})\) are ONB for \({\cal H}\).

A statistical problem#

Suppose we want to approximate the function \(Z\) by its projection onto a single function \(g \in L^2(\Lambda_T)\) (\(\|g\|^2_{L^2(\Lambda_T)}=1\)):

We’ll measure accuracy by

Minimizing over \(g\) (unsurprisingly?) is seen to be the same as

Solution is to take top Karhunen-Loeve eigenfunction \(e_{1,\Lambda_T}\)

Generalizes to projection onto a subspace of dimension \(k\).

Swapping \(\Lambda_T\) to \(F\) yields \(e_{1,F}\)

Connections to PCA #1: Functional PCA#

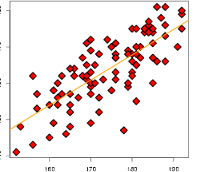

Image credit: Towards data science

For functional data analysis, the observations are functions

Connections to PCA #2: Kernel PCA#

Given a sample \(X_i \overset{IID}{\sim} F\) and kernel \(K\), consider

Resulting eigenproblem (see 14.5.4 in ESL)

with \(G_{ij} = K(X_i, X_j)\).

This is (the population version of) a low-rank perturbation of the original kernel… corresponding process is